Quick Summary: This guide is for fleet managers, safety directors, and technologists who want to understand AI video telematics software in 2026. It walks through the tech stack, safety benefits, real-world examples from some of the world’s largest fleets, an implementation plan, ROI calculation, and privacy considerations. The guidance is actionable whether you run a small fleet of 10 vehicles or a large one of 10,000.

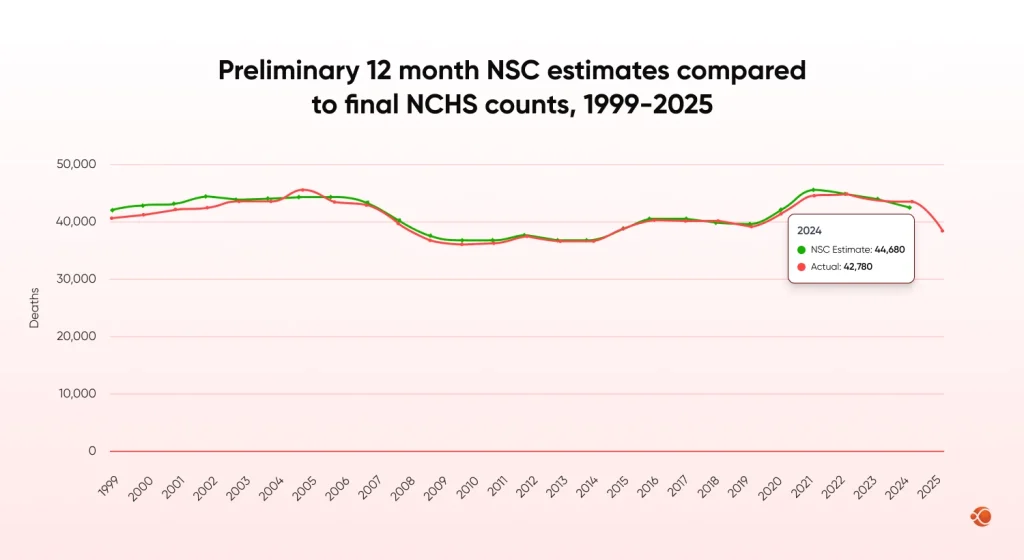

AI-powered video telematics has moved beyond the pilot phase and into production-ready infrastructure. Initial 2025 data from the FMCSA’s carrier safety measurement system indicates that motor vehicle fatalities in the United States have fallen 12% to 37,810. Safety technology played an important role in that reduction. However, the remaining toll still represents an enormous cost for businesses of all sizes.

In 2026, edge AI maturity, 5G connectivity, and tightening regulations are aligning to make AI-powered dashcams and driver monitoring systems a competitive necessity rather than an optional upgrade. This guide gives you the framework to act.

Read on to get answers to these questions:

- What is AI Video Telematics, and how does it differ from GPS tracking?

- Why is 2026 the year for fleet-wide deployment of AI Video Telematics?

- How does Edge AI eliminate the need for costly data plans for real-time driver alerts?

- What safety benefits does AI Video Telematics provide, including collision avoidance, fatigue detection, and distraction detection?

- What key performance indicators are most important, and how does one calculate the ROI?

- How have UPS, Werner Enterprises, Amazon Logistics, and Samsara deployed it at scale?

- How do you run a pilot and scale deployment successfully?

- What privacy laws, GDPR rules, and FMCSA obligations apply?

- How does CMARIX help fleets build custom AI video telematics software?

What AI Video Telematics Is and Why 2026 Is the Turning Point

AI video telematics combines computer vision, vehicle sensors, and real-time analytics to detect driving risks and improve safety. In 2026, maturing edge AI and regulations from the Federal Motor Carrier Safety Administration (FMCSA) are expected to accelerate its adoption.

Four Core Components

An AI video telematics system pairs video recording with real-time AI inference. A contemporary system is built on the following four layers:

- Cameras in the cabin and outside the cabin. High-definition dual-channel cameras capture the driver and the road simultaneously.

- ADAS sensors. Forward collision warning, lane departure warning, and blind spot warning signals are activated regardless of cloud connectivity. Proper ADAS calibration software ensures sensor accuracy is maintained after every windshield repair or vehicle service.

- Edge AI processors. Edge devices are used to process AI inferences in real time with sub-second latency. CMARIX develops custom TensorFlow Lite models for edge devices.

- Cloud analytics. Dashboarding layers correlate video events with GPS, ELD, and fuel data to create safety intelligence.

Why 2026 Is the Tipping Point for AI Video Telematics

| Driver | What Changed | Fleet Impact |
| --- | --- | --- |
| Hardware maturity | Dashcam chips reached commercial scale | Affordable, easy to install at volume |
| 5G expansion | HD video streaming viable on most corridors | Richer analytics, lower data cost |
| AI model availability | Pre-trained behavior models broadly accessible | Faster deployment, lower dev cost |
| Regulatory pressure | FMCSA and EU raising safety reporting floors | Video evidence becoming a compliance necessity |
| Insurance incentives | Carriers offering verified premium rebates | Direct financial return on deployment cost |

AI video telematics is shifting from an optional fleet technology to a vital operational need. Advances in cameras, edge AI chips, and network infrastructure are making widespread adoption feasible and cost-effective. At the same time, safety regulations and insurance programs are pushing fleets toward AI-powered driver monitoring and verification systems.

The Federal Motor Carrier Safety Administration is raising safety reporting standards for the transportation industry, and insurance companies are offering quantifiable rewards to fleets that use verified AI video telematics safety solutions. With the availability of pre-trained AI models for driver behavior detection and the expansion of 5G network coverage, AI video telematics is expected to transition from an optional technology to a standard fleet management tool in 2026.

AI Video Telematics vs. GPS Telematics

GPS answers: “Where was the truck?”

AI video telematics answers: “What was the driver doing before the near-miss, and how do we prevent it from happening again?”

Here are the main differences between AI video telematics and GPS telematics:

| Capability | GPS Telematics | AI Video Telematics |
| --- | --- | --- |
| Driver behavior detection | No | Yes, real-time |
| Fatigue and distraction alerts | No | Yes, DMS-based |
| Video evidence for claims | No | Yes, timestamped |
| Predictive risk scoring | No | Yes, ML-driven |
| Insurance premium integration | Limited | Yes, data-verified |

The GPS telematics system primarily focuses on vehicle tracking, routes, mileage, and location history. However, this system does not provide insights into driver behavior or the root causes of safety incidents.

AI video telematics systems use computer vision and machine learning to interpret events in and around the vehicle. This lets fleets detect risky driver behavior and act on it, improving safety. Regulatory guidance from the FMCSA has also encouraged adoption of more sophisticated driver safety monitoring systems.

The Technology Stack Behind Successful AI Video Telematics Software

Edge AI: Lower Latency, Lower Cost

Edge AI dashcam integration is the defining hardware shift of 2026. On-device inference cuts latency below 100 ms, reduces cellular bandwidth by 60-80 percent, and keeps safety functions running offline. CMARIX uses TensorFlow Lite to build these edge-optimized models for fleet clients, and treats scalability, up-to-date features, and strong security as priorities when building fleet management systems.

Improved AI Models

State-of-the-art YOLO and transformer models have achieved over 97% accuracy in detecting pedestrians and vehicles, even under adverse lighting conditions. In-cabin models built on facial landmarks detect microsleep, phone usage, gaze deviation, seatbelt violations, and similar events. Multi-camera fusion correlates the front, rear, and cabin views to produce a description of each incident.
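One widely used landmark-based signal for eye closure is the eye aspect ratio (EAR). The sketch below is illustrative only and is not taken from any vendor’s model: it assumes six (x, y) landmarks per eye ordered p1 to p6, and the 0.2 threshold, 30 fps frame rate, and one-second duration are hypothetical values that would need tuning per camera and per driver.

```python
import math

def eye_aspect_ratio(eye):
    """EAR for six (x, y) landmarks ordered p1..p6 around one eye.

    p1 and p4 are the horizontal corners; p2, p3 lie on the upper lid
    and p6, p5 on the lower lid. The ratio drops toward 0 as the eye closes.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)

def longest_closed_run(ear_values, threshold=0.2):
    """Longest run of consecutive frames whose EAR is below the threshold."""
    longest = current = 0
    for ear in ear_values:
        current = current + 1 if ear < threshold else 0
        longest = max(longest, current)
    return longest

def is_microsleep(ear_values, fps=30, threshold=0.2, min_seconds=1.0):
    """Flag a possible microsleep when the eyes stay closed for min_seconds or more."""
    return longest_closed_run(ear_values, threshold) >= fps * min_seconds
```

A production system would typically combine a signal like this with head pose and gaze estimates rather than rely on a single threshold.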

5G Connectivity and Fleet Platform Integration

With the advent of 5G mid-band coverage, it is now possible to stream real-time HD clips on most commercial routes. H.265/HEVC codecs are used to reduce the file sizes of the clips to be uploaded to the cloud without compromising on quality. CMARIX’s engineering practice includes implementing real-time transport tracking technologies using event-driven architectures and API integrations with ELD, FMS, and insurance platforms.

Direct Safety Benefits and Practical Use Cases

Collision Prevention and Near-Miss Detection

Forward collision warning gives drivers a real chance to intervene because it can detect a hazard in 200 ms or less. Near-miss detection matters just as much, since it captures large numbers of high-risk incidents that never appear in a report. Fleets using deployed AI video telematics solutions can expect 20 to 40% fewer at-fault collisions within 12 months. Near misses also occur 100-300 times more frequently than collisions, which makes near-miss frequency the most significant leading safety metric.
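A common way to turn a forward-camera distance estimate into a warning or a near-miss flag is time-to-collision (TTC): the gap to the lead vehicle divided by the closing speed. The sketch below assumes both vehicles hold their current speed; the function names and the 2.5 s and 1.0 s thresholds are hypothetical, not values from any specific product.

```python
def time_to_collision(gap_m, own_speed_mps, lead_speed_mps):
    """Seconds until impact at constant speeds, or None if the gap is not closing."""
    closing_speed = own_speed_mps - lead_speed_mps
    if closing_speed <= 0:
        return None
    return gap_m / closing_speed

def classify_event(gap_m, own_speed_mps, lead_speed_mps,
                   warn_ttc_s=2.5, near_miss_ttc_s=1.0):
    """Map a TTC value to 'clear', 'warning', or 'near_miss' (illustrative thresholds)."""
    ttc = time_to_collision(gap_m, own_speed_mps, lead_speed_mps)
    if ttc is None:
        return "clear"
    if ttc <= near_miss_ttc_s:
        return "near_miss"
    if ttc <= warn_ttc_s:
        return "warning"
    return "clear"
```

Logging the `near_miss` outcomes, not only collisions, is what makes near-miss frequency available as a leading indicator.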

Driver Coaching, Fatigue, and Distraction Monitoring

With automated event detection, 45-minute weekly debriefs become 10-minute coaching sessions. Driver Monitoring Systems (DMS) use near-infrared cameras to detect the onset of fatigue about 20 minutes before drivers themselves acknowledge it. Distraction detection, covering phone use, eating, and lane deviation, runs with frame-level accuracy. CMARIX builds mobile apps for drivers that integrate the coaching dashboard and training content.

Post-Incident Analysis and Liability Reduction

Timestamped video is paired with the vehicle’s location, its speed, and any abrupt stops. This information enables claims to be processed within days rather than months.

The Insurance Institute for Highway Safety (IIHS) points out that verified camera footage lets fleets resolve claims more quickly, resulting in lower settlement amounts when the footage clearly establishes fault, one way or the other.

Real-World AI Video Telematics Deployments by Major Fleet Operators

Major fleet operators are already using AI video telematics on a large scale to enhance safety and driver accountability. Below are some examples of how major logistics operators are using AI video cameras in 2024 and 2025.

| Company | Deployment Focus | Key AI Capability | Safety Impact |
| --- | --- | --- | --- |
| UPS | Prevent backing accidents in urban delivery routes | Multi-angle AI cameras detect obstacles during reverse and trigger in-cab alerts | Reduced backing accidents and faster insurance claim resolution using video evidence |
| Werner Enterprises | Fleet-wide driver coaching for Class 8 trucks | AI video telematics with rolling driver safety scores and event detection | Lower preventable accidents per million miles and improved safety score profile with proactive coaching |
| Amazon Logistics | Risk monitoring for last-mile delivery fleets | Real-time trip scoring for speeding, harsh braking, distractions, and tailgating | Improved driver accountability and long-term reduction in at-fault crashes through AI coaching programs |

UPS: Cutting Backing Accidents with Multi-Angle AI Cameras

To address the high number of urban delivery accidents caused by backing, UPS installed multi-angle AI camera systems in its delivery vehicles. These systems detect obstacles while the vehicle is in reverse and use an on-board AI model to warn the driver in the cab seconds before a potential impact. According to UPS, its pilot program produced a significant reduction in backing accidents, so the company expanded it to other depots. Video evidence has also shortened the time it takes to settle insurance claims.

Werner Enterprises: Proactive Coaching at Scale

To move from reactive safety management to proactive safety coaching, Werner Enterprises implemented an AI-based video telematics system across its Class 8 truck fleet. Drivers receive rolling safety scores that are visible to both the driver and their coach. Once the program matured, Werner Enterprises saw a decrease in preventable accidents per million miles and an improvement in its overall FMCSA CSA score profile. In addition, conversations between coaches and drivers shifted from punitive incident reporting to evidence-based coaching.

Amazon Logistics: Trip-Level Scoring for Last-Mile Delivery

To address the high pedestrian and cyclist exposure of urban last-mile deliveries, Amazon Logistics deployed AI dashcam systems across its Delivery Service Partner fleet. Each trip is scored in real time for speeding, hard braking, harsh acceleration, hard cornering, distractions, and tailgating. Aggregated scores feed a driver ranking system used for route allocation. A 2024 analysis, based on patterns established by the Insurance Institute for Highway Safety, found that driver programs using AI coaching produce sustained reductions in at-fault crashes over 12-month periods.

Bonus: Samsara: Cross-Industry Safety Outcomes at Scale

In its Fleet Safety Report for 2025, Samsara analyzed data from thousands of fleets that have used its AI Video product. The analysis reported the following results:

| Metric | Baseline / Early Deployment | AI Platform Outcome |
| --- | --- | --- |
| Crash rate (short term) | Normal crash levels before AI monitoring | Reduced by 37% within 6 months |
| Crash rate (long-term) | Typical industry accident rates | Reduced by about 73% over 30 months |
| Harsh driving events | Frequent sudden braking, acceleration, and risky maneuvers | 48% drop in 6 months, 69% drop in 30 months |
| Mobile phone use while driving | Drivers occasionally using phones behind the wheel | 84% reduction in 6 months, reaching 96% reduction over 30 months |

The same pattern appears in every deployment: edge AI enables real-time in-cab alerts, coaching workflows give drivers useful feedback, trip-level scoring tracks driving habits, and captured video feeds the claims process. Together these let fleets improve safety and speed up accident resolution.

CMARIX builds custom AI video telematics software, driver apps, and backend data pipelines for fleet operators.

The Fleet Safety KPIs That Actually Predict Risk in 2026

| KPI | Type | Target |
| --- | --- | --- |
| Crash rate per million miles | Lagging | Below FMCSA fleet average for your segment |
| Near-miss frequency per 1,000 hours | Leading | Down 20% within 90 days of deployment |
| Coaching completion rate | Process | Above 80% within 72 hours of flag |
| Driver safety score distribution | Leading | No driver below 60th percentile for 3+ months |
| False positive alert rate | Quality | Below 15% of all flags |
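Most of the KPIs in the table reduce to simple ratios. The following is a minimal sketch of how they could be computed from raw counts; the function names and input shapes are assumptions made for illustration, not a reporting standard.

```python
def crash_rate_per_million_miles(crashes, miles):
    """Lagging KPI: crashes normalised to one million miles driven."""
    return crashes * 1_000_000 / miles

def near_miss_per_1000_hours(near_misses, driving_hours):
    """Leading KPI: near-miss events normalised to 1,000 driving hours."""
    return near_misses * 1_000 / driving_hours

def coaching_completion_rate(hours_to_coaching, max_hours=72):
    """Share of flags coached within max_hours.

    hours_to_coaching holds one entry per flag: the hours elapsed until
    coaching happened, or None if the flag was never coached.
    """
    if not hours_to_coaching:
        return 0.0
    on_time = sum(1 for h in hours_to_coaching if h is not None and h <= max_hours)
    return on_time / len(hours_to_coaching)

def false_positive_rate(false_flags, total_flags):
    """Quality KPI: share of all flags that reviewers marked as false."""
    return false_flags / total_flags if total_flags else 0.0
```

For example, 3 crashes over 4,000,000 miles gives 0.75 crashes per million miles, and 30 false flags out of 250 gives a 12% false positive rate, which would sit under the 15% target.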

Predictive Risk Scoring and Dashboard Design

CMARIX’s enterprise AI software solutions include predictive risk engines. These engines analyze a rolling 90-day history of driver events, map route-level risk by segment and time of day, and estimate each driver’s fatigue level against their DMS baseline.
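To make the rolling 90-day history concrete, here is a deliberately simple sketch of a windowed, severity-weighted score. It is not CMARIX’s engine: the event names, the weights, and the per-1,000-mile normalisation are all hypothetical, and it leaves out the route-level and fatigue-baseline inputs described above.

```python
from datetime import date, timedelta

# Hypothetical severity weights; a real engine would learn or calibrate these.
EVENT_WEIGHTS = {
    "phone_use": 5.0,
    "microsleep": 8.0,
    "hard_braking": 2.0,
    "tailgating": 3.0,
}

def rolling_risk_score(events, as_of, miles_in_window, window_days=90):
    """Weighted events per 1,000 miles over the trailing window.

    events is an iterable of (event_date, event_type) tuples. Events on or
    before the window start date are ignored; unknown types get weight 1.0.
    """
    start = as_of - timedelta(days=window_days)
    weighted = sum(
        EVENT_WEIGHTS.get(kind, 1.0)
        for day, kind in events
        if start < day <= as_of
    )
    if miles_in_window <= 0:
        return 0.0
    return weighted * 1_000 / miles_in_window
```

Normalising by miles keeps high-mileage drivers from being penalised simply for driving more.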

To be effective, dashboards should highlight the drivers and routes that cross a risk threshold, provide separate views for operations and safety, and send a weekly digest to users who will not log in proactively. Dedicated computer vision developers should handle retraining cycles to prevent model degradation after initial deployment.

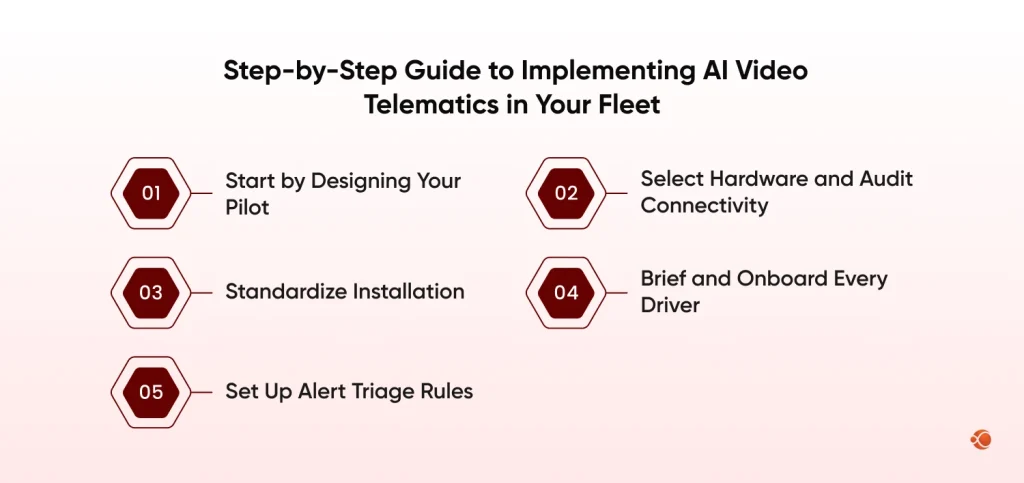

Step-by-Step Guide to Implementing AI Video Telematics in Your Fleet

1. Start by Designing Your Pilot

| Parameter | Recommendation | Why |
| --- | --- | --- |
| Fleet size | 20 to 50 vehicles | Enough data for statistical significance |
| Duration | Minimum 90 days | Shorter pilots lack incident-level data |
| Driver selection | Mix of high-incident and baseline performers | Enables meaningful comparison |
| Success criteria | Pre-defined before pilot begins | Avoids post-hoc rationalization |

2. Select Hardware and Audit Connectivity

Compare each candidate dashcam’s specifications against your requirements for camera angles and night vision, and decide whether the device needs tamper resistance. Assess cellular signal strength along all primary routes and the surrounding areas, and make sure edge devices have enough storage to buffer footage when the signal is weak or absent.
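Buffering through weak-signal stretches is usually handled with a store-and-forward queue on the device. The sketch below shows the idea under stated assumptions: the class name, the drop-oldest policy, and the `upload` and `connected` callables are hypothetical stand-ins for whatever the device firmware actually provides.

```python
from collections import deque

class StoreAndForwardBuffer:
    """Keeps event clip ids on the device until a connection is available."""

    def __init__(self, capacity):
        # Assumption: once capacity is reached, the oldest clip is dropped first.
        self._queue = deque(maxlen=capacity)

    def record(self, clip_id):
        self._queue.append(clip_id)

    def pending(self):
        return list(self._queue)

    def flush(self, upload, connected):
        """Upload queued clips in order while connected; return the uploaded ids."""
        sent = []
        while self._queue and connected():
            clip_id = self._queue[0]
            if not upload(clip_id):
                break  # keep the clip queued for the next attempt
            self._queue.popleft()
            sent.append(clip_id)
        return sent
```

Sizing `capacity` comes down to the longest expected coverage gap on your routes multiplied by the expected event rate, which is why the connectivity audit precedes hardware selection.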

CMARIX develops driver apps that work in low-signal areas. We have built real-time fleet management solutions, including a real-time mobile school bus tracking app, that provide live vehicle tracking, routing visibility, and passenger coordination for connected transport systems.

These projects demonstrate how a robust back-end system and a mobile application can support specialized fleets while maintaining data integrity and synchronization.

Modern fleets often combine AI dashcams with fleet management software development platforms that centralize driver behavior data, GPS tracking, compliance records, and telematics analytics into a single operational dashboard.

3. Standardize Installation

Before signing off on any installed camera system, make sure there are installation SOPs, quality assurance photo checklists, and verification of every camera mounting angle. Improper mounting is the leading cause of model accuracy issues in production. Every final sign-off should involve two people: the installer and a safety team representative acting as verifier.

4. Brief and Onboard Every Driver

Before operations begin, talk with every driver individually about what will be monitored, what will not be monitored, how the information will be used for coaching rather than discipline, and how the camera serves safety rather than surveillance.

Drivers are more readily motivated to change their behaviour by incentives, such as rewards for high safety scores, than by punishment, which often takes longer to produce behavioural change.

5. Set Up Alert Triage Rules

Alert fatigue kills adoption. A system firing 50 alerts per vehicle per day will be ignored within two weeks. Before going live, define your three-tier triage rules so every alert has a clear owner and response time:

| Tier | Event Types | Response | Timeframe |
| --- | --- | --- | --- |
| 1 (Automated) | Lane departure, following distance | In-cab alert only | Immediate |
| 2 (Manager Review) | Phone use, microsleep, hard braking | Coaching session scheduled | Within 24 hours |
| 3 (Escalation) | Collision, unauthorized vehicle use | Safety director notified | Immediate |
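The three tiers can be expressed as a small lookup table so that every event type has exactly one owner and response time. This is a sketch of that mapping; the event identifiers and the fallback rule for unknown event types are assumptions added for illustration.

```python
from typing import NamedTuple

class TriageRule(NamedTuple):
    tier: int
    response: str
    timeframe: str

# Event identifiers are hypothetical names for the event types in the table.
TRIAGE_RULES = {
    "lane_departure": TriageRule(1, "In-cab alert only", "Immediate"),
    "following_distance": TriageRule(1, "In-cab alert only", "Immediate"),
    "phone_use": TriageRule(2, "Coaching session scheduled", "Within 24 hours"),
    "microsleep": TriageRule(2, "Coaching session scheduled", "Within 24 hours"),
    "hard_braking": TriageRule(2, "Coaching session scheduled", "Within 24 hours"),
    "collision": TriageRule(3, "Safety director notified", "Immediate"),
    "unauthorized_vehicle_use": TriageRule(3, "Safety director notified", "Immediate"),
}

# Assumption: unrecognised events go to manager review instead of being dropped.
DEFAULT_RULE = TriageRule(2, "Manager review", "Within 24 hours")

def triage(event_type):
    """Return the tier, response, and timeframe for an event type."""
    return TRIAGE_RULES.get(event_type, DEFAULT_RULE)
```

Keeping the rules in data rather than in branching code makes it easy to review them with the safety team before go-live.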

If you complete all five steps before putting your fleet system into production, you will have a technically sound, operational system that drivers feel comfortable using. Many fleets that skip Step 3 (standardized installation) or Step 4 (driver onboarding) find that alerts are less accurate than expected.

Need a Custom Telematics Backend or Driver App?

CMARIX builds edge AI models, backend data pipelines, and mobile driver apps for fleet operators.

From Safety Investment to Financial Return: AI Video Telematics ROI

AI video telematics is both a safety tool and a tool with significant financial impact. When fleets use these systems to reduce risky driving behavior, they also reduce their accident costs, insurance liability, fuel usage, and driver turnover. The result is a system that improves safety while remaining cost-effective and delivering measurable operational savings.

Five Tangible ROI Levers

| ROI Lever | How It Creates Financial Value | Estimated Impact |
| --- | --- | --- |
| Accident cost reduction | Fewer at-fault incidents reduce repair, liability, and downtime costs. | ~25% fewer accidents can save $500,000+ annually for a 100-vehicle fleet (avg. accident cost ~$91K). |
| Insurance premium rebates and reduction | Insurers reward fleets that share verified telematics safety data and show improved driver behavior. | 5–15% lower premiums depending on carrier participation and safety performance. |
| Faster claim resolution | Timestamped video evidence clarifies fault quickly, reducing legal disputes and investigation time. | Claims often settle days or weeks faster, lowering legal and admin costs. |
| Fuel efficiency improvements | AI-driven coaching reduces harsh acceleration and braking, encouraging smoother driving patterns. | 3–7% improvement in fuel economy across most commercial fleets. |
| Driver retention savings | Coaching-focused safety programs improve driver experience and reduce turnover. | Avoid $5,000–$15,000 replacement cost per driver (recruiting, onboarding, training). |

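Accident cost reduction. A 25% drop in at-fault incidents on a 100-vehicle fleet can represent $500,000+ in annual savings, given an average at-fault commercial truck accident cost of $91,000. That figure implies a baseline of roughly 22 at-fault incidents a year (22 × 25% × $91,000 ≈ $500,000); fleets with fewer incidents will save proportionally less.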

Sample Payback Model: 100-Vehicle Fleet

This example illustrates how AI video telematics pays off financially for a mid-sized fleet. The assumptions are deliberately conservative so that the result serves as a realistic baseline.

| ROI Component | Conservative Assumption | Annual Value |
| --- | --- | --- |
| System cost | $50/vehicle/month x 100 vehicles | -$60,000 |
| Accident reduction (25% on 3 incidents at $91K avg) | Conservative baseline | +$68,250 |
| Insurance premium reduction (8% on $400K) | Participating carrier | +$32,000 |
| Fuel savings (4% on $800K annual fuel) | Mixed fleet | +$32,000 |
| Net annual benefit | – | +$72,250 |
| Payback period | – | ~10 months |
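The table’s arithmetic can be reproduced with a few lines of code, which also makes it easy to substitute your own fleet’s numbers. In this sketch the defaults are the table’s assumptions and the function and key names are illustrative. The article does not state how the payback period is defined, so two readings are computed; the table’s figure of about 10 months appears to match annual cost divided by net annual benefit.

```python
def payback_model(vehicles=100, cost_per_vehicle_month=50,
                  incidents_per_year=3, incident_reduction=0.25,
                  avg_incident_cost=91_000,
                  annual_premium=400_000, premium_reduction=0.08,
                  annual_fuel=800_000, fuel_saving=0.04):
    """Reproduce the sample payback table; defaults mirror its assumptions."""
    annual_cost = vehicles * cost_per_vehicle_month * 12
    gross_benefit = (
        incidents_per_year * incident_reduction * avg_incident_cost
        + annual_premium * premium_reduction
        + annual_fuel * fuel_saving
    )
    net_benefit = gross_benefit - annual_cost
    return {
        "annual_cost": annual_cost,
        "gross_benefit": gross_benefit,
        "net_benefit": net_benefit,
        # Reading 1: months of net benefit needed to equal one year of cost.
        "months_cost_over_net": (
            12 * annual_cost / net_benefit if net_benefit > 0 else None
        ),
        # Reading 2: months of gross benefit needed to equal one year of cost.
        "months_cost_over_gross": (
            12 * annual_cost / gross_benefit if gross_benefit > 0 else None
        ),
    }

result = payback_model()
```

With the table’s inputs, the net annual benefit comes to $72,250; the first reading gives just under 10 months and the second about 5.4 months.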

All assumptions behind these projections are conservative. Fleets with a higher baseline accident rate, higher fuel spend, or a more generous insurance incentive program will recoup their investment more quickly. In most cases, fleets achieve full ROI in less than 6 months.

Navigating Privacy Laws and Legal Risks in Fleet Video Monitoring

Fleet operators should consider privacy and regulatory issues from the outset when developing and deploying an AI video telematics solution to manage fleet safety.

Implementing proper governance policies, raising awareness of applicable laws and regulations, and developing processes that protect driver rights while preserving the legal evidentiary value of recorded data will allow fleet operators to create a secure environment for their video telematics system.

Driver Privacy and Data Governance

Before activating any new camera, publish a written policy that sets out retention periods (standard operational retention is 30-60 days for non-event footage), who may access the data, and how the data will be used in the coaching process.
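A retention policy is easier to audit when the deletion rule is written down as code. The following is a minimal sketch under stated assumptions: the function name and parameters are hypothetical, the 30-day default is the low end of the range above, and event footage and footage under legal hold are simply never auto-deleted here.

```python
from datetime import datetime, timedelta

def eligible_for_deletion(recorded_at, now, is_event,
                          legal_hold=False, retention_days=30):
    """True only for non-event footage that has aged past the retention period.

    Assumption: event footage and anything under legal hold is excluded from
    automatic deletion and handled by a separate, longer-lived process.
    """
    if is_event or legal_hold:
        return False
    return now - recorded_at > timedelta(days=retention_days)
```

Whatever values you choose, the rule in code should match the written policy that drivers have been shown.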

Regulatory Obligations in 2026

| Regulation | Jurisdiction | Key Fleet Obligation |
| --- | --- | --- |
| GDPR | EU / EEA | Document lawful basis for video collection of identifiable individuals |
| DSA-GDPR Guidelines (EDPB, 2025) | EU | Automated detection systems must comply with GDPR data minimization rules |
| CCPA / CPRA | California | Biometric DMS data requires specific disclosure and opt-out mechanisms |
| FMCSA HOS / ELD | USA | Video data must be compatible with CSA score documentation |

The EDPB’s 2025 guidelines address the interplay between the Digital Services Act and the GDPR for automated detection systems, which is relevant to AI-powered Driver Monitoring Systems deployed in Europe.

Legal Evidence Handling and Ethical Use

To prove that video files have not been altered, create a hash for each file at the time of recording. Event footage should be exempt from automatic deletion for the duration of the applicable statute of limitations. Legal counsel must review any video before it is made available for discovery. AI scores should be used only as a basis for coaching; any disciplinary action must be based on a human review of the video and its context. Drivers should have the opportunity to view and question their event video.
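Hashing at recording time can be done with a standard cryptographic digest. The sketch below uses SHA-256 from Python’s standard library; the helper names are illustrative, and the choice of SHA-256 is an assumption, since the text does not name an algorithm.

```python
import hashlib

def sha256_of_chunks(chunks):
    """Hex SHA-256 digest of an iterable of byte chunks."""
    digest = hashlib.sha256()
    for chunk in chunks:
        digest.update(chunk)
    return digest.hexdigest()

def sha256_of_file(path, chunk_size=1024 * 1024):
    """Hash a video file in fixed-size chunks so it never loads fully into memory."""
    with open(path, "rb") as f:
        return sha256_of_chunks(iter(lambda: f.read(chunk_size), b""))

def is_unaltered(path, recorded_hash):
    """Compare the file's current digest with the one stored at recording time."""
    return sha256_of_file(path) == recorded_hash
```

A hash only supports an integrity claim if the recorded value is kept somewhere the video’s custodian cannot silently change it, for example a separate write-once log.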

Thinking About AI Digital Transformation for Your Logistics Operation?

Learn how CMARIX helps logistics and transport companies integrate AI into operations at scale.

Top Questions About AI Video Telematics in the Fleet Management Industry

What is AI video telematics software, and how is it different from a dashcam?

A dashcam records but requires manual review. AI video telematics software automatically detects, classifies, and alerts on driver behavior events and aggregates data across a fleet for coaching, analytics, and risk management.

How does mobile telematics unlock vehicle data beyond GPS?

Mobile telematics draws on vehicle sensors, driver behavior signals, and vehicle diagnostics. Beyond location tracking, this provides insight into driving habits, engine health, safety incidents, and operational efficiency.

What is the role of AI in digital transformation for logistics?

AI enables logistics providers to leverage predictive analytics, route optimization, driver safety monitoring, and automated decision-making. These types of AI tools, combined, will allow logistics companies to save money while increasing operational visibility and improving the efficiency of the delivery process.

Can AI video telematics apply to school buses and specialized vehicles?

Yes. AI video telematics can help monitor driver behavior, detect safety risks, and protect students. It benefits school buses and specialized fleets by providing real-time alerts when a driver engages in unsafe behavior, video footage to support claims, and help with compliance with federal, state, and local regulations on student transportation.

How does geolocation integration enhance video telematics for automotive platforms?

Geolocation integration gives video events their context on automotive service platforms. It links driving behaviors, incidents, and routes to the captured video, which makes it possible to identify high-risk areas, adjust routes based on driving behavior, and investigate accidents using location as part of the evidence for the cause.

Conclusion: Act Now or Fall Behind

In 2026, AI video telematics is no longer optional for fleet managers; it is a competitive and safety necessity. The technical barriers have been removed. What differentiates the best fleets is the quality of their implementation. Structured pilot programs, a coaching culture, proactive compliance with privacy laws, and rigorous return-on-investment (ROI) modeling produce compounding safety improvements over time.

The large-scale models have been proven by UPS, Werner Enterprises, Amazon Logistics, and Samsara’s network. The technology is available, the structure is in place, and the financial rationale is clear.

CMARIX delivers end-to-end fleet safety engineering: custom computer vision models, fleet management software development, mobile driver apps, scalable ride-hailing architectures, and backend telematics pipelines. If you are building or upgrading your fleet safety infrastructure, our team is ready to help.

The question for fleet managers in 2026 is no longer whether to deploy AI video telematics, but rather how to do so.

FAQs about AI Video Telematics in Fleet Management

How does AI video telematics reduce fleet insurance costs?

AI video telematics analyzes risky driving behaviors such as hard braking, distraction, and speeding. Fleets reduce accidents through real-time alerts and coaching, improving safety scores and enabling insurers to offer lower premiums.

Is driver-facing camera monitoring legal under GDPR in 2026?

Yes, provided certain conditions are met. Under the General Data Protection Regulation, fleets may use driver-facing cameras if they can demonstrate a legitimate safety purpose, are transparent with drivers, minimize data collection, obtain driver consent, define retention policies for the collected data, and apply reasonable privacy protections.

Can AI dashcams work in remote areas without 5G coverage?

Yes. AI dashcams process footage on Edge AI hardware. Events are detected and stored locally, then uploaded to the cloud once a reliable connection is available, so the cameras keep working without cellular coverage on rural highways and in isolated areas along fleet routes.

What is the difference between passive and active video telematics?

Passive video telematics stores video for later review after incidents occur. Active video telematics continuously analyzes behavior and can send real-time alerts for risky driving, such as following too closely, distractions, or sudden stops.

How long does it take to build a custom video telematics platform?

Depending on the features included (e.g., AI model training, video pipeline architecture, mobile applications, and fleet management system integrations), developing a custom solution typically takes 3-6 months.