At-a-Glance View: The EU AI Act is approaching full enforcement, and if your software touches EU users, you’re in scope regardless of where you’re based. This guide explains the risk classification model, what high-risk AI systems actually require, and who must comply. It closes with a five-phase checklist to help you get ready.

August 2, 2026, isn’t just another regulatory date on the calendar. It’s when the full weight of the EU AI Act lands, and if your software interacts with EU users, it lands on you too.

The EU Artificial Intelligence Act is the world’s first comprehensive legal framework for AI. It entered into force on August 1, 2024, and has been rolling out in phases ever since. Prohibited AI practices became enforceable on February 2, 2025. Obligations for general-purpose AI models applied from August 2, 2025. On August 2, 2026, most of the remaining provisions, including the Annex III high-risk requirements, become enforceable. With the EU committing EUR 4 billion for generative AI development by 2027, the regulatory framework and the investment appetite are moving in lockstep, which makes compliance less of a burden and more of a market entry ticket.

This isn’t optional. It doesn’t matter if you’re headquartered in Mumbai, Austin, or Toronto. If your software serves EU residents, you’re accountable under the law. For software development companies in particular, this creates a real compliance window. Companies that treat this checklist as a genuine roadmap will be ready; those who wait will be scrambling when enforcement begins.

This guide gives you a practical EU AI Act compliance checklist built specifically for software teams, with a phase-by-phase approach to getting audit-ready before the deadline.

EU AI Act: The Essentials at a Glance

- Full enforcement on high-risk AI systems: August 2, 2026

- Applies to any company with EU users, regardless of where it is headquartered

- Stricter rules on data, human oversight, risk, technical documentation, and conformity assessment

- Four categories of risk: Minimal Risk, Limited Risk, High Risk, and Prohibited

- Fines of up to €35 million or 7% of global annual turnover, whichever is higher, for the most serious breaches

- The EU AI Office oversees this regulation at the European level

- This is not a one-time audit; continuous monitoring is a requirement after the AI system is placed on the market.

What is the EU AI Act? A Quick Overview for Software Companies

Think of the EU AI Act as GDPR for artificial intelligence. It doesn’t ban AI; it classifies and regulates it based on risk. The higher the potential harm to people, the stricter the rules.

For software development companies, this matters because AI is embedded into almost everything now: recommendation engines, automated hiring tools, customer-facing chatbots, fraud detection, and medical diagnostic assistance. Each of these falls somewhere on the Act’s risk spectrum.

Key Objectives of the EU AI Act

The Act has three things it’s trying to accomplish.

- First, protect people’s fundamental rights from AI systems that could discriminate, manipulate, or cause harm.

- Second, build trust in AI by requiring transparency; users should know when they’re interacting with an AI system.

- Third, create a common legal standard across all EU member states so companies don’t have to navigate 27 different national laws.

Timeline and Enforcement Milestones

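Here’s how the rollout looks:

- August 1, 2024: the Act enters into force

- February 2, 2025: prohibited AI practices become enforceable

- August 2, 2025: obligations for general-purpose AI models apply

- August 2, 2026: most remaining provisions apply, including the high-risk requirements for Annex III systems

- August 2, 2027: high-risk requirements apply to AI embedded in products covered by Annex I, such as medical devices and machinery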

One caveat: the European Commission’s Digital Omnibus proposal from November 2025 may push some Annex III high-risk obligations back to December 2027, but that extension is not final. Experts advise planning around August 2026, since there is little to no guarantee the extension will be adopted.

Penalties for Non-Compliance

The fines are serious, and they come in tiers:

- The use of prohibited AI systems can result in fines of up to €35 million or 7% of global annual turnover, whichever is higher.

- High-risk AI violations carry fines up to €15 million or 3% of global turnover.

- Providing inaccurate information to authorities? That’s up to €7.5 million or 1%.

For context, the top tier exceeds GDPR’s maximum of €20 million or 4% of global turnover. Regulators clearly mean business.
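
To make the “whichever is higher” rule concrete, here is a minimal Python sketch of the fine ceiling for each tier. The tier keys and the `max_fine_eur` helper are hypothetical, and the SME branch assumes the Act’s rule that smaller companies face the lower of the two amounts. It computes an upper bound only; actual fines are set case by case.

```python
# Minimal sketch of the fine ceilings listed above. Tier keys are hypothetical
# labels; the SME branch assumes the Act's rule that the lower amount applies.
# This computes an upper bound only, not a predicted fine.

FINE_TIERS = {
    # tier: (fixed amount in EUR, share of worldwide annual turnover)
    "prohibited_practice": (35_000_000, 0.07),
    "high_risk_obligation": (15_000_000, 0.03),
    "incorrect_information": (7_500_000, 0.01),
}


def max_fine_eur(tier: str, annual_turnover_eur: float, is_sme: bool = False) -> float:
    """Upper bound of the fine for a violation tier."""
    fixed_amount, turnover_share = FINE_TIERS[tier]
    turnover_amount = annual_turnover_eur * turnover_share
    if is_sme:
        # SMEs and start-ups: whichever amount is lower.
        return min(fixed_amount, turnover_amount)
    # Everyone else: whichever amount is higher.
    return max(fixed_amount, turnover_amount)


# EUR 1 billion turnover: 7% is EUR 70 million, which exceeds the EUR 35 million fixed amount.
print(max_fine_eur("prohibited_practice", 1_000_000_000))
```

For most large companies, the percentage, not the fixed amount, is what sets the ceiling.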

Business Impact: Why Early Compliance is a Competitive Advantage

Here’s something that gets overlooked: compliance isn’t just about avoiding fines.

- Companies that get their AI governance right early will find EU market doors open faster.

- Enterprise clients in banking, healthcare, and government contracting are already asking for compliance documentation before signing deals, whether you’re building fintech AI solutions or selling into another regulated industry.

- Early movers get to shape their processes deliberately.

- Late movers will be retrofitting, which costs more and creates more risk.

Understanding the EU AI Act Risk-Based Classification Model

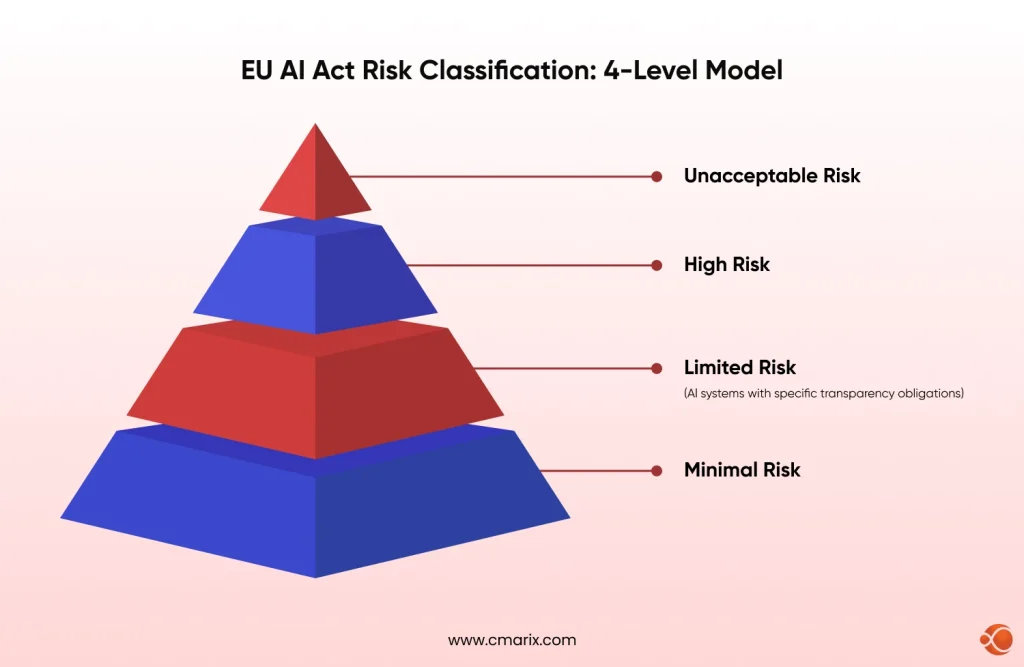

The Act sorts all AI systems into four risk tiers. Where your product lands determines everything: what you need to document, what controls you need, and when enforcement hits.

This classification approach aligns with global frameworks; the OECD AI Principles and UNESCO’s Recommendation on the Ethics of AI both recognize similar risk-based thinking when governing AI systems.

Prohibited AI Systems

These are banned outright. The Act prohibits AI that manipulates people through subliminal techniques, exploits vulnerabilities such as age or disability, enables social scoring, and, with very limited law-enforcement exceptions, uses real-time remote biometric identification in publicly accessible spaces. If your system does any of this, there’s no compliance path. It needs to stop.

High-Risk AI Systems

This is where most software development companies need to pay close attention. High-risk AI system classification under the Act covers systems used in employment (automated CV screening, performance assessment), credit decisions, educational access, and critical infrastructure.

These face the strictest requirements: risk management systems, technical documentation, human oversight mechanisms, data governance, conformity assessments, and CE marking before market entry.

If you’re building tools that touch AI-driven healthcare services, HR automation, or financial decision-making, you’re almost certainly in this tier.

Limited and Minimal Risk Systems

Limited-risk systems mostly need to tell users they’re interacting with AI. Chatbots need to disclose they’re not human. Deepfake content needs to be labeled. That’s the main burden here.

Minimal-risk systems (most consumer AI apps, AI-assisted writing tools, and game AI) face no mandatory requirements, though voluntary compliance is encouraged.

| Risk Tier | Examples | Requirements |
| --- | --- | --- |
| Unacceptable (Prohibited) | Social scoring, real-time biometric surveillance in public, subliminal manipulation | Completely banned |
| High Risk | Hiring algorithms, credit decisions, medical diagnostics, and law enforcement tools | Strict documentation, oversight, conformity assessment |
| Limited Risk | Chatbots, deepfakes, and emotion recognition | Transparency obligations (users must know they’re interacting with AI) |
| Minimal Risk | Spam filters, AI in games | Voluntary compliance; no mandatory requirements |

CMARIX's AI consultants can help you map your systems, run a gap analysis, and figure out exactly where you stand before August 2026.

Who Needs to Comply? Scope for Software Development Companies

AI Providers vs Deployers vs Importers

The Act separates responsibilities based on your role in the AI supply chain:

- Providers develop the AI system and place it on the market. They carry the heaviest compliance burden: technical documentation, conformity assessment, CE marking, and post-market monitoring.

- Deployers use an AI system in their own operations. They’re responsible for implementing it correctly, maintaining logs, and ensuring human oversight where required. If you use a third-party AI tool in your own operations, you’re a deployer; if you build it into a product you sell under your own name, you may take on provider obligations as well.

- Importers and distributors bring non-EU AI systems into the EU market. They must verify that the provider has done their compliance homework before putting anything on shelves.
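
A first-pass role check can be written down as a few yes/no questions. The sketch below is a simplification under stated assumptions: the `SupplyChainFacts` fields and the `eu_ai_act_roles` helper are hypothetical, the Act’s actual definitions (Article 3) carry more nuance, and one company can hold several roles for the same system.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class SupplyChainFacts:
    """Hypothetical yes/no facts about one company's relationship to one AI system."""
    develops_or_brands: bool           # builds the system or markets it under its own name
    places_on_eu_market: bool          # makes it available or puts it into service in the EU
    uses_in_own_operations: bool       # runs it under its own authority, professionally
    first_to_import_from_non_eu: bool  # first to bring a non-EU provider's system into the EU
    resells_unchanged: bool            # passes it down the chain without modification


def eu_ai_act_roles(facts: SupplyChainFacts) -> set[str]:
    """Map the facts to roles: a prompt for legal review, not a ruling."""
    roles: set[str] = set()
    if facts.develops_or_brands and facts.places_on_eu_market:
        roles.add("provider")
    if facts.uses_in_own_operations:
        roles.add("deployer")
    if facts.first_to_import_from_non_eu:
        roles.add("importer")
    elif facts.resells_unchanged and "provider" not in roles:
        # Distributors are supply-chain actors other than the provider or importer.
        roles.add("distributor")
    return roles


# A team selling a recruitment tool to EU employers and also using it in-house:
saas_vendor = SupplyChainFacts(True, True, True, False, False)
print(sorted(eu_ai_act_roles(saas_vendor)))  # ['deployer', 'provider']
```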

Applicability for Non-EU Companies

This is one of the most common questions from software development firms in India, the US, and other markets: “Does this apply to us?”

Short answer: yes, if your product is used by people in the EU. The Act has explicit extraterritorial reach, similar to GDPR. A company in Bengaluru building an AI-powered recruitment tool used by a German employer is subject to the Act’s provider obligations. The location of your office doesn’t matter; what matters is where the output lands.

Common Use Cases in Software Development

| Use Case | What to Assess | Why It Matters |
| --- | --- | --- |
| Node.js microservices architecture powering AI-driven APIs | What decisions those APIs inform: hiring, loans, access to services | If your backend touches high-risk domains, the Act reaches into your stack |
| Generative AI in data science applications | Training data quality and sourcing | Using unverified or biased datasets to train high-risk systems is a compliance risk on its own |
| Generative AI in eCommerce: product recommendations, dynamic pricing, and automated content generation | Whether automated customer profiling has significant commercial consequences | Sits closer to high-risk territory than most teams assume |
| Python in fintech pipelines, where financial data feeds into decision models | What decisions are being made, and how directly the model influences them | Creditworthiness assessment and credit scoring are explicitly listed among the Annex III high-risk categories |

If you don’t have the internal capacity to manage this, you can always hire a dedicated development team that’s already familiar with compliance-first architecture and can hit the ground running.

Struggling with AI inventory gaps, documentation issues, or bias testing?

Core Compliance Requirements Under the EU AI Act

Risk Management Systems

High-risk AI providers must have a documented risk management system that runs throughout the entire lifecycle of the system, not just at launch. This means finding risks before deployment, monitoring them in production, and updating controls when risks change.
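
At its core, a lifecycle risk management system is a register that keeps changing. The sketch below assumes a simple severity-times-likelihood score and a hypothetical escalation threshold of 12. The Act does not prescribe a scoring scheme, so treat both as placeholders for whatever method your team documents.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass
class Risk:
    risk_id: str
    description: str
    severity: int    # assumed scale: 1 (negligible) to 5 (critical)
    likelihood: int  # assumed scale: 1 (rare) to 5 (frequent)
    controls: list[str] = field(default_factory=list)
    last_reviewed: date = field(default_factory=date.today)

    @property
    def score(self) -> int:
        return self.severity * self.likelihood


class RiskRegister:
    """Identify risks before launch, re-score them from production evidence, track controls."""

    def __init__(self, escalation_threshold: int = 12) -> None:
        # Hypothetical threshold: scores at or above it need documented action.
        self.escalation_threshold = escalation_threshold
        self._risks: dict[str, Risk] = {}

    def identify(self, risk: Risk) -> None:
        self._risks[risk.risk_id] = risk

    def reassess(self, risk_id: str, severity: int, likelihood: int, on: date) -> None:
        # Called whenever monitoring data changes the picture.
        risk = self._risks[risk_id]
        risk.severity, risk.likelihood, risk.last_reviewed = severity, likelihood, on

    def add_control(self, risk_id: str, control: str) -> None:
        self._risks[risk_id].controls.append(control)

    def needs_action(self) -> list[Risk]:
        """Risks at or above the threshold, highest score first."""
        flagged = [r for r in self._risks.values() if r.score >= self.escalation_threshold]
        return sorted(flagged, key=lambda r: r.score, reverse=True)
```

The point of `reassess` is the “not just at launch” part: the same risk can move above the threshold months after release.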

Data Governance & Quality Standards

Article 10 of the Act lays out specific data governance rules. Training data must be relevant, representative, free of errors (as far as reasonably possible), and complete for the intended purpose. Any known biases need to be identified and mitigated. This is where synthetic datasets in AI development can play a role, but only when they’re properly validated and documented.
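
One concrete representativeness check compares how often each group appears in the training data with its share of the population the system will serve. This minimal sketch assumes you already have a group label per record and a reference distribution (for example, from census or customer data); the 5-point tolerance is an arbitrary placeholder, not a regulatory number.

```python
from __future__ import annotations

from collections import Counter


def representation_gaps(
    training_groups: list[str],
    reference_shares: dict[str, float],
    tolerance: float = 0.05,
) -> dict[str, float]:
    """Flag groups whose share of the training data differs from a reference share.

    Returns {group: observed_share - reference_share} for every group whose
    absolute gap exceeds `tolerance`. Groups absent from the data count as 0%.
    """
    counts = Counter(training_groups)
    total = len(training_groups)
    gaps: dict[str, float] = {}
    for group, expected in reference_shares.items():
        observed = counts[group] / total if total else 0.0
        gap = observed - expected
        if abs(gap) > tolerance:
            gaps[group] = round(gap, 4)
    return gaps


# Hypothetical example: an age band that is 30% of applicants but 10% of training rows.
rows = ["18-39"] * 60 + ["40-59"] * 30 + ["60+"] * 10
print(representation_gaps(rows, {"18-39": 0.43, "40-59": 0.27, "60+": 0.30}))
```

A flagged gap doesn’t prove bias, but it is exactly the kind of finding Article 10 expects you to identify, document, and mitigate.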

Transparency & Explainability

Algorithmic transparency and explainability aren’t optional for high-risk systems. Users and oversight bodies need to be able to understand, at a meaningful level, how the system reached its outputs. This doesn’t always mean explainable-by-design AI, but it does mean you need documentation that answers “why did the system do that?”
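
For simple models, “why did the system do that?” can be answered directly. The sketch below assumes a linear scoring model with hypothetical feature names and weights: each feature’s contribution is its weight times its value, and those contributions plus the bias add up to the score. Complex models need dedicated attribution techniques such as SHAP, but the output shape (a score plus its top factors) is what reviewers and documentation typically need.

```python
from __future__ import annotations


def explain_linear_score(
    weights: dict[str, float],
    bias: float,
    features: dict[str, float],
    top_k: int = 3,
) -> dict:
    """Break a linear model's score into per-feature contributions.

    contribution = weight * feature value, and score = bias + sum(contributions).
    Missing features are treated as 0.0 (an assumption worth documenting).
    """
    contributions = {name: w * features.get(name, 0.0) for name, w in weights.items()}
    score = bias + sum(contributions.values())
    # Largest absolute contributions first: these are the main "reasons".
    top = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)[:top_k]
    return {"score": score, "top_factors": top}


# Hypothetical credit-style model with made-up weights.
result = explain_linear_score(
    weights={"income_k": 0.02, "debt_ratio": -2.0, "years_employed": 0.1},
    bias=0.5,
    features={"income_k": 45.0, "debt_ratio": 0.6, "years_employed": 3.0},
)
for name, contribution in result["top_factors"]:
    print(f"{name}: {contribution:+.2f}")
```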

Human Oversight Requirements

The Act requires that high-risk AI systems be designed so humans can intervene, override, or shut down the system when needed. This is what the industry calls Human-in-the-Loop (HITL) oversight. Systems should present outputs in a way that lets a human reviewer act before consequences become irreversible. On-device AI processing can also help keep decision loops closer to human review than fully automated cloud pipelines do.
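
The core of HITL routing is a gate that decides, per output, whether a person must look before anything takes effect. This sketch assumes a model that reports a confidence score and a hypothetical `high_stakes` flag for outputs such as adverse decisions about a person; the 0.9 threshold is a placeholder you would calibrate and document.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Decision:
    case_id: str
    outcome: str        # model's proposed outcome, e.g. "approve" or "reject"
    confidence: float   # model's confidence in that outcome, 0.0 to 1.0
    high_stakes: bool   # hypothetical flag, e.g. an adverse decision about a person


def route(decision: Decision, auto_threshold: float = 0.9) -> str:
    """High-stakes or low-confidence outputs wait for a human; the rest may auto-finalize."""
    if decision.high_stakes or decision.confidence < auto_threshold:
        return "human_review"
    return "auto_finalize"


class ReviewQueue:
    """Holds outputs awaiting a reviewer; the reviewer's decision is what takes effect."""

    def __init__(self) -> None:
        self.pending: dict[str, Decision] = {}
        self.final: dict[str, str] = {}

    def handle(self, decision: Decision) -> None:
        if route(decision) == "auto_finalize":
            self.final[decision.case_id] = decision.outcome
        else:
            self.pending[decision.case_id] = decision

    def review(self, case_id: str, reviewer_outcome: str) -> None:
        # The reviewer may confirm the model's proposal or override it.
        self.pending.pop(case_id)
        self.final[case_id] = reviewer_outcome
```

Routing every high-stakes case to review, regardless of confidence, is a deliberate choice here: a confident model can still be confidently wrong about a person.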

Accuracy, Robustness & Cybersecurity

High-risk AI systems must perform effectively and consistently, must handle errors gracefully, and must be protected from adversarial attacks. If your model behaves unpredictably when it hits data it wasn’t trained on, that’s both a product problem and a compliance problem. This is also where AI security and compliance testing become a line item in the development budget, not an afterthought.
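
One cheap robustness guardrail is to notice when an input falls outside what the model saw in training. The min/max check below is a crude stand-in for proper out-of-distribution detection, and the function names are hypothetical; it assumes numeric features stored as dictionaries.

```python
from __future__ import annotations


def fit_input_ranges(training_rows: list[dict[str, float]]) -> dict[str, tuple[float, float]]:
    """Record the min and max of each numeric feature seen in training."""
    ranges: dict[str, tuple[float, float]] = {}
    for row in training_rows:
        for name, value in row.items():
            lo, hi = ranges.get(name, (value, value))
            ranges[name] = (min(lo, value), max(hi, value))
    return ranges


def out_of_range_features(
    row: dict[str, float], ranges: dict[str, tuple[float, float]]
) -> list[str]:
    """Names of features that are missing or fall outside the training range."""
    flagged = []
    for name, (lo, hi) in ranges.items():
        value = row.get(name)
        if value is None or not (lo <= value <= hi):
            flagged.append(name)
    return flagged
```

In production, a non-empty result would typically send the case to human review and to the monitoring log rather than silently returning a score.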

CMARIX Compliance Checklist: Step-by-Step Readiness Framework

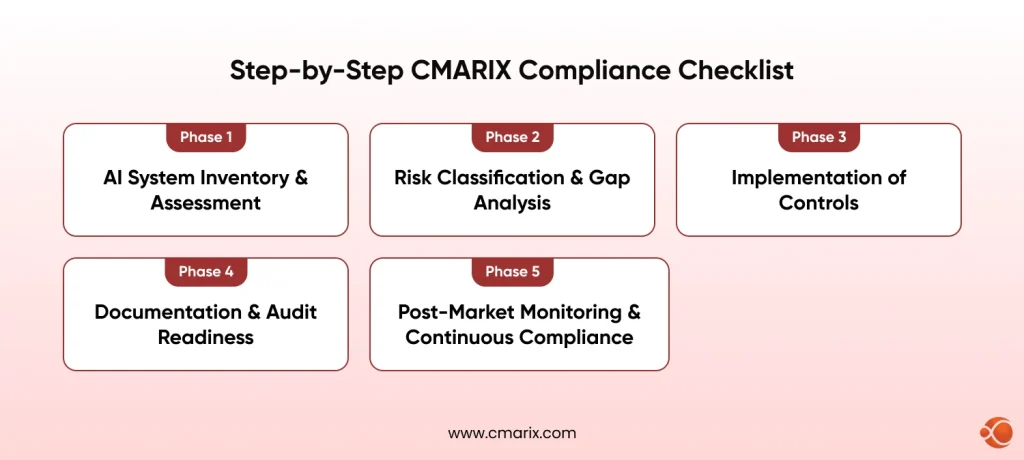

This EU AI Act compliance checklist is structured as a five-phase process, the same approach CMARIX uses when helping software companies prepare for regulatory readiness.

Phase 1: AI System Inventory & Assessment

Before you can comply with anything, you need to know what you’re working with.

- Map every AI use case across your product portfolio: automated decisions, content generation, recommendation engines, classification models, all of it.

- Define ownership: who develops it, who deploys it, who’s responsible for its outputs.

- Document the purpose: what decision or action does this system influence?

- Assess user impact: could the system’s output affect someone’s rights, opportunities, or safety?

Most companies find they have more AI in their stack than they thought. Customer support bots, churn prediction models, and internal scheduling tools all count.
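
An inventory doesn’t need special tooling to start; a structured record per system is enough. The sketch below uses hypothetical field names that mirror the four Phase 1 bullets, plus a flag for third-party tools, and exports the inventory as JSON so it can live in version control.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass


@dataclass
class AISystemRecord:
    """One inventory entry; field names are hypothetical and mirror the Phase 1 bullets."""
    name: str
    use_case: str              # what the system does
    owner: str                 # accountable team or person
    role: str                  # e.g. "provider" or "deployer"
    decision_influenced: str   # what decision or action its output feeds
    affects_people: bool       # could output affect rights, opportunities, or safety?
    third_party: bool = False  # vendor API or embedded tool rather than an in-house model


def export_inventory(records: list[AISystemRecord]) -> str:
    """Serialize the inventory as JSON so it can be versioned and diffed."""
    return json.dumps([asdict(r) for r in records], indent=2)


def priority_review(records: list[AISystemRecord]) -> list[str]:
    """Systems whose output can affect people go to Phase 2 first."""
    return [r.name for r in records if r.affects_people]


inventory = [
    AISystemRecord("support-bot", "customer support chatbot", "CX team", "deployer",
                   "answers order questions", affects_people=False, third_party=True),
    AISystemRecord("cv-ranker", "ranks job applicants", "HR tech team", "provider",
                   "who gets an interview", affects_people=True),
]
print(priority_review(inventory))  # ['cv-ranker']
```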

Phase 2: Risk Classification & Gap Analysis

Once you know what you have, classify each system against the Act’s four-tier model.

- Match every AI use case to a risk tier using the Annex III categories for high-risk systems.

- Identify which systems face the August 2026 deadline and which fall under the later 2027 timeline.

- Run a gap analysis: for each high-risk system, where do you currently fall short on documentation, oversight mechanisms, or data governance?

This phase often reveals that systems were built without the kind of documentation the Act requires. That’s not unusual; most AI development predates this regulation. The gap analysis tells you how much work is ahead.

If you’re building AI models for retail, such as dynamic pricing, personalization engines, and inventory prediction, some of them will sit in the limited-risk zone, but AI-driven customer profiling with significant business consequences probably warrants closer evaluation.
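
A keyword triage can help sort a long inventory into “needs a proper assessment now” and “probably lower risk.” The keyword lists below are hypothetical and far coarser than Annex III itself: a match is a trigger for a documented assessment, never a final classification, and a miss doesn’t prove a system is low-risk.

```python
from __future__ import annotations

# Hypothetical, heavily simplified keywords per Annex III area.
ANNEX_III_KEYWORDS = {
    "biometrics": ["biometric", "face recognition"],
    "critical_infrastructure": ["power grid", "water supply", "road traffic"],
    "education": ["admissions", "exam scoring", "student assessment"],
    "employment": ["cv screening", "hiring", "promotion decision", "performance assessment"],
    "essential_services": ["credit scoring", "creditworthiness", "insurance pricing",
                           "benefits eligibility"],
    "law_enforcement": ["crime prediction", "evidence reliability"],
    "migration_border": ["visa application", "asylum", "border control"],
    "justice_democracy": ["court ruling", "sentencing", "election"],
}
PROHIBITED_KEYWORDS = ["social scoring", "subliminal"]
TRANSPARENCY_KEYWORDS = ["chatbot", "deepfake", "synthetic media"]


def triage(description: str) -> tuple[str, list[str]]:
    """Return (suggested tier, matched Annex III areas) for a plain-text description."""
    text = description.lower()
    if any(k in text for k in PROHIBITED_KEYWORDS):
        return "prohibited", []
    areas = sorted(
        area for area, keywords in ANNEX_III_KEYWORDS.items()
        if any(k in text for k in keywords)
    )
    if areas:
        # High-risk checks win over transparency checks: a hiring chatbot is still high-risk.
        return "high", areas
    if any(k in text for k in TRANSPARENCY_KEYWORDS):
        return "limited", []
    return "minimal", []
```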

Phase 3: Implementation of Controls

This is the hands-on engineering stage.

- Human oversight mechanisms: build override controls, review queues, and confidence thresholds that trigger human review before high-stakes decisions are finalized.

- Logging and traceability: every significant output from a high-risk system should be logged with enough context to reconstruct why the system behaved as it did.

- Bias testing and validation: conduct structured bias audits for protected characteristics prior to deployment, and document the results. If your company doesn’t have this capability in-house yet, hire QA experts for AI compliance testing.

- Incident response workflows: what happens when a system produces a harmful or unexpected output? Define that. Who gets notified? What gets logged? How rapidly does remediation happen?
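
For the logging and traceability control, the key is capturing enough context per output to reconstruct it later: which system, which model version, which inputs, and what a human did. The sketch below is one way to do that as append-only JSON lines. The field names are hypothetical, and hashing the inputs (rather than logging them raw) is an assumed design choice for keeping personal data out of logs; it only works if the original request is stored elsewhere under access control.

```python
from __future__ import annotations

import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def audit_record(
    system_id: str,
    model_version: str,
    inputs: dict,
    output: dict,
    reviewer: Optional[str] = None,
    reviewer_decision: Optional[str] = None,
) -> str:
    """Build one JSON line for an append-only audit log of a high-risk system's output."""
    # Hash a canonical (key-sorted) form of the inputs so identical requests
    # always produce the same hash, regardless of key order.
    canonical = json.dumps(inputs, sort_keys=True).encode("utf-8")
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system_id": system_id,
        "model_version": model_version,
        "input_sha256": hashlib.sha256(canonical).hexdigest(),
        "output": output,
        "human_reviewer": reviewer,
        "human_decision": reviewer_decision,
    }
    return json.dumps(record, sort_keys=True)


print(audit_record("cv-ranker", "2.3.1", {"applicant_id": 1042}, {"score": 0.42}))
```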

Phase 4: Documentation & Audit Readiness

The EU AI Act is explicit about what needs to be written down. AI technical documentation standards under the Act require providers to maintain records covering: the system’s purpose and intended use, the training data sources and preprocessing steps, the model architecture and performance metrics, risk assessments and their outcomes, and human oversight mechanisms in place.

This documentation needs to be current and accessible. If an authority asks for it, you have to produce it quickly.
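
Keeping documentation current is easier to enforce when a build step checks it. The sketch below assumes documentation is stored as a simple key-value structure; the section names paraphrase the list above (Annex IV of the Act is the authoritative and more detailed list), and `model_version` is a hypothetical field used to catch docs that still describe an older model.

```python
from __future__ import annotations

# Paraphrases the documentation items listed above; Annex IV is the authoritative list.
REQUIRED_SECTIONS = [
    "intended_purpose",
    "training_data_sources",
    "preprocessing_steps",
    "model_architecture",
    "performance_metrics",
    "risk_assessment",
    "human_oversight",
]


def documentation_gaps(doc: dict[str, str], deployed_model_version: str) -> list[str]:
    """List missing or empty sections, plus a stale-version warning if needed."""
    gaps = [s for s in REQUIRED_SECTIONS if not doc.get(s, "").strip()]
    if doc.get("model_version") != deployed_model_version:
        gaps.append("model_version: docs describe a different model than the one deployed")
    return gaps
```

Run in CI, a non-empty list can fail the release build, which keeps documentation from drifting behind the model.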

Other documentation requirements:

- Conformity assessment records: evidence that your system meets the Act’s requirements before going to market.

- CE marking documentation: for applicable high-risk systems, this is required before EU market entry.

- Transparency disclosures: user-facing documentation explaining that they’re interacting with an AI system and what it does.

If you haven’t audited your documentation yet, product auditing services can help surface gaps before regulators do.

Phase 5: Post-Market Monitoring & Continuous Compliance

Compliance isn’t a one-time event. Post-market monitoring obligations under the Act require ongoing attention even after you’ve passed the initial conformity assessment.

- Continuous monitoring: track your system’s real-world performance, accuracy, fairness metrics, and error rates against the baselines documented at launch.

- Incident reporting: providers of high-risk systems must report serious incidents to the relevant market surveillance authorities.

- Periodic compliance reviews: as the system changes, re-run the risk assessment and update the documentation accordingly.

- Regulatory updates: the Act will continue to evolve through guidelines, harmonized standards, and delegated acts. Someone in your organization needs to own this process.
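
Continuous monitoring comes down to comparing production numbers with the baselines documented at launch. This sketch assumes higher-is-better metrics (accuracy, recall per group) and hypothetical per-metric tolerances; what counts as an acceptable drop is something your risk assessment should define.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class MetricCheck:
    metric: str
    baseline: float   # value documented at launch
    current: float    # value measured in production
    max_drop: float   # tolerated absolute drop before escalation (assumed)

    @property
    def breached(self) -> bool:
        # Higher-is-better metrics only; invert the comparison for error rates.
        return (self.baseline - self.current) > self.max_drop


def monitoring_report(checks: list[MetricCheck]) -> dict:
    """Summarize which metrics drifted past tolerance and whether to escalate."""
    breaches = [c.metric for c in checks if c.breached]
    return {"status": "escalate" if breaches else "ok", "breached_metrics": breaches}
```

Tracking a per-group metric alongside overall accuracy matters: an aggregate can hold steady while one group’s performance quietly degrades.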

Whether you’re building compliance into a SaaS AI MCP development pipeline or retrofitting governance into an existing product, continuous monitoring is what keeps you on the right side of enforcement long after launch.

Common Compliance Challenges (and How to Solve Them)

Lack of AI Inventory

More than half of organizations don’t have a complete picture of the AI systems running in their products and operations. The fix is a structured audit: not a quick skim of the product roadmap, but a systematic review that includes third-party APIs, vendor tools, and anything embedded in data pipelines.

Poor Documentation Practices

Most development teams document for internal use, enough for the next developer to understand the codebase, not enough for a regulator to assess compliance. The gap is significant. Start retrofitting documentation now, and build compliance documentation into your development workflow going forward.

Bias and Data Quality Issues

Training data problems don’t always surface until you look for them. Build bias testing into your QA process and treat it as a first-class engineering concern, not a post-launch review. Working with information technology consulting services that specialize in responsible AI can accelerate this.
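
A basic bias check compares selection rates across groups. The sketch below computes each group’s rate relative to the best-off group and flags ratios under 0.8. That threshold is the “four-fifths rule” from US employment guidance, used here only as a familiar heuristic; the EU AI Act sets no numeric fairness threshold, and toolkits such as Fairlearn offer richer metrics.

```python
from __future__ import annotations


def selection_rates(outcomes: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of positive outcomes (e.g. 'shortlisted') for each group."""
    totals: dict[str, int] = {}
    positives: dict[str, int] = {}
    for group, selected in outcomes:
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + int(selected)
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact(outcomes: list[tuple[str, bool]], threshold: float = 0.8) -> dict:
    """Compare each group's selection rate with the highest group's rate."""
    rates = selection_rates(outcomes)
    if not rates:
        return {"rates": {}, "ratios": {}, "flagged": []}
    best = max(rates.values())
    ratios = {g: (rate / best if best else 1.0) for g, rate in rates.items()}
    flagged = sorted(g for g, ratio in ratios.items() if ratio < threshold)
    return {"rates": rates, "ratios": ratios, "flagged": flagged}
```

Whatever the results, record them: the documented audit is as important for compliance as the number itself.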

Integration with Existing Systems

Retrofitting oversight mechanisms into existing systems is harder than developing them from the start. If you’re adding human review checkpoints to a fully automated pipeline, expect to rework API response handling, UI flows, and notification systems. Investing in secure AI software development services from the start is almost always cheaper than retrofitting later. Factor this into your timeline.

Best Tools and Frameworks for Faster EU AI Act Compliance

| Framework / Tool | What It Covers | How It Helps |
| --- | --- | --- |
| ISO/IEC 42001 | AI management systems | Aligns closely with EU AI Act requirements: risk management, documentation, and continuous improvement. Already certified? Your compliance gap is likely smaller. |
| ISO 31000 | General risk management | Useful for cross-referencing EU mandates with international risk management best practices across multiple jurisdictions |
| IBM OpenScale, Microsoft Responsible AI Dashboard, Fairlearn, AI Fairness 360 | AI governance platforms and toolkits | Help automate bias detection, model monitoring, and explainability reporting |
| EU AI Office | Supervises general-purpose AI models | Go-to source for the latest guidance, codes of practice, and implementation timeline updates |

The role of AI in digital transformation has reached a point where governance tooling is as important as model performance tooling. Budget for both.

Final Thoughts: Building Future-Ready, Compliant AI Systems

The EU AI Act isn’t going away, and the August 2026 deadline is close. For software development companies, the path forward is actually pretty clear:

- Inventory your AI systems

- Classify them honestly

- Fill the documentation gaps

- Build the oversight mechanisms

- Keep monitoring after go-live

What’s harder is organizational. Someone needs to own this. Compliance can’t live exclusively in legal, in engineering, or in product; it has to span all three. Companies that build an internal AI governance function now will find this whole process much more manageable than those trying to coordinate across siloed teams under deadline pressure.

CMARIX works with software development companies across industries to make this tractable. Whether you need AI compliance consultants to run the initial assessment, an AI PoC service to test a compliant architecture before committing to a full build, or a dedicated development team that builds compliance from day one, the support structure exists.

The question is how seriously you take the August 2026 deadline. Start the inventory now. The rest follows from there.

FAQs on EU AI Act Compliance Checklist

What are the penalties for non-compliance with the EU AI Act in 2026?

Fines vary by violation type. Prohibited AI system violations can reach €35 million or 7% of global annual turnover, whichever is higher. High-risk system violations carry fines of up to €15 million or 3% of turnover, and giving incorrect information to authorities can cost up to €7.5 million or 1% of turnover. Penalties are calibrated for company size: for SMEs and start-ups, the lower of the two amounts applies.

How do I know if my software is a “High-Risk AI System” under the EU AI Act?

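Check whether your system falls into the Annex III categories: biometrics, critical infrastructure, education and vocational training, employment and worker management, access to essential private and public services (including credit scoring and life and health insurance pricing), law enforcement, migration and border control, and the administration of justice and democratic processes. AI that serves as a safety component of a product covered by the EU legislation listed in Annex I, such as a medical device, is also high-risk. If your system makes or meaningfully influences decisions in any of these domains, it’s almost certainly high-risk. When in doubt, get a professional classification assessment.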

Does the EU AI Act apply to software companies based outside of Europe?

Yes. Like GDPR, the Act has extraterritorial reach. If your AI system is used in the EU, the Act applies to you regardless of where your company is incorporated. Non-EU providers placing high-risk systems on the EU market must designate an EU representative.

What is a Quality Management System (QMS) for AI development?

A Quality Management System (QMS) for AI is a documented set of processes and controls that govern how you build, test, validate, and maintain AI systems. Under the EU AI Act, providers of high-risk systems are required to implement a QMS that includes data governance, risk management, testing procedures, post-market monitoring, and documentation practices. ISO/IEC 42001 provides a recognized framework for building one.

Are open-source AI models exempt from the EU AI Act in 2026?

Partially. General-purpose AI (GPAI) models released under a free and open-source license with publicly available weights are exempt from some provider obligations, such as detailed technical documentation, but not all of them: they still need a copyright policy and a public summary of their training content. If an open-source model poses systemic risk (presumed when training compute exceeds 10^25 FLOPs), the exemption falls away and it faces the full transparency and risk mitigation requirements. And if a company fine-tunes or integrates an open-source model into a high-risk application, it becomes the provider of that high-risk system and takes on provider-level responsibilities for it.

What is “Human-in-the-Loop” (HITL) oversight in AI compliance?

HITL means designing an AI system so that humans can review, intervene in, or override its decisions. The EU AI Act requires this for high-risk systems. In practice, it means building review queues, confidence thresholds that trigger human review, override functionality in the UI, and audit trails that record what a human reviewed and what they decided. While it might seem like a compliance exercise, well-implemented HITL can make an AI product more reliable and trustworthy.