Quick Summary: In 2026, AI voice is moving beyond the experimental stage and into enterprise infrastructure. With more firms adopting AI voice assistants to improve customer experience, localization, and automation, it is crucial to know which services are available today. This article compares the best AI voice generators of 2026, including ElevenLabs, WellSaid Labs, Murf AI, Synthflow, and OpenAI Whisper, on criteria such as pricing and use cases.

AI voice generators have been around for only a relatively short time. The difference between then and now is the degree to which such voices can be made believable, scalable, and commercially viable. Previous systems yielded robotic speech that was easily recognizable. Modern systems can reproduce emotional prosody and nuance.

Companies adopting Gen AI could achieve 15.2% cost savings. For decision-makers, the question is no longer whether to adopt voice AI but which platform to use, given its specific capabilities.

Today, we compare the leading AI voice generators of 2026: ElevenLabs, Murf AI, WellSaid Labs, Synthflow, OpenAI TTS, and others. Whether your team is pursuing custom AI software development for voice applications or evaluating vendor platforms, this comparison helps you separate the signal from the noise.

What’s Actually Happening in the AI Voice Market in 2026?

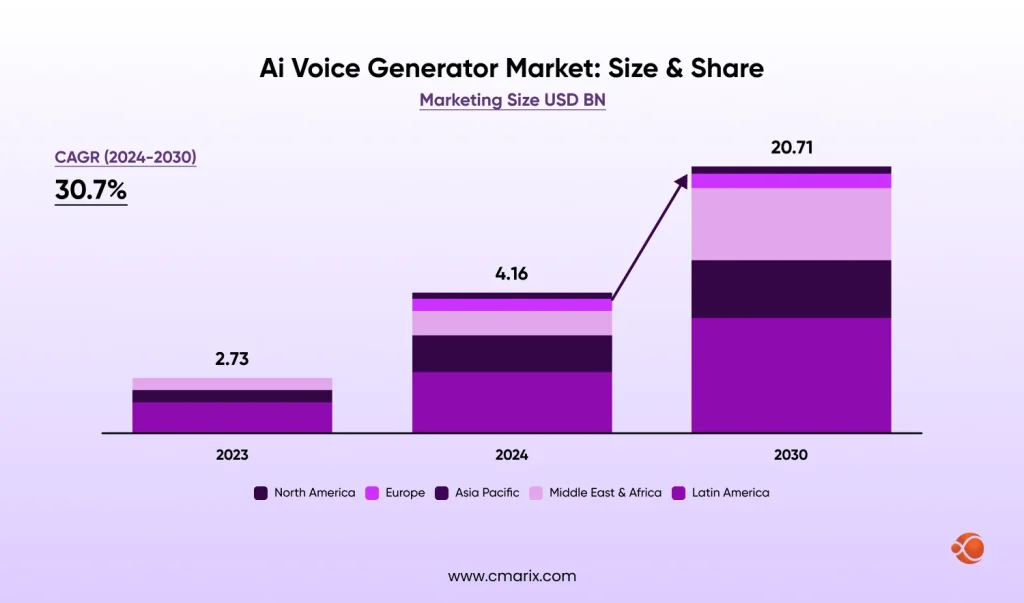

Enterprises are no longer piloting voice AI in sandbox environments. It is being deployed at scale across customer service, compliance training, internal communications, and even multilingual content pipelines. Research by MarketsandMarkets projects that the AI voice generator market, valued at USD 4.16 billion at the end of 2025, will grow to USD 20.71 billion by 2031.
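To put the two market figures in perspective, the implied compound annual growth rate can be worked out with a short calculation. This is a minimal sketch that assumes a six-year span (end of 2025 to 2031); the function name `cagr` is illustrative and the rate itself is not quoted in the research.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size figures quoted above, in USD billions.
START_2025 = 4.16
END_2031 = 20.71
YEARS = 6  # assumption: end of 2025 to 2031

growth = cagr(START_2025, END_2031, YEARS)
print(f"Implied CAGR: {growth:.1%}")
```

A different choice of start or end year changes the result, so treat the number as an order-of-magnitude check rather than a published statistic.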

The adoption signal is just as important as the market size:

- 80% of businesses plan to integrate AI-driven voice technology into customer service by 2026

- 67% of Fortune 500 companies are now running production voice AI systems

- Production voice agent implementations grew 340% year over year across 500+ organizations.

- Gartner anticipates conversational AI will cut down contact center agent labor costs by $80 billion in 2026.

- Recent breakthroughs like Microsoft’s VALL-E X research on zero-shot cross-lingual synthesis show how AI voice systems can generate high-quality speech across languages from minimal input while preserving the speaker’s voice and emotion.

The business argument is equally compelling. An AI-handled voice call costs about $0.40, whereas a call handled by a human agent costs between $7 and $12. At those figures, AI reduces the cost per call by roughly 94% to 97%. According to a Forrester Consulting study, organizations leveraging AI for voice see a return on investment of 331%-391% within 6 months of deployment.

Voice AI is no longer a future investment; the longer you delay deploying it in your business, the further you fall behind competitors who are already seeing these returns.
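The per-call comparison is simple arithmetic, sketched below using only the figures quoted above. The function name `per_call_savings` is illustrative; both ends of the human-agent range land in the mid-90s percent, and the result moves with whatever a given deployment really pays per call.

```python
def per_call_savings(ai_cost: float, human_cost: float) -> float:
    """Fractional cost reduction when an AI-handled call replaces a human-handled call."""
    return 1 - ai_cost / human_cost


AI_COST = 0.40              # USD per AI-handled call (figure quoted above)
HUMAN_COSTS = (7.0, 12.0)   # USD per human-handled call (range quoted above)

for human_cost in HUMAN_COSTS:
    saving = per_call_savings(AI_COST, human_cost)
    print(f"human ${human_cost:.2f} vs AI ${AI_COST:.2f}: {saving:.1%} lower")
```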

What Does “Enterprise-Ready” Actually Mean for a Voice AI Platform?

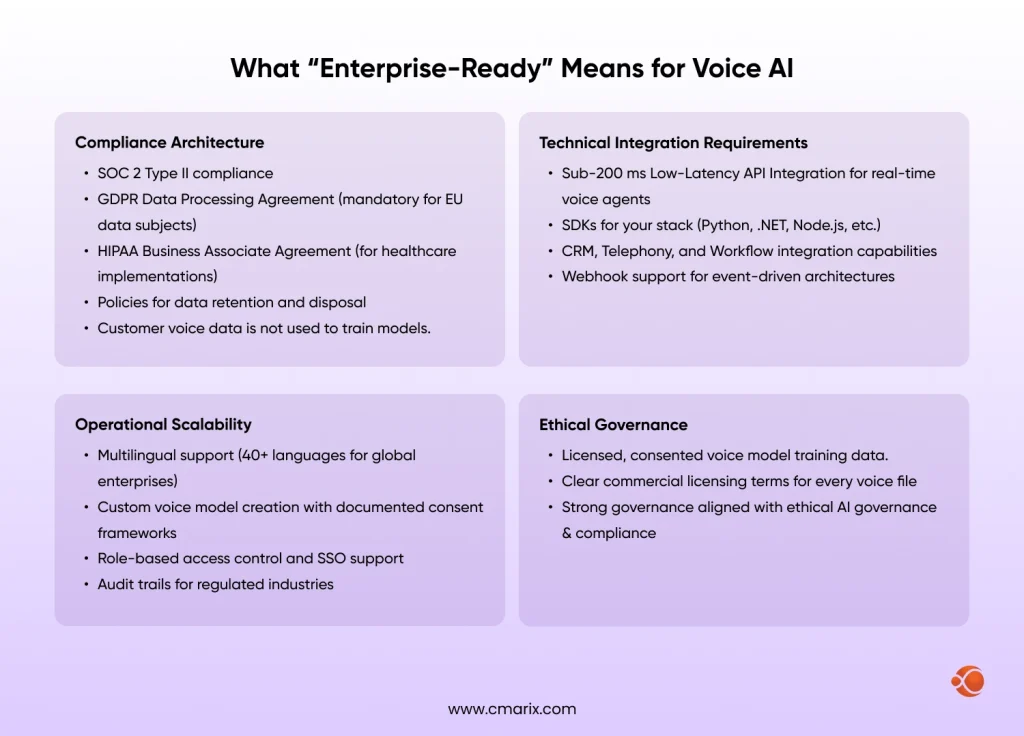

Almost any AI voice platform can claim to be “enterprise-ready,” so it helps to know what to look for before comparing the best voice AI platforms on the market. Here are the basic requirements that any enterprise AI voice platform should be able to deliver on:

Understanding the differences between LLM and NLP also helps clarify which technical requirements actually matter for your use case, especially when vendors use these terms interchangeably in their marketing.

Platform-by-Platform Breakdown: Which AI Voice Generator Is Right for You?

If you’re comparing multiple platforms quickly, this table gives you a side-by-side snapshot before diving deeper into the list of top AI Voice Generators in 2026 for enterprise use cases.

| Feature | ElevenLabs | WellSaid Labs | Murf AI | Synthflow | OpenAI Whisper |
| --- | --- | --- | --- | --- | --- |
| Primary Use Case | Content creation, dubbing, developer APIs | Regulated enterprise narration | Business voiceover, L&D | Real-time voice agents | Speech recognition pipeline |
| Voice Quality | Very High | High | High | High | Not applicable (ASR, not TTS) |
| Languages Supported | 30+ | 10+ | 20+ | 20+ | 57 |
| Real-Time API | Yes | Yes | Limited | Native | Yes |
| Voice Cloning | Yes (instant + professional) | Enterprise only | With consent | Yes | No |
| Emotion Control | Advanced (tag-based) | Word-level cues | Recording-matched | Adaptive | No |
| SOC 2 Certified | No (review DPA carefully) | Type I & II | Type II | Yes | Yes (via OpenAI) |
| GDPR Compliant | Partial (review DPA) | Yes | Yes | Yes | Yes |
| HIPAA Ready | No | Yes | No | No | Via Azure/GCP |
| Free Tier | Yes (no commercial rights) | No | Yes (no downloads) | Limited | Yes (self-hosted) |
| Starting Price | $5/month | $50/user/month | $19/month | Custom | Usage-based |
| Best For | Developers, creatives, media | BFSI, healthcare, legal | Marketing, L&D, HR | Contact centers, sales | Developer pipelines |

ElevenLabs: Best for Voice Quality and Creative Flexibility

If you have been actively researching AI voice generators, you have almost certainly come across ElevenLabs. The firm promotes itself as the top choice for integrating voice AI into services, and it is regarded as the most technologically advanced voice generation platform in 2026.

Its output most closely approximates human speech in emotional depth, pacing, and naturalness. When voice quality is the main consideration and the use case is not governed by industry regulations, ElevenLabs is the obvious selection.

What ElevenLabs does well:

- Professional Voice Cloning from 30+ minutes of audio (hyper-realistic results)

- Instant Voice Cloning from 1–5 minute audio samples

- AI dubbing across 30+ languages, preserving intonation and delivery style.

- Speech-to-Speech (S2S) synthesis for real-time applications

- Conversational AI agent API for live deployments

Pricing

| Plan | Monthly Cost | Best For |
| --- | --- | --- |
| Free | $0 | Testing only; no commercial license |
| Starter | $5/month | Solo creators, light commercial use |
| Creator | $22/month | Content teams, voiceover workflows |
| Pro | $99/month | High-volume creators |
| Scale | $330/month | Teams, contact center trials |
| Business | $1,320/month | Multi-team enterprise deployments |
| Enterprise | Custom | Volume commitments, SLA-backed deployments |

What to watch out for:

The ElevenLabs Text-to-Speech API runs on a credit-based system. Premium voice models consume twice as many credits as standard voice models, which can be hard to quantify from the price sheet. For continuous, high-volume use, model your total cost of ownership before committing.
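The effect of the premium multiplier on a monthly budget can be sketched with a small estimator. The 2x multiplier comes from the paragraph above; the baseline of one credit per character and the workload size are hypothetical placeholders, so substitute the values from your actual plan.

```python
PREMIUM_MULTIPLIER = 2.0  # premium voices use twice the credits (per the text above)


def credits_needed(characters: int, premium: bool, credits_per_char: float = 1.0) -> float:
    """Estimate credits for a workload.

    credits_per_char is a hypothetical baseline, not a published rate.
    """
    multiplier = PREMIUM_MULTIPLIER if premium else 1.0
    return characters * credits_per_char * multiplier


monthly_characters = 500_000  # hypothetical monthly workload
standard = credits_needed(monthly_characters, premium=False)
premium = credits_needed(monthly_characters, premium=True)
print(f"standard voices: {standard:,.0f} credits")
print(f"premium voices:  {premium:,.0f} credits")
```

Running the same workload through both branches makes the doubling visible before it shows up on an invoice.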

Compliance Posture:

ElevenLabs is flexible with its data licensing. Enterprise users in HIPAA-, FINRA-, and other heavily regulated settings should review its voice data retention and model usage clauses in detail; it is generally not the advisable choice for those use cases.

WellSaid Labs: Best for Compliance-First Enterprise Environments

WellSaid Labs solves the exact problem ElevenLabs can’t: usage that legal and IT teams can defend. It is probably not the most feature-packed AI voice platform, but as a TTS platform it primarily targets professional content creators and corporate training teams who need realistic AI voiceovers.

What WellSaid Labs does well:

- Fine-grained, word-level pronunciation control through the Cues Panel, which matters for regulatory compliance

- Proprietary and licensed voice models (never trained on customers’ data)

- U.S.-based infrastructure through Google Cloud Platform

- Compliance with SOC 2 Type 1 & 2, GDPR, HIPAA, and FINRA regulations

- Enterprise single sign-on support and role-based access management

- Commercial use of each and every voice file

The main customers of WellSaid Labs are organizations that require governance, SOC 2 compliance, and accurate pronunciation. Pricing starts at $50 per user per month. There is no free tier, only a 7-day trial without downloads, so evaluating its functionality can be challenging.

Pricing:

| Plan | Monthly Cost |
| --- | --- |
| Free | None (7-day trial, no downloads) |
| Creative | $50/user/month |
| Enterprise | Custom |

Compliance Posture:

Among the best available in the marketplace. WellSaid is SOC 2- and GDPR-compliant out of the box. It uses closed-model AI to protect your content, applies two-level content moderation, and grants full commercial rights to every voice file.

Murf AI: Best for Non-Technical Business Teams

Murf AI is the ideal voice generation solution for non-technical business professionals who want quick results. With its drag-and-drop editor, they can create high-quality voice recordings on their own without writing code or using an API.

What Murf AI does well:

- Provides access to 120+ AI voices across 20+ languages

- Direct Canva and PowerPoint integrations (huge for marketing and L&D teams)

- Automatic dubbing with lip sync capabilities

- Emotion and expression control by matching the intonation of a reference recording

- SOC 2 Type II, ISO 27001, and GDPR compliant

- Voices are ethically sourced and have royalty deals.

Pricing:

| Plan | Monthly Cost |
| --- | --- |
| Free | $0 (10 minutes, no downloads) |
| Creator | $19/month |
| Business | Custom |
| Enterprise | Custom |

Compliance Posture:

Solid performance for its market category. The voice technology provided by Murf AI is SOC 2 Type II, ISO 27001, and GDPR-certified, and all voice samples used have been legally obtained in accordance with an ethical framework to safeguard against copyright infringement. A strict business data segregation policy is in place for all enterprises.

Synthflow Voice AI Agents: Best for Real-Time Conversational Deployments

Synthflow appears in this comparison alongside the other tools, but it was built for a different purpose. It stands out because it was designed for live, real-time conversations rather than for generating voice content.

What Synthflow Voice Agents does well:

- Sub-second latency for live agent interactions

- Compatibility with existing customer relationship management software such as Salesforce, HubSpot, Zoho, and GoHighLevel

- Detection of emotional tone and dynamic responses

- Multi-agent orchestration capabilities for large outbound campaign execution

- Webhook and API capabilities for custom process integration

Best for: Enterprise sales automation, patient appointment scheduling, customer re-engagement campaigns, and contact center overflow. If your use case is a real-time conversation rather than pre-produced content, Synthflow deserves serious consideration.

For enterprises building these workflows on top of existing infrastructure, the seamless enterprise system integration layer is often where implementation succeeds or fails. The platform choice is secondary to the integration architecture.

OpenAI Whisper API: Best for Speech Recognition Within Custom Pipelines

The OpenAI Whisper API is not a voice-generation tool but a speech recognition API for companies developing two-way voice tools. Within a voice pipeline, Whisper handles speech-to-text and delivers outstanding accuracy across 57 languages. It performs well on noisy audio and accented speech. It is often paired with a TTS engine (ElevenLabs, OpenAI TTS, Azure Speech).

Where OpenAI Whisper adds the most value:

- Sentiment analysis on customer calls

- Voice-enabled enterprise applications with custom business logic

- Multilingual transcription for compliance documentation

- Input layer for custom AI software development for voice applications

Whisper can be combined with other platforms into a full voice pipeline, but building that pipeline requires familiarity with integrating its Python API. If your company is assembling a full-stack voice solution, Whisper is a strong choice for the speech recognition layer.
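The shape of such a pipeline can be sketched as three pluggable stages: speech recognition, response logic, and speech synthesis. This is a minimal sketch, not a vendor integration: `VoicePipeline` and the three `fake_*` functions are hypothetical stand-ins that run without network access, and in a real build the `transcribe` stage would wrap a Whisper call while `synthesize` would wrap a TTS engine.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VoicePipeline:
    """Three-stage voice loop: audio in, text in, text out, audio out."""
    transcribe: Callable[[bytes], str]   # ASR stage (where Whisper would sit)
    respond: Callable[[str], str]        # business logic or LLM stage
    synthesize: Callable[[str], bytes]   # TTS stage

    def handle(self, audio_in: bytes) -> bytes:
        text_in = self.transcribe(audio_in)
        text_out = self.respond(text_in)
        return self.synthesize(text_out)


# Hypothetical stand-ins so the sketch runs offline; they treat bytes as UTF-8 text.
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_respond(text: str) -> str:
    return f"You said: {text}"


def fake_synthesize(text: str) -> bytes:
    return text.encode("utf-8")


pipeline = VoicePipeline(fake_transcribe, fake_respond, fake_synthesize)
reply = pipeline.handle(b"where is my order")
print(reply.decode("utf-8"))
```

Keeping each stage behind a plain callable means the ASR or TTS vendor can be swapped without touching the business logic in the middle.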

Platform Capabilities: What Actually Differentiates Them

Across platforms, differentiation increasingly comes down to how well they implement:

| Feature | Description |
| --- | --- |
| Emotional Prosody & Nuance | Delivers natural, expressive, human-like speech with tone and emotion control |
| Zero-Shot Voice Cloning | Replicates voices quickly using minimal audio samples |
| Multimodal Voice Synthesis | Combines speech with contextual, visual, or behavioral signals |
| Low-Latency API Integration | Enables real-time conversational responses with minimal delay |
| Edge-Based Voice Processing | Processes voice locally for faster inference and improved |

What’s the Real Cost Difference Between API-Based and Seat-Based Pricing?

This question matters more than most enterprises realize when selecting vendors.

API-Based Pricing (ElevenLabs, OpenAI, Fish Audio)

- Your usage is billed based on characters, minutes, or API calls.

- Billing becomes unpredictable at higher usage levels.

- Well-suited to content creation and development teams with moderate usage volume.

- Exposed to premium-model pricing and concurrent-agent restrictions.

Seat-Based Pricing (WellSaid Labs, Murf AI)

- You pay monthly by the named user.

- Costs are predictable, but the model is inefficient for automated processes where no human initiates generation.

- Ideal for named users producing content manually.

Enterprise Custom Contracts (all platforms)

- Volume commitments unlock 20–30% discounts.

- Provide the contractual structure procurement teams require

- Always the correct model for large-scale or automated deployments

The right model for your organization depends on one question: Is a human initiating each voice generation, or is your system generating voice output automatically in response to events? If it’s the latter, seat-based pricing is the wrong model regardless of what the platform’s marketing says.
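That one question can be turned into a rough selection rule, sketched below. The function `recommend_pricing_model`, the per-minute rate, and the seat price are hypothetical illustration values, not vendor quotes; the only rule taken from the text is that automated, event-driven generation rules out seat-based pricing.

```python
def recommend_pricing_model(
    human_initiated: bool,
    monthly_minutes: float,
    named_users: int,
    api_rate_per_minute: float,
    seat_price: float,
) -> str:
    """Pick a pricing model from who triggers generation and what each option would cost."""
    if not human_initiated:
        # Event-driven output: seat-based licensing does not fit, whatever the price.
        return "api-or-enterprise-contract"
    api_total = monthly_minutes * api_rate_per_minute
    seat_total = named_users * seat_price
    return "seat-based" if seat_total <= api_total else "api-based"


# Hypothetical inputs: 3 named users, $0.10 per generated minute, $50 per seat.
print(recommend_pricing_model(True, 5000, 3, 0.10, 50.0))    # heavy manual use
print(recommend_pricing_model(True, 200, 3, 0.10, 50.0))     # light manual use
print(recommend_pricing_model(False, 50000, 0, 0.10, 50.0))  # automated system
```

The comparison ignores volume discounts and premium-model surcharges, which is exactly where an enterprise contract changes the math.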

Teams building end-to-end enterprise app development workflows with embedded voice generation should always engage app developers at the enterprise contract level from the start, rather than migrating up from self-serve tiers once they are already in production.

Expert Tip: Planning to add AI to your app, but don’t know how to? Follow our step-by-step AI app integration guide for best practices and detailed insights.

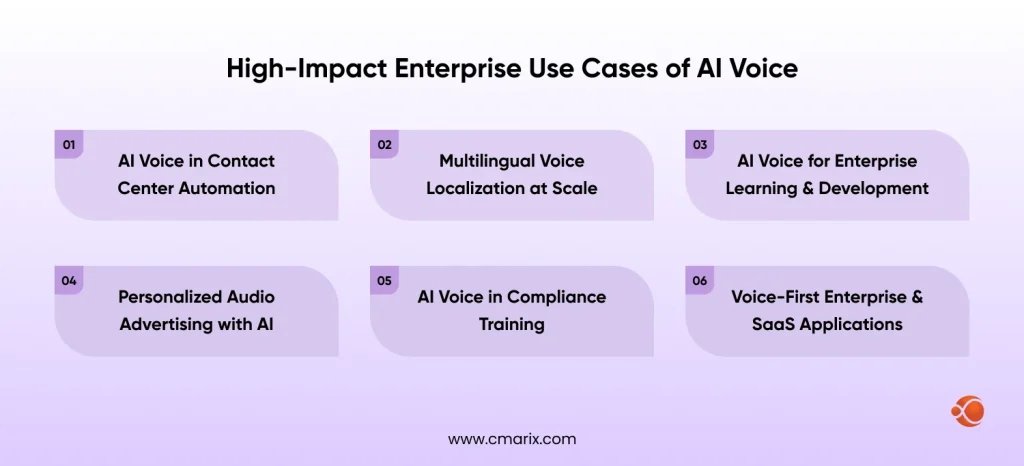

Where Do Enterprises Actually Deploy AI Voice? 6 High-Value Use Cases

1. Contact Center Modernization

Legacy IVR systems depend on a static audio tree model. They frustrate customers, hurt first-contact resolution, and cannot keep up with product or policy changes.

AI voice systems replace static IVR. AI voice platforms report first-contact resolution rates of 55% to 70%, sometimes reaching 80%, which shows that most phone calls can be handled without human input and at a lower cost.

2. Multilingual Content Localization

Voice AI can be used by global enterprises that need to produce training, compliance, or customer-facing content across different markets speaking different languages. The manual approach to this challenge is to have human narrators for every language, scheduled in studios, with every content update needing a full re-recording.

With AI voice, that limitation disappears. By supporting 30 to 57 languages, the top voice providers let businesses create localized content as quickly as they create base-language content.

3. Enterprise Learning and Development

AI voice bots are currently among the most innovative ways to create learning content in L&D. For enablement content, Microsoft has leveraged WellSaid’s strengths to make the task easier and risk-free.

4. AI-Powered Advertising and Personalized Audio

Using AI voice tech, marketers can create unique audio ads tailored to each target demographic at a scale that is unattainable with human voice recordings.

This is the most advanced area of AI-based ad creation, enabling companies to run A/B tests on voice tone, pace, and persona.

5. Compliance Training in Regulated Industries

The banking, financial services, and insurance (BFSI), healthcare, and legal industries use human-sounding AI-generated speech to deliver content consistently, particularly highly compliance-sensitive content. AI-generated voices produce the same result with every delivery, while human narration can differ each time.

6. Voice-First SaaS and Enterprise Applications

APIs for voice artificial intelligence are emerging as an indispensable component in building voice-first SaaS platforms. Their adoption is rising sharply as enterprise software starts integrating live voice agents, voice-driven navigation, and accessibility capabilities into their offerings.

Are AI-Generated Voices Legally Compliant for Commercial Use?

Yes, but only under specific legal and regulatory conditions. AI-based voices can be used legally in business environments, provided companies adhere to the consent and data protection rules of the jurisdictions in which they operate.

Voice data is treated as biometric data, and the laws regulating it are stricter in 2026 than they were in earlier years. Make sure you understand these laws before making any decisions.

| Region | Key Regulations | What It Means for AI Voice |
| --- | --- | --- |
| United States | Federal Communications Commission (FCC), Telephone Consumer Protection Act (TCPA) | Prior written consent required for AI-generated voice in automated calls |
| United States | Health Insurance Portability and Accountability Act (HIPAA) | Applies to voice data with PHI; penalties from $100 per incident up to $1.5M annually |
| United States | Federal Trade Commission (FTC) | Evolving rules for disclosure in AI-generated ads and communications |
| European Union | General Data Protection Regulation (GDPR) | Voice treated as biometric data; requires explicit consent and strict controls |
| European Union | GDPR DPAs | Mandatory Data Processing Agreements with all vendors handling EU data |
| European Union | GDPR Penalties | Fines up to €20M or 4% of global annual revenue |
| Global Considerations | Data Residency | Define where voice data is stored and processed |
| Global Considerations | Subprocessors | Full transparency across cloud, model, and integration providers |
| Global Considerations | Cross-Border Transfers | Compliance with international data transfer laws |
| Global Considerations | Industry Standards | FINRA (finance), ISO 27001 (security), other sector-specific frameworks |

What to verify with any vendor:

- SOC 2 Type II certification (auditable security practices)

- Signed GDPR Data Processing Agreement before any EU data is processed

- HIPAA Business Associate Agreement for healthcare PHI

- Data retention and deletion SLAs in writing

- Explicit subprocessor list (cloud providers, model providers, integration partners in the vendor’s stack)

- Commercial licensing documentation for every voice model you use
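During vendor evaluation, the checklist above can be tracked as structured data so gaps surface early. This is a minimal sketch: the `VendorEvidence` fields mirror the six items above, while the class name, the `missing_items` helper, and the two scoping flags are hypothetical conveniences rather than part of any compliance standard.

```python
from dataclasses import dataclass, fields


@dataclass
class VendorEvidence:
    """One flag per checklist item; True means the document is in hand."""
    soc2_type2_report: bool
    gdpr_dpa_signed: bool
    hipaa_baa_signed: bool
    retention_sla_in_writing: bool
    subprocessor_list_provided: bool
    voice_licensing_documented: bool


def missing_items(
    evidence: VendorEvidence,
    handles_phi: bool = True,
    handles_eu_data: bool = True,
) -> list[str]:
    """Return the checklist items still outstanding for this deployment's scope."""
    skipped = set()
    if not handles_phi:
        skipped.add("hipaa_baa_signed")   # BAA only matters for healthcare PHI
    if not handles_eu_data:
        skipped.add("gdpr_dpa_signed")    # DPA only matters when EU data is processed
    return [
        f.name
        for f in fields(evidence)
        if f.name not in skipped and not getattr(evidence, f.name)
    ]


vendor = VendorEvidence(True, True, False, True, True, True)
print(missing_items(vendor))                     # healthcare deployment
print(missing_items(vendor, handles_phi=False))  # no PHI involved
```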

Voice AI service providers without certifications such as SOC 2 and ISO cannot guarantee they will keep your information secure or handle a compliance audit effectively. Compliance checks should not be done only as a last step; they should be part of the evaluation process itself.

For enterprise software development projects at CMARIX that use voice AI, the compliance architecture should be verified during the integration design stage.

Trust CMARIX to handle the tech and the legal aspects.

How Does Emotion Detection Work in Modern AI Voice Systems?

This question is frequently asked by companies evaluating voice-based chatbot technology. Here’s the truth about how it works.

Modern AI voice systems detect emotion through three layered mechanisms:

1. Acoustic Feature Analysis

This layer analyzes the speaker’s voice, including pitch variation, speaking rate, intensity, and spectrum. A high-pitched, intense tone indicates either excitement or stress. A low-pitched, slow delivery suggests boredom or tiredness.

2. LLM Contextual Inference

The content of the caller’s speech carries signals that cannot be inferred from the voice alone. A caller may sound calm while discussing a billing issue yet express irritation through the language they use.

3. Multimodal Signal Fusion

Fusing linguistic and acoustic signals lets the system recognize emotion correctly across languages and in cases such as sarcasm, although noise can still affect the result.
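The fusion step can be illustrated with a deliberately simple weighted average of the two signals. This is a sketch under stated assumptions: production systems typically learn the combination from data, and the function names, the 0-to-1 score scale, the equal weighting, and the 0.5 threshold are all hypothetical.

```python
def fuse_frustration(acoustic_score: float, text_score: float, acoustic_weight: float = 0.5) -> float:
    """Combine acoustic and linguistic frustration scores (each 0 to 1) into one score."""
    if not 0.0 <= acoustic_weight <= 1.0:
        raise ValueError("acoustic_weight must be between 0 and 1")
    return acoustic_weight * acoustic_score + (1 - acoustic_weight) * text_score


def should_escalate(acoustic_score: float, text_score: float, threshold: float = 0.5) -> bool:
    """Route to a human agent when the fused score reaches the threshold."""
    return fuse_frustration(acoustic_score, text_score) >= threshold


# A caller who sounds calm (low acoustic score) but uses irritated wording (high text score).
print(should_escalate(acoustic_score=0.2, text_score=0.9))
# A caller who is calm in both voice and wording.
print(should_escalate(acoustic_score=0.2, text_score=0.2))
```

The first case is the billing example from the list: the acoustic signal alone would miss the frustration, while the fused score catches it.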

In deployment, integrating emotional intelligence enables voice systems to detect real-time frustration, urgency, and satisfaction, reducing agent escalations by 25%. Systems that accurately detect caller frustration and escalate before the interaction deteriorates are delivering measurable improvements in CSAT scores.

Building this type of system in-house requires significant ML expertise. Teams leveraging enterprise .NET development for voice bots or Python-based voice architectures through CMARIX can significantly reduce implementation risk and accelerate time-to-deployment.

Build vs. Buy: When Does Custom Voice AI Development Make Sense?

For some organizations, plugging into a best-in-class solution through an API is the most efficient and easiest route. In other cases, the results you need from voice synthesis, speech recognition, and intent recognition cannot be achieved with an off-the-shelf API and call for a custom-built model.

Enterprise voice systems rely heavily on high-quality training data. Open initiatives, such as datasets from the Mozilla Data Collective, provide multilingual speech data that powers modern voice models, especially for low-resource languages and custom AI pipelines.

When to Consider Custom Development

- Your brand requires a proprietary voice identity: A specialized voice model created exclusively for your brand, which shares no underlying structure with other customers and cannot easily be mimicked by anyone else.

- Your domain has specialized vocabulary: Pharmaceutical, legal, and manufacturing teams all use specific language that mainstream models cannot pronounce accurately. Training a model on domain-specific data addresses this problem at the source.

- Regulations enforce on-premises processing: Some sectors have industry regulations that stipulate on-site voice data processing. In those cases, running voice inference on-premises is the only way to comply.

- Your workflow requires deep system integration: Whenever voice AI needs to interact with proprietary systems or access live data streams, an API-only solution becomes cumbersome.

CMARIX is well-versed in designing intuitive, effective voice user interfaces and voice-powered enterprise generative AI solutions that leverage the best third-party APIs with a touch of customization, giving you the best of both worlds.

Decision Framework: Choosing the Right AI Voice Platform

If you’ve read this far, you’re serious about making the right call. Here’s the simplest decision framework:

| Your Primary Need | Best Starting Point |
| --- | --- |
| Best voice quality, maximum creative control | ElevenLabs |
| Compliance-first, regulated enterprise environment | WellSaid Labs |
| Fastest time-to-value for non-technical teams | Murf AI |
| Real-time conversational voice agent deployment | Synthflow |
| Custom speech recognition pipeline | OpenAI Whisper |
| Custom voice model with proprietary brand identity | Custom development |

The common thread between the best implementations of 2026 is not the platform chosen; it is the quality of the integration architecture, the structure of the compliance process, and the elegance of the user experience design around the voice feature.

The platform is the ingredient. How you integrate it is the recipe.

How CMARIX Approaches Enterprise Voice AI

At CMARIX, we treat voice AI as a systems design challenge, not a software procurement exercise.

The platforms in this guide offer great capabilities. However, their effectiveness can be realized only when these platforms are integrated into the enterprise architecture through appropriate data flows, fallback mechanisms, compliance mechanisms, and monitoring. Well-selected but poorly integrated platforms perform poorly, while decently selected but well-integrated platforms will beat their competition all day long.

Our approach to voice platforms brings together expert AI developers experienced in enterprise integration, UI/UX design for voice-first experiences, and compliance architecture built to GDPR, HIPAA, and SOC 2 standards.

Whether you’re:

- Evaluating platforms and need a vendor-neutral architecture review

- Building scalable custom software frameworks for voice AI integration

- Piloting a voice agent for a specific use case

- Scaling a proven deployment to new markets or business units

- Building a proprietary voice capability from the ground up

Reach out to our AI development team, and we’ll help you move faster and avoid the expensive mistakes that come with getting the integration architecture wrong the first time.

The Bottom Line: Which AI Voice Generator Is Right for Your Enterprise?

There is no single correct answer, which is why the enterprise evaluation process matters more than any feature comparison table.

ElevenLabs stands out for its voice quality and creative potential, but organizations operating in regulated industries need to carefully review its data management practices. WellSaid Labs is the pick for compliance-oriented settings, and its pricing is reasonable. Murf AI works well for teams of non-technical people who need fast time-to-value. Synthflow is the top choice for real-time conversational agents. For speech recognition, OpenAI Whisper is the dependable option.

However, the common denominator behind successful implementations in 2026 is the same as in the past: integration architecture, compliance frameworks, and UX design. Voice AI systems are now infrastructure, not just a nice-to-have technology. Companies that approach it this way are best positioned to capture the full return on investment, with data estimating returns of 331% to 391%.

FAQs for AI Voice Generators

How do enterprises ensure data privacy and security when using AI voice cloning?

Businesses need to treat voice data like other biometric data, beginning by verifying that vendors have a SOC 2 Type II certification and a GDPR Data Processing Agreement in place before any customer data is processed. The agreement should prohibit the use of voice data for model retraining and set out in writing the time frames for retaining and deleting data. In regulated fields such as healthcare, a HIPAA Business Associate Agreement is essential.

What is the typical ROI for switching to AI voice for customer experience (CX)?

The main driver of ROI for AI voice in customer experience is the gap in cost per call between AI-handled and human-handled calls, a difference large enough that almost all businesses can see a return on investment within a few months. In addition, first-contact resolution improves when the AI voice system is properly integrated with CRM and telephony. The key factor in achieving strong returns is treating integration architecture as a central pillar of the strategy.

Can 2026 AI voice generators handle real-time code-switching and accents?

Accent handling has matured across most major platforms thanks to recent improvements in ASR, which work effectively even with challenging factors such as noise and different dialects. Code-switching, where a speaker changes languages within a single statement, remains considerably harder, and performance varies significantly depending on the languages involved and the underlying model.

Pay attention to how these voicebots handle language complexity in real-world scenarios. This is an important factor to consider before selecting a production platform.

Are AI-generated voices legally compliant for commercial use in ads?

Using an AI voice in commercials can be legal, but it depends on conditions such as where the ad runs and how the underlying model was trained. In the US, the Federal Trade Commission has evolving disclosure rules for AI-generated content used in advertisements. In the European Union, voice data is considered biometric data under the GDPR, which requires consent. Before launching any marketing campaign, make sure the voice models you use come with the required commercial license documentation.

How does emotion detection work in modern AI voice bots?

Modern AI voice systems detect emotion from both acoustic features (pitch, speech rate, and volume) and the ability of large language models to infer the meaning behind a user’s utterance. When these two signals are fused, the system can classify emotion across languages, accents, and noisy conditions.

The ultimate outcome of this process is an AI voice system that can identify caller frustration before it escalates, enabling timely routing decisions that significantly reduce the number of agent-handled escalations and improve customer satisfaction scores. To build emotionally aware voice bots for customer support, thoughtful system design must occur at the integration layer and during platform selection.

What is the cost difference between API-based and seat-based pricing for AI voice platforms?

At low to moderate volumes, API pricing works well, but it becomes hard to forecast at scale because premium voice models consume more credits than standard voice models. Seat-based pricing provides predictability for teams whose named users generate voice content manually, but it becomes structurally inefficient for automated, event-driven output.

Enterprise clients typically sign custom contracts with volume commitments to secure significant discounts and to give procurement teams the compliance and finance structure they need.