Quick Summary: Infrastructure matters more to AI product development in 2026 than the code itself. Costs grow with inference, data pipelines, and MLOps, not just development time. An MVP can cost anywhere from $80K to $180K, but a production system can quickly reach $200K to $450K+. In this post, we’ll explore where this money goes so you can plan for it realistically, not optimistically.

Most AI product development cost estimates stop at developer salaries. And to be honest, those numbers look manageable until the project starts and your first AWS bill arrives.

Building a production-grade AI product in 2026 is not a single line-item expense. It is an overlapping stack of infrastructure decisions, architectural trade-offs, data pipeline investments, and ongoing operational overheads that compound over time. And unlike traditional software, every component of an AI system, from the model to inference compute and annotation pipelines, carries its own hidden cost multiplier.

McKinsey’s Global AI Report projects that global spending on AI will reach USD 2.52 trillion by the end of 2026, with infrastructure alone accounting for more than USD 401 billion of that total. That scale puts in perspective the cumulative pressure companies already feel in managing cloud bills, talent acquisition, and compliance readiness.

The gap between “we built an AI demo” and “we run a production AI product” is where most cost overruns live. This guide decodes every layer of that gap, from architecture and infrastructure to MLOps, compliance, and building a dedicated AI development team, so you can budget for your AI investments realistically rather than optimistically.

Let’s get started.

Why Does AI Product Development Cost More Than Traditional Software in 2026?

Before you go grab your calculator or fill up your Excel sheet, let’s talk about the foundational concepts first. It is important to understand why the cost structure of AI products is fundamentally different from conventional app development.

Traditional software is deterministic, and the process usually looks like this:

You write logic -> You test it -> It works, or it doesn’t work.

AI-driven software is built on probabilistic systems. The model can return a slightly different answer tomorrow than it did today. That uncertainty demands an entirely different infrastructure investment: AI products need infrastructure designed for observability, retraining, monitoring, and rollback at every stage of the lifecycle.

The National AI Policy Framework 2026 has been instrumental in accelerating federal permitting for AI data centers, and it has allocated between USD 168 million and USD 224 million to AI infrastructure and deployment support. This shows that the government treats AI infrastructure as a national-priority capital expenditure.

When public-sector planning is structured at this investment scale, private-sector AI budgets need to be adjusted accordingly.

There are three structural reasons why AI product development costs more than traditional software:

You are not just building software; you are managing a data supply chain.

Labeled training data, validation sets, and synthetic augmentation pipelines are ongoing costs that begin before you train and deploy your first model, and they never fully end.

Inference is not free.

Every time your AI product responds to a user query, it consumes compute. As usage scales, cumulative inference costs can exceed the initial build investment within 18 months.
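To see how that crossover can happen, here is a minimal Python sketch that compounds monthly inference spend and reports the first month in which cumulative inference cost passes the initial build cost. The $150,000 build cost, $4,000 starting monthly spend, and 10% monthly growth are illustrative assumptions, not benchmarks.

```python
from typing import Optional


def months_until_inference_exceeds_build(
    build_cost: float,
    first_month_inference: float,
    monthly_growth: float,
    max_months: int = 60,
) -> Optional[int]:
    """Return the first month in which cumulative inference spend >= build cost.

    Assumes inference spend grows at a constant monthly rate (a simplification).
    Returns None if the crossover does not happen within max_months.
    """
    cumulative = 0.0
    monthly = first_month_inference
    for month in range(1, max_months + 1):
        cumulative += monthly
        if cumulative >= build_cost:
            return month
        monthly *= 1 + monthly_growth  # usage-driven growth in inference spend
    return None


# Illustrative inputs only: $150K build, $4K/month inference growing 10% monthly.
print(months_until_inference_exceeds_build(150_000, 4_000, 0.10))  # → 17
```

Under these assumptions the crossover lands in month 17; slower usage growth pushes it out, and a flat $5,000/month spend would take 30 months.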

Compliance is now a baseline expense.

You can’t differentiate your product by saying, “We build compliant software.” Compliance is mandatory: your product must meet the requirements of every region you operate in. Here are some of the most important AI compliance frameworks across regions:

List of Global AI Compliances

| Region | Framework | Type | Approach | Key Focus | Enforcement |
|---|---|---|---|---|---|
| 🇪🇺 EU | EU AI Act | Law | Risk-based | Safety, rights, explainability | Very High |
| 🇺🇸 US | NIST AI RMF + State Laws | Mixed | Risk lifecycle | Governance, evaluation, innovation | Medium |
| 🇬🇧 UK | AI Regulatory Framework | Guidance | Principles-based | Fairness, transparency | Low–Med |
| 🇨🇳 China | GenAI + Algorithm Rules | Law | Centralized | Control, security, compliance | Very High |
| 🇮🇳 India | DPDP + AI Advisories | Emerging | Responsible AI | Data protection, ethics | Low |
| 🌏 Global | ISO/IEC 42001 | Standard | Governance | Lifecycle, audits, risk | Medium |
| 🌏 Global | OECD Principles | Voluntary | Ethical | Human-centric AI | Medium |
| 🌏 Global | AI Convention | Treaty | Rights-based | Human rights, alignment | Med–High |

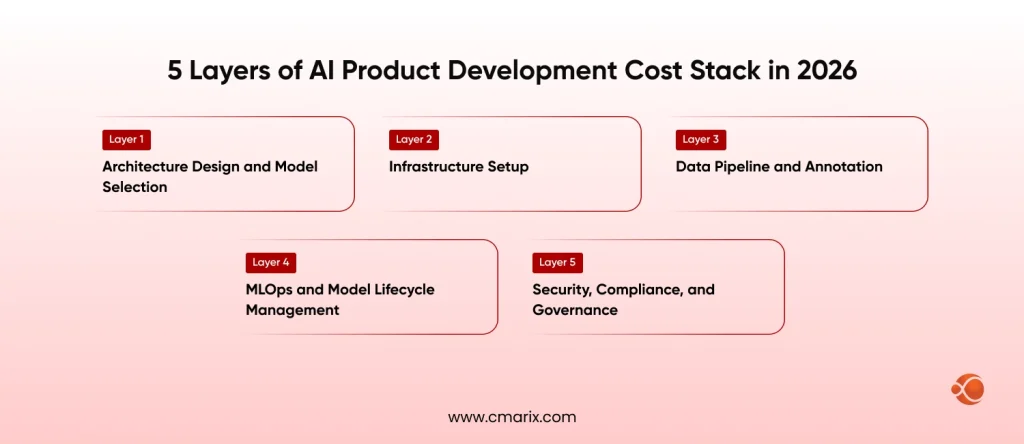

The 2026 AI Product Development Cost Stack: A Layer-by-Layer Breakdown

Layer 1: Architecture Design and Model Selection ($15,000 – $80,000)

The single most consequential cost decision happens before you write a single line of code: choosing your AI architecture.

In 2026, the dominant architecture debate is RAG versus fine-tuning, and each approach (along with a hybrid of the two) has a different cost profile:

| Architecture | Best For | Upfront Cost | Ongoing Cost Drivers |
|---|---|---|---|
| RAG | Dynamic knowledge bases, enterprise search | Lower | Vector DB hosting, retrieval latency |
| Fine-Tuning | Domain-specific precision, low-latency inference | Higher (GPU compute) | Re-training cycles |
| Hybrid | Production-grade products at scale | Highest | Moderate, optimizable |

Choosing between private (self-hosted) and public (API-based) models is another key decision, for security as much as for cost. Public APIs from providers such as OpenAI, Anthropic, and Google (Gemini) have lower upfront costs, but per-token pricing makes monthly spend hard to predict.

Self-hosted alternatives run open-source models such as LLaMA, Mistral, or Falcon on your own infrastructure. This requires a larger infrastructure investment but gives you a more predictable cost ceiling.
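One rough way to frame this trade-off is a break-even volume: the monthly token count at which per-token API spend equals the fixed monthly cost of a self-hosted deployment. The sketch below assumes a blended API price of $5 per million tokens and a $6,000/month self-hosted footprint. Both figures are hypothetical, and the model ignores self-hosted capacity limits and engineering time.

```python
def api_monthly_cost(tokens_per_month: float, usd_per_million_tokens: float) -> float:
    """Usage-based API spend: scales linearly with token volume."""
    return tokens_per_month / 1_000_000 * usd_per_million_tokens


def breakeven_tokens_per_month(self_hosted_fixed_usd: float, usd_per_million_tokens: float) -> float:
    """Token volume at which API spend equals a fixed self-hosted monthly cost."""
    return self_hosted_fixed_usd / usd_per_million_tokens * 1_000_000


def cheaper_option(tokens_per_month: float, self_hosted_fixed_usd: float, usd_per_million_tokens: float) -> str:
    """Compare API spend at this volume against the fixed self-hosted cost."""
    api = api_monthly_cost(tokens_per_month, usd_per_million_tokens)
    return "api" if api < self_hosted_fixed_usd else "self-hosted"


# Hypothetical prices: $5 per 1M tokens via API vs. $6,000/month self-hosted.
print(breakeven_tokens_per_month(6_000, 5.0))  # → 1200000000.0
print(cheaper_option(500_000_000, 6_000, 5.0))  # → api
```

Below the break-even volume the API is cheaper; above it, self-hosting starts to pay off, provided the cluster can actually serve that volume.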

- Architecture consultation and design: $15,000 – $40,000

- Model evaluation and benchmarking sprints: $8,000 – $20,000

- AI proof of concept (if needed): $12,000 – $30,000

For a deeper comparison, see the detailed enterprise cost breakdown of self-hosted AI versus OpenAI APIs.

Layer 2: Infrastructure Setup ($40,000 – $350,000+)

This is the layer that most cost estimates undercount, sometimes to a concerning degree.

AI infrastructure in 2026 is not just “renting some cloud servers”. It is a distributed system of GPU clusters, vector databases, agentic AI orchestration layers, caching infrastructure, and observability tooling that must be designed for both cost efficiency and horizontal scale. At this stage, some teams also turn these architecture components into short video explainers for stakeholder briefings and engineering onboarding.

The enterprise AI infrastructure playbook for 2026 typically includes:

GPU/TPU Compute (Training): Fine-tuning a domain-specific model on a 40-billion-parameter base model can require thousands of GPU hours. A100 clusters on AWS cost an estimated USD 2.50–4.00 per GPU hour, so a single domain-specific fine-tuning run on a large model can cost around USD 8,000–60,000 in compute alone. Organizations deploying private LLMs on AWS, Azure, or on-premises servers must factor in reserved-capacity commitments to keep these numbers manageable.
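The compute line is simple arithmetic: GPUs × wall-clock hours × hourly rate. This sketch uses the USD 2.50–4.00 per GPU hour range as defaults. The 16-GPU, 200-hour run is a hypothetical example, and real runs add overhead for failed jobs, evaluation, and storage.

```python
from typing import Tuple


def training_compute_cost(
    num_gpus: int,
    wall_clock_hours: float,
    usd_per_gpu_hour_low: float = 2.50,
    usd_per_gpu_hour_high: float = 4.00,
) -> Tuple[float, float, float]:
    """Return (gpu_hours, low_cost, high_cost) for a training or fine-tuning run."""
    gpu_hours = num_gpus * wall_clock_hours
    return gpu_hours, gpu_hours * usd_per_gpu_hour_low, gpu_hours * usd_per_gpu_hour_high


# Hypothetical run: 16 GPUs for 200 hours = 3,200 GPU hours.
gpu_hours, low, high = training_compute_cost(16, 200)
print(f"{gpu_hours:,.0f} GPU hours -> ${low:,.0f} to ${high:,.0f}")
# → 3,200 GPU hours -> $8,000 to $12,800
```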

Inference Infrastructure: Production inference is the cost that never stops. A mid-scale AI product serving 50,000 daily active users will typically consume:

- 4–8 GPU nodes for inference serving

- Load balancer + auto-scaling groups

- Caching layer (Redis or equivalent) to avoid redundant model calls

- CDN for latency optimization across geographies

Monthly inference infrastructure spend for a mid-scale product: $12,000 – $45,000/month.
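A back-of-the-envelope way to size the serving tier is to convert daily active users into peak requests per second and divide by per-node throughput. Every input below is a hypothetical placeholder to replace with your own load-test numbers: 10 requests per user per day, a 3x peak-to-average ratio, 4 requests/second per GPU node, one spare node, and $4,000 per node-month.

```python
import math


def inference_nodes_needed(
    daily_active_users: int,
    requests_per_user_per_day: float,
    peak_to_average: float,
    requests_per_sec_per_node: float,
    spare_nodes: int = 1,
) -> int:
    """Estimate GPU nodes needed to serve peak traffic, plus spare nodes for redundancy."""
    daily_requests = daily_active_users * requests_per_user_per_day
    average_rps = daily_requests / 86_400  # seconds per day
    peak_rps = average_rps * peak_to_average
    return math.ceil(peak_rps / requests_per_sec_per_node) + spare_nodes


nodes = inference_nodes_needed(50_000, 10, 3.0, 4.0)
monthly_cost = nodes * 4_000  # hypothetical fully loaded cost per node-month
print(nodes, monthly_cost)  # → 6 24000
```

Both results fall inside the 4–8 node and $12,000–$45,000/month ranges above, but per-node throughput varies widely with model size, and a caching layer reduces how many requests reach the GPUs at all.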

The Kubernetes + Microservices architecture stack is now the baseline for cost-efficient AI infrastructure. Scale-to-zero capabilities and spot instance strategies can reduce inference overhead by 30–45%, but require skilled DevOps automation services and architecture investment upfront.
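The two levers compound multiplicatively. This minimal sketch assumes the fleet scales to zero for 20% of the month, half of the remaining capacity runs on spot instances, and spot pricing is 60% below on-demand. All three figures, along with the 6-node, $5/hour fleet, are assumptions. Because spot capacity can be reclaimed by the provider, the sketch keeps part of the fleet on on-demand.

```python
from typing import Tuple


def inference_cost_with_optimizations(
    nodes: int,
    on_demand_usd_per_hour: float,
    active_fraction: float,  # share of the month the fleet runs (rest is scaled to zero)
    spot_share: float,       # share of running capacity on spot instances
    spot_discount: float,    # spot price reduction vs. on-demand, e.g. 0.6 = 60% cheaper
    hours_per_month: float = 730.0,
) -> Tuple[float, float, float]:
    """Return (baseline_usd, optimized_usd, savings_fraction)."""
    baseline = nodes * on_demand_usd_per_hour * hours_per_month
    # Blended price factor: on-demand share at full price, spot share discounted.
    blended_price_factor = (1 - spot_share) + spot_share * (1 - spot_discount)
    optimized = baseline * active_fraction * blended_price_factor
    return baseline, optimized, 1 - optimized / baseline


baseline, optimized, savings = inference_cost_with_optimizations(
    nodes=6, on_demand_usd_per_hour=5.0, active_fraction=0.8, spot_share=0.5, spot_discount=0.6
)
print(f"${baseline:,.0f} -> ${optimized:,.0f} ({savings:.0%} saved)")
# → $21,900 -> $12,264 (44% saved)
```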

Vector Database Hosting: Tools like Pinecone, Weaviate, Qdrant, or pgvector are used to store and search AI data (like embeddings for RAG systems).

Running these in production means:

- Storing large amounts of data

- Handling fast searches

- Keeping the system reliable

Because of this, it usually costs around $800 to $6,000 per month, depending on how much data is stored and how often it is used.
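Storage is the easiest part of that bill to estimate up front: number of vectors × embedding dimensions × bytes per value, plus index overhead. This sketch assumes 10 million chunks, 1,536-dimensional float32 embeddings, and a 1.5x overhead factor for the index and metadata. The overhead factor in particular is a hypothetical placeholder that varies by engine and index type.

```python
def vector_storage_gb(
    num_vectors: int,
    dimensions: int,
    bytes_per_value: int = 4,     # float32
    index_overhead: float = 1.5,  # hypothetical multiplier for index structures + metadata
) -> float:
    """Approximate storage footprint in decimal gigabytes."""
    raw_bytes = num_vectors * dimensions * bytes_per_value
    return raw_bytes * index_overhead / 1e9


print(round(vector_storage_gb(10_000_000, 1_536), 2))  # → 92.16
```

Because the estimate is linear in each term, storing smaller values (a lower `bytes_per_value`) or using a lower-dimensional embedding model shrinks the footprint proportionally.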

Networking and Data Transfer: This is another frequently overlooked cost. Data egress charges accrue as data moves between AI services, storage, and inference endpoints, and for data-intensive products they can add around USD 2,000–15,000/month at scale.


Layer 3: Data Pipeline and Annotation (USD 20,000 – USD 200,000)

This layer is deliberately not a headcount rate table; CMARIX’s AI app development cost guide covers individual developer hourly rates and role breakdowns in greater detail. What matters here is the structural decision about how you organize the build, because that decision shapes the total cost more than any individual talent hire.

Full In-House Infrastructure Build

Building an entire AI infrastructure team means hiring for roles that rarely exist as generalist positions: MLOps engineers, LLM platform engineers, data pipeline architects, and AI product managers with infrastructure expertise. A minimum team of five infrastructure-focused specialists has a fully loaded cost of $800,000–$1,100,000, including salaries, benefits, tooling, and hiring costs. Time to full productivity: 6–9 months.

This model works when the AI system itself is core proprietary IP, when there are 18 to 24 months of runway before revenue pressure, and when long-term internal ownership of all infrastructure layers is a non-negotiable strategic requirement.

Specialist Development Partner

Working with a dedicated AI development partner like CMARIX gives you immediate access to pre-assembled infrastructure teams of MLOps engineers, LLM experts, and platform architects. There is no hiring lag and no full-time headcount commitment.

| Engagement Scope | Investment Range | Typical Timeline |
|---|---|---|
| AI Infrastructure Architecture + POC | $25,000 – $70,000 | 6–10 weeks |
| Production AI Product (infra + MLOps) | $120,000 – $350,000 | 16–28 weeks |
| Enterprise AI Platform (multi-model, compliant) | $350,000 – $700,000+ | 6–12 months |

Hybrid Transition Model

The most cost-efficient model for most growth-stage businesses: a specialized partner like CMARIX builds the infrastructure foundation, then hands it over to a smaller internal team that owns it long term. This approach saves 40% to 60% of the initial build time that hiring for a full in-house implementation would require.

CMARIX’s AI-powered MVP development is structured for exactly this transition, delivering working inference pipelines and MLOps foundations within 8–12 weeks, with architecture documentation and knowledge transfer built into the delivery model.

Layer 4: MLOps and Model Lifecycle Management ($50,000 – $120,000/year)

This is the post-launch cost category that almost no initial budget includes, and it is not optional for any production AI product.

Model Monitoring

AI model performance can degrade within weeks, and the degradation is rarely obvious at first: it is often noticed only when users begin to complain or business metrics decline. Monitoring tools such as Evidently AI, Arize Phoenix, and WhyLabs catch degradation earlier and cost anywhere between $500 and $5,000 a month, depending on volume.
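Under the hood, drift monitoring compares the distribution of live inputs or model scores against a reference window. One widely used statistic is the Population Stability Index (PSI). The pure-Python sketch below computes it with bins at reference-set deciles; it illustrates the technique and is not how any particular tool named above implements it. A common rule of thumb treats PSI above 0.25 as a significant shift, but thresholds should be tuned per feature. The sample data is synthetic.

```python
import math
from bisect import bisect_right
from typing import List, Sequence


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    n_bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a reference sample and a current sample of one numeric feature."""
    ref_sorted = sorted(reference)
    # Interior bin edges at reference quantiles (deciles when n_bins=10).
    edges = [ref_sorted[int(len(ref_sorted) * i / n_bins)] for i in range(1, n_bins)]

    def bin_proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * n_bins
        for v in values:
            counts[bisect_right(edges, v)] += 1
        total = len(values)
        # Floor at eps so empty bins don't cause log(0) or division by zero.
        return [max(c / total, eps) for c in counts]

    p = bin_proportions(reference)
    q = bin_proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


reference = [i / 1000 for i in range(1000)]      # e.g. last month's scores, spread over [0, 1)
current = [0.5 + i / 2000 for i in range(1000)]  # this week's scores, shifted to [0.5, 1)
print(population_stability_index(reference, reference))       # → 0.0
print(population_stability_index(reference, current) > 0.25)  # → True
```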

Retraining Pipelines

Scheduled or trigger-based retraining requires orchestrated compute, versioned datasets, experiment tracking, and CI/CD pipelines adapted for model artifacts rather than code. Initial pipeline setup with MLflow, Kubeflow, or SageMaker Pipelines: $15,000 – $40,000. Ongoing compute and engineering: $3,000 – $12,000/month.
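A scheduled-or-triggered policy can be expressed as a small decision function: retrain when the schedule lapses, when a drift score crosses a threshold, or when accuracy on fresh labeled data falls too far. The function name and thresholds below (30 days, drift score 0.25, a 5-point accuracy drop) are hypothetical defaults. In practice, `drift_score` would be whatever drift statistic your monitoring tool reports, and the function would be called from the orchestrator that runs your pipelines.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RetrainDecision:
    retrain: bool
    reasons: List[str]


def should_retrain(
    days_since_last_training: int,
    drift_score: float,
    baseline_accuracy: float,
    current_accuracy: float,
    max_days: int = 30,
    drift_threshold: float = 0.25,
    max_accuracy_drop: float = 0.05,
) -> RetrainDecision:
    """Combine a schedule trigger with drift and performance triggers."""
    reasons = []
    if days_since_last_training >= max_days:
        reasons.append("schedule")
    if drift_score > drift_threshold:
        reasons.append("drift")
    if baseline_accuracy - current_accuracy > max_accuracy_drop:
        reasons.append("accuracy")
    return RetrainDecision(retrain=bool(reasons), reasons=reasons)


print(should_retrain(12, 0.31, 0.91, 0.88))
# → RetrainDecision(retrain=True, reasons=['drift'])
```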

Experiment Tracking and Model Registry

Weights & Biases, MLflow, or Comet ML: $200 to $2,000/month, depending on team size and number of experiments. Combining pipeline engineering, tooling, monitoring, and compute, total first-year MLOps lifecycle management costs run $50,000 to $120,000.

Layer 5: Security, Compliance, and Governance ($20,000 – $150,000)

As McKinsey points out, 49% of enterprises currently report measurable cost savings from implementing AI in service operations. However, those savings materialize only when the AI product is built on a compliant foundation. Compliance is not a differentiator in 2026; it is the new baseline for enterprise sales.

NIST AI RMF Engineering

At the enterprise level, you will typically be expected to align with NIST’s AI Risk Management Framework (Govern, Map, Measure, Manage). The upfront cost of the gap assessment, control deployment, and supporting documentation is $15k–$40k, and maintaining audit readiness adds roughly $8k–$20k annually.

Data Privacy and Residency Architecture

Each applicable data protection law carries specific technical requirements, including encryption (at rest and in transit), data storage location/residency, auditing, and proper data disposal (right-to-erasure pipelines). For a healthcare AI application, the initial compliance engineering cost is estimated at $10k to $30k, plus $3k to $8k per month in ongoing compliance engineering to stay compliant.
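At its core, a right-to-erasure pipeline deletes every record tied to a user across all stores, including derived data such as embeddings, and keeps an audit trail that proves the deletion happened without retaining the raw identifier. The sketch below uses in-memory dictionaries as stand-ins. The store names (`chat_logs`, `feature_store`, `vector_index`) and the HMAC key are hypothetical, and a real pipeline would call each system’s own delete API.

```python
import hashlib
import hmac
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List


@dataclass
class ErasureRecord:
    user_ref: str                  # keyed hash of the user ID, not the raw ID
    purged_counts: Dict[str, int]  # records removed per store
    completed_at: str              # UTC timestamp for the audit trail


def erase_user(
    user_id: str,
    stores: Dict[str, Dict[str, list]],
    audit_log: List[ErasureRecord],
    audit_key: bytes,
) -> ErasureRecord:
    """Delete a user's records from every store and append an audit entry."""
    purged_counts = {}
    for store_name, store in stores.items():
        removed = store.pop(user_id, [])  # hypothetical store layout: user_id -> list of records
        purged_counts[store_name] = len(removed)
    record = ErasureRecord(
        user_ref=hmac.new(audit_key, user_id.encode(), hashlib.sha256).hexdigest(),
        purged_counts=purged_counts,
        completed_at=datetime.now(timezone.utc).isoformat(),
    )
    audit_log.append(record)
    return record


stores = {
    "chat_logs": {"u42": ["msg1", "msg2"], "u7": ["msg3"]},
    "feature_store": {"u42": [{"age_bucket": "30-39"}]},
    "vector_index": {"u42": ["emb-001", "emb-002", "emb-003"]},
}
audit_log: List[ErasureRecord] = []
record = erase_user("u42", stores, audit_log, audit_key=b"hypothetical-secret")
print(record.purged_counts)
# → {'chat_logs': 2, 'feature_store': 1, 'vector_index': 3}
```

A keyed hash (HMAC) is used for the audit reference so the log cannot be reversed into user IDs by simply hashing candidate IDs without the key.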

AI Red-Teaming and Safety Audits

Before signing an enterprise contract or deploying into a regulated sector, companies must run adversarial-input tests, evaluate resistance to jailbreaks, and audit outputs for toxicity. An outside audit typically costs $8k–$25k per cycle.

The Hidden Cost Layer: What Appears After Launch

Based on CMARIX’s experience in providing end-to-end AI software development solutions, these are the cost categories that surface reliably after initial budgets get approved and initial launches are completed:

| Topic | What It Means | Estimated Cost | Key Insight |
|---|---|---|---|
| Poor Token Management | Poor prompts use more tokens than needed | $5,000 – $40,000/month | Optimize prompts to reduce API cost |
| Vendor Lock-In | Hard to switch from one AI provider to another | $20,000 – $80,000 (one-time) | Plan flexible architecture early |
| Agent Complexity | Multiple AI agents + tools increase system complexity | $15,000 – $50,000 | Design system properly from start |
| Feedback System | Collecting user feedback to improve AI responses | $10,000 – $30,000/year | Needed to improve AI over time |
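The token-management line is straightforward to quantify: monthly spend is requests × tokens × price, split into input and output tokens. This sketch compares a verbose 3,000-token prompt with a trimmed 1,200-token version at 1.5 million requests per month. The $2.50 and $10.00 per-million-token input and output prices are hypothetical, so substitute your provider’s current rates.

```python
def monthly_llm_cost(
    requests_per_month: int,
    input_tokens_per_request: int,
    output_tokens_per_request: int,
    usd_per_million_input: float,
    usd_per_million_output: float,
) -> float:
    """Monthly API spend, pricing input and output tokens separately."""
    input_cost = requests_per_month * input_tokens_per_request / 1_000_000 * usd_per_million_input
    output_cost = requests_per_month * output_tokens_per_request / 1_000_000 * usd_per_million_output
    return input_cost + output_cost


REQUESTS = 1_500_000
verbose = monthly_llm_cost(REQUESTS, 3_000, 300, 2.50, 10.00)
trimmed = monthly_llm_cost(REQUESTS, 1_200, 300, 2.50, 10.00)
print(f"verbose ${verbose:,.0f}, trimmed ${trimmed:,.0f}, saved ${verbose - trimmed:,.0f}/month")
# → verbose $15,750, trimmed $9,000, saved $6,750/month
```

Under these assumptions, the saving lands at the low end of the table’s range, and it scales linearly with request volume.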

Generative AI vs. Traditional ML: Comparing Infrastructure Costs

The choice between building a Generative AI product and a traditional ML product carries significant infrastructure cost implications that extend well beyond model selection.

| Cost Dimension | Traditional ML | Generative AI |
|---|---|---|
| Training Compute | Moderate – takes days to weeks on GPUs | Very high – can take weeks on large GPU clusters |
| Inference Cost (per query) | Low – fast and inexpensive | High – token-based, expensive at scale |
| Vector Database | Rarely required | Commonly required (for RAG systems) |
| MLOps Complexity | Standard retraining pipelines | More complex – includes RLHF, safety checks |
| Compliance Engineering | Basic data protection | Advanced – includes hallucination control, output auditing |
| Monitoring | Standard metrics (accuracy, latency) | Advanced – semantic drift, toxicity, factual accuracy |
| Year 1 Infrastructure Cost (mid-scale) | $80,000 – $200,000 | $200,000 – $500,000+ |

Generative AI products typically require 2–3x the infrastructure investment of equivalent traditional ML products. Training is only part of the reason; the larger driver is that the operational surface area of a language model in production (token-based inference, vector databases, safety monitoring, and output auditing) is categorically larger.

Structuring the Build vs. Outsource Decision

This is the strategic inflection point that determines Year 1 total cost more than any individual budget line.

Build the infrastructure in-house when:

- The AI system is core proprietary IP with specific security or data sovereignty requirements.

- An existing platform engineering team with MLOps experience is already in place.

- Timeline allows 12–18 months for team assembly, onboarding, and infrastructure build.

- Long-term internal ownership of every infrastructure layer is a non-negotiable requirement.

Partner with a specialist firm when:

- A production-ready infrastructure stack is needed within 12–20 weeks.

- The engineering team has application experience but not MLOps or LLM infrastructure depth.

- Commercial performance needs to be validated before committing to a full internal infrastructure team.

- Cost certainty matters more than team ownership in the near term.

Use the hybrid transition model when:

- Speed-to-market is the immediate priority, but long-term internal ownership is the end goal.

- Hiring into a production-ready codebase is preferable to building the foundation from scratch.

- The budget supports a partner-led build, followed by a handover to a smaller internal AI software development team.

CMARIX offers strategic AI consulting engagements, including infrastructure architecture assessments, build-vs-buy analyses, and phased roadmaps, specifically designed to answer this question before a budget is committed.

Infrastructure Cost Reference Ranges: 2026

These ranges cover the infrastructure, MLOps, compliance, and architecture layers only. They exclude developer hourly rates, UI/UX, QA, and project management costs, which are covered in CMARIX’s AI app development cost guide for enterprise software engineering.

| Product Scale | Year 1 Cost (Infrastructure + MLOps + Compliance) |
|---|---|
| AI feature on existing product (low traffic) | $30,000 – $90,000 |
| Standalone AI product (MVP, under 10K DAU) | $80,000 – $180,000 |
| Production AI product (50K+ DAU, full MLOps) | $200,000 – $450,000 |
| Enterprise AI platform (multi-model, compliance-ready) | $450,000 – $1,000,000+ |

Infrastructure costs scale with user volume and query complexity, not with team size, which is why they consistently surprise teams budgeting for AI products for the first time.

Conclusion: Infrastructure Is Strategy, Not Overhead

Most AI scaling failures are caused not by the model itself but by missing production infrastructure: scalable serving, drift monitoring, compliance engineering, and cost optimization that keeps unbudgeted cloud spend from accumulating. This is why you need a team with expertise in cloud cost optimization best practices.

Infrastructure choices made in the first week of AI product development determine inference costs in year three. Early architectural decisions also determine how complex MLOps will be over the life of the product; they are not just engineering issues but strategic cost decisions disguised as technology choices.

CMARIX helps product teams understand their AI infrastructure costs from Sprint One as they architect, develop, and run their AI systems. That visibility spans custom generative AI development and DevOps automation, Python-native AI pipelines, and enterprise compliance readiness. If you are scoping an AI product development project and need an estimate of infrastructure costs before committing to a build approach, the first step is an architecture assessment with CMARIX.

FAQs Related to the Cost of Developing an AI Product

What is the average cost to build a production-grade AI product in 2026?

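Based on the reference ranges above, infrastructure, MLOps, and compliance typically cost $80,000–$180,000 in Year 1 for an MVP and $200,000–$450,000 for a production product with 50K+ DAU. A production-grade AI product demands robust architecture, MLOps, and scalable APIs, and the biggest cost drivers are complexity, regulatory requirements, and multi-model integration.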

Why do infrastructure costs often exceed initial estimates?

AI workloads are hard to predict: GPU consumption, data growth, and real-time processing demands all scale with usage, which pushes infrastructure costs above the original budget.

How much should I budget for ongoing maintenance?

Maintenance includes monitoring, retraining, scaling, and handling data drift, because models need continuous updates to stay stable in production. For MLOps lifecycle management alone, budget roughly $50,000–$120,000 in the first year.

What are the primary cost differences between Generative AI and traditional ML?

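Generative AI typically requires 2–3x the infrastructure investment of traditional ML. Per-query inference is token-based and expensive at scale, RAG systems usually need a vector database, and monitoring must cover semantic drift, toxicity, and factual accuracy. Traditional ML models, often built on structured data, have cheaper inference and standard monitoring, so their costs are lower.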

How does data quality impact the final price tag?

Poor-quality data leads to multiple rounds of cleaning, labeling, and retraining. Good-quality data speeds up development and reduces the number of retraining cycles.

Is it cheaper to build in-house or outsource AI development in 2026?

In-house development provides control but also requires expertise within the company. It is a time-consuming process. Outsourcing is a quicker option with the availability of skilled resources and a well-structured delivery framework.