Quick Summary: AI risks are no longer a future problem; they’re happening now. From data poisoning and prompt injection to deepfakes and regulatory pressure, they touch almost every part of the business. This guide breaks down every major risk category, what’s driving them, and how organizations can build smarter defenses before the next incident forces their hand.

Let’s be straight: AI is no longer a trend. It’s running supply chains, approving loans, writing legal documents, and helping diagnose patients. And while that’s genuinely impressive, it also means the failure modes have never been more expensive.

According to PwC, AI could contribute up to $15.7 trillion to the global economy by 2030. That scale brings both opportunity and a growing list of AI security risks that organizations cannot afford to dismiss. The companies building fast are not always building carefully. Governance structures are lagging behind the technology. Regulatory bodies are still catching up. And threat actors? They’ve already started exploiting the gaps.

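This blog breaks down the most important AI risks across four categories (ethical and social, technical and security, operational and systemic, and infrastructure and environmental), explains how they work in simple terms, and outlines what businesses and governments can realistically do about them.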

The Rapid Growth of Artificial Intelligence

In just a few years, AI adoption has moved from pilot projects to mission-critical infrastructure. AI models are much more capable, deployment costs have dropped, and the range of use cases has expanded. What started as recommendation engines is now living inside legal workflows, autonomous agents, and financial modeling, making real-time decisions.

Why Understanding AI Risks Is Important

The same capabilities that make AI useful also make it exploitable. Misalignment, misuse, and technical failure are no longer theoretical. They’re showing up in data breaches, regulatory fines, and public incidents. Businesses that treat AI risk as an IT footnote are working with a blind spot.

The Increasing Dependence on AI Systems

Across healthcare, finance, legal, and defense, AI has become embedded in decision pipelines that once required human judgment. That dependence is growing faster than most teams’ ability to audit, explain, or course-correct the models driving those decisions.

The Current State of AI Adoption

AI has moved well past the pilot stage. It’s now embedded in core business operations across nearly every industry, often running quietly in the background of decisions that used to require human judgment. Here’s where things stand across three key dimensions:

- AI expansion across industries. Sectors like healthcare, finance, and legal all depend on AI for decisions that directly affect people. When the model gets it wrong, the consequences go beyond cost.

- How are businesses using AI today? AI now runs customer service, HR screening, fraud detection, and content generation at scale, often with little human review of outputs.

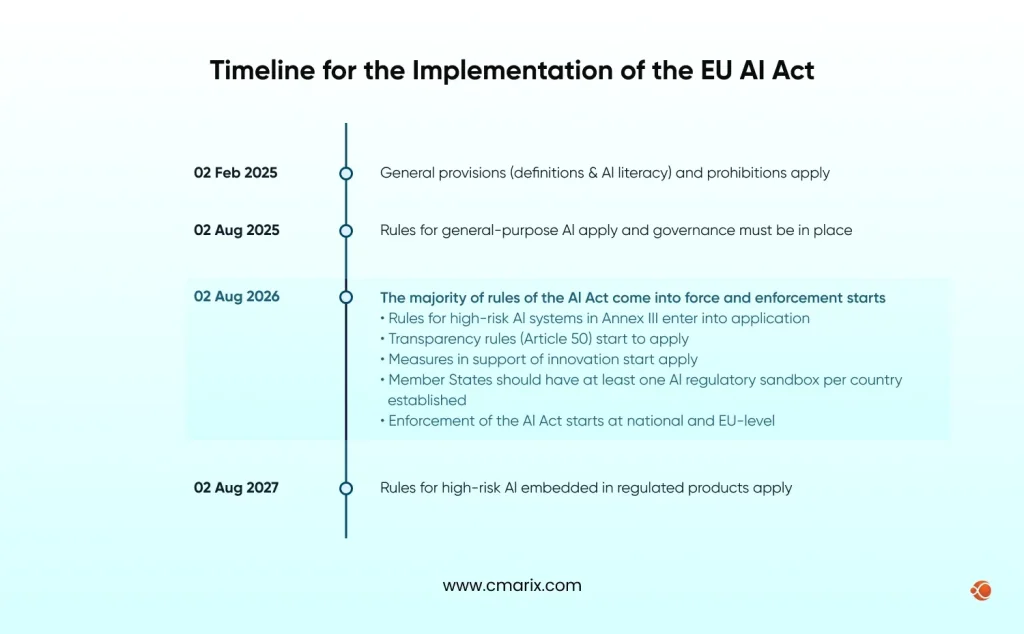

- Why is risk awareness important? The EU AI Act’s August 2, 2026, deadline makes transparency rules for generative AI enforceable law. Competitive pressure and liability gaps are pushing organizations to act before regulators force them to.

| Category | Risk | What It Means | Industries / Sectors Impacted |
| --- | --- | --- | --- |
| Ethical & Social Risks | Bias and Discrimination | AI models trained on historical data can reproduce existing inequalities in hiring, lending, and moderation systems. | HR & Recruitment, Banking & Lending, Insurance, Social Media Platforms |
| Ethical & Social Risks | Privacy Concerns | AI systems rely on large volumes of personal data, increasing the chance of misuse or exposure. | Healthcare, Finance, E-commerce, Government Services |
| Ethical & Social Risks | AI Hallucinations | Large language models may generate incorrect information while sounding confident. | Healthcare, Legal Services, Financial Advisory, Customer Support |
| Ethical & Social Risks | Job Displacement | AI automation is affecting writing, coding, analysis, and customer support roles simultaneously. | Media & Publishing, IT & Software Development, Customer Service, Marketing |
| Ethical & Social Risks | Misinformation and Deepfakes | AI tools can generate realistic fake video, audio, and text content. | Media & Journalism, Politics & Elections, Financial Markets, Public Relations |
| Ethical & Social Risks | Ethical and Accountability Issues | Responsibility for AI-driven harm is often distributed across developers, vendors, and deploying organizations. | Government & Policy, Legal Sector, Enterprise Technology Providers |
| Technical & Security Risks | Lack of Transparency (Black Box Problem) | Many advanced AI models produce outputs without clear explanations of how decisions were made. | Healthcare Diagnostics, Insurance Underwriting, Finance, Risk Management |
| Technical & Security Risks | Security Vulnerabilities in AI Systems | AI infrastructure introduces new attack surfaces through data pipelines, APIs, and compute environments. | Cybersecurity, Cloud Infrastructure, Enterprise SaaS, Financial Systems |
| Technical & Security Risks | Data Poisoning Attacks | Attackers manipulate training data to influence how a model behaves after deployment. | Autonomous Systems, Fraud Detection, Recommendation Engines, Defense |
| Technical & Security Risks | Prompt Injection Attacks | Malicious prompts manipulate AI systems into performing unintended actions. | AI Assistants, Enterprise Automation, Customer Support Bots, Developer Tools |
| Technical & Security Risks | Model Theft or Extraction | Attackers reconstruct a model’s behavior or internal logic by repeatedly querying it. | AI Product Companies, SaaS Platforms, Research Organizations |
| Technical & Security Risks | Data Theft and Unauthorized Access | AI systems often store or process large amounts of sensitive data. | Healthcare, Finance, Government, Enterprise Data Platforms |
| Operational & Systemic Risks | Model Collapse | Training models on AI-generated content can reduce accuracy and diversity over time. | Search Engines, Content Platforms, Research Organizations |
| Operational & Systemic Risks | Emergent Behavior in Advanced AI | Large models may develop unexpected capabilities or behaviors not seen during testing. | Autonomous Systems, Defense, Advanced AI Research, Enterprise Automation |
| Operational & Systemic Risks | Human Dependency Risk | As AI systems become more accurate, human oversight may weaken. | Healthcare Decision Support, Aviation Systems, Financial Risk Analysis |
| Operational & Systemic Risks | AI Supply Chain Vulnerabilities | AI systems depend on third-party models, datasets, and open-source components. | Cloud Platforms, Enterprise Software, AI Startups |
| Infrastructure & Environmental Risks | Energy Consumption and Carbon Footprint | Training and operating large AI models require significant energy. | Cloud Providers, Data Centers, Large Tech Companies |
| Infrastructure & Environmental Risks | Water Usage in AI Data Centers | Cooling infrastructure in AI data centers requires large volumes of water. | Data Center Operators, Cloud Infrastructure Providers |
| Infrastructure & Environmental Risks | Gap Between Green AI Goals and Reality | AI compute demand is growing faster than renewable energy adoption. | Technology Companies, Cloud Providers, ESG-regulated Enterprises |

Key AI Risks and Challenges to Watch in 2026

Ethical and Social Risks

Bias and Discrimination

Models trained on historical data reproduce historical inequities. Algorithmic bias auditing exists because this shows up in hiring tools, credit scoring, and content moderation, often without anyone realizing the model is the problem.

Why it’s a risk:

- Biased outputs can violate anti-discrimination laws and expose organizations to legal liability.

- Once deployed at scale, biased decisions compound quickly before anyone flags the pattern.

- Errors are hard to detect because the model performs well on aggregate metrics while consistently failing particular groups.
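The last point is easy to see with a small audit. The following is a minimal sketch, not a full fairness toolkit: the group labels, the toy records, and the `accuracy_by_group` helper are all hypothetical and exist only to show how a healthy overall score can hide a failing subgroup.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """Return accuracy per group for (group, y_true, y_pred) tuples."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for group, y_true, y_pred in records:
        totals[group] += 1
        correct[group] += int(y_true == y_pred)
    return {group: correct[group] / totals[group] for group in totals}

# Hypothetical toy data: 90 records for group "A", 10 for group "B".
records = (
    [("A", 1, 1)] * 88 + [("A", 1, 0)] * 2
    + [("B", 1, 1)] * 4 + [("B", 1, 0)] * 6
)

# Aggregate accuracy across every record, ignoring group membership.
overall = sum(y_true == y_pred for _, y_true, y_pred in records) / len(records)
per_group = accuracy_by_group(records)

print(f"overall: {overall:.2f}")
for group, acc in sorted(per_group.items()):
    print(f"group {group}: {acc:.2f}")
```

In this toy set the aggregate accuracy is 0.92 while group B sits at 0.40, which is why audits typically report metrics per group rather than a single headline number.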

Privacy Concerns

AI systems consume huge amounts of personal data, and the consequences are already being recorded. The OECD AI Incidents Monitor tracks real-time AI-related harms globally, and privacy violations consistently rank among the most frequently reported. Without strong governance, exposure under HIPAA, GDPR, and state-level regulations is not a future risk. It’s a present one.

Why it’s a risk:

- A misconfiguration in the data pipeline can convert an AI system into a privacy breach at scale.

- Training data carries personal information that the model can accidentally replicate in its output.

- Users are often unaware of how their data is used, which creates gaps in both compliance and trust.
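One partial control for personal information in training data is redacting obvious identifiers before text enters a pipeline. The sketch below is an illustration only: the two regular expressions are simplified assumptions that catch common email and US-style phone formats, and they will miss names, addresses, and many other identifiers.

```python
import re

# Simplified patterns (assumptions for illustration, not production-grade).
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")

def redact(text):
    """Replace matched emails and phone numbers with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)

sample = "Contact Jane at jane.doe@example.com or 555-123-4567."
redacted = redact(sample)
print(redacted)
```

Note that the name "Jane" survives redaction here. Pattern matching is a first filter, not a substitute for data governance.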

AI Hallucinations

LLMs can generate false information with the same confidence as accurate information. In low-stakes settings, that’s a nuisance. In medical, legal, or financial contexts, it’s a direct liability.

Why it’s a risk:

- Users often can’t distinguish hallucinated content from accurate content without independent verification.

- Current mitigation techniques reduce hallucination rates but do not remove them.

- Downstream systems that consume AI outputs can amplify a single hallucination across many decisions.

Job Displacement

Technology has displaced jobs before. The difference with AI is scope and speed. White-collar roles in writing, analysis, coding, and customer support are being affected simultaneously. The displacement is also uneven: the UNESCO recommendation on the ethics of AI highlights a growing digital divide, where communities in the Global South face disproportionate harm from AI bias and job losses with far fewer resources to adapt.

Why it’s a risk:

- Displacement is occurring across multiple industries simultaneously, making it difficult for individuals to transition between industries.

- Economic and social instability can follow when displacement outpaces the capacity to retrain and support affected workers.

- The new jobs AI creates require different skills from the jobs it eliminates.

Misinformation and Deepfakes

Synthetic media forensics is now a legitimate discipline because tools for generating convincing fake video, text, and audio are widely accessible. The International AI Safety Report 2026, backed by 30+ nations, specifically flags agentic AI as an accelerant of misinformation and cybersecurity threats, operating at a scale and speed that human teams can’t match.

Why it’s a risk:

- Deepfakes are increasingly hard to distinguish from real media, which is eroding trust in audio and video evidence.

- Automated disinformation can be tailored and deployed more quickly than fact-checking organizations can react to it.

- Financial markets, election cycles, and public health are critical areas of concern for coordinated synthetic media attacks.

Ethical and Accountability Issues

When an AI system causes harm, accountability is often distributed across multiple parties. The data team, deploying organization, model team, and end user all carry partial responsibility, and legal frameworks haven’t caught up. The UN’s Governing AI for Humanity report specifically warns of growing risks to peace, security, and global democracy through 2030 as this accountability gap widens across borders. That concern extends directly into AI surveillance software development, where transparency and oversight requirements are still largely undefined.

Why it’s a risk:

- No single party is clearly responsible, which means affected individuals often have no clear path to recourse.

- Vendors frequently disclaim liability through terms of service, leaving deploying organizations exposed.

- The faster organizations deploy AI, the harder it becomes to reconstruct decision trails after something goes wrong.

Technical and Security Risks

Lack of Transparency (Black Box Problem)

High-performing models are frequently uninterpretable. You can see the output but not the reasoning behind it. That makes auditing for bias, failure diagnosis, and regulatory compliance significantly harder.

Why it’s a risk:

- Regulators in finance, healthcare, and insurance increasingly require explainable decisions, which black-box models can’t provide.

- Without interpretability, teams can’t identify when a model has quietly started producing wrong outputs.

- Explainability gaps make it difficult to defend AI-driven decisions in legal or audit contexts.

Security Vulnerabilities in AI Systems

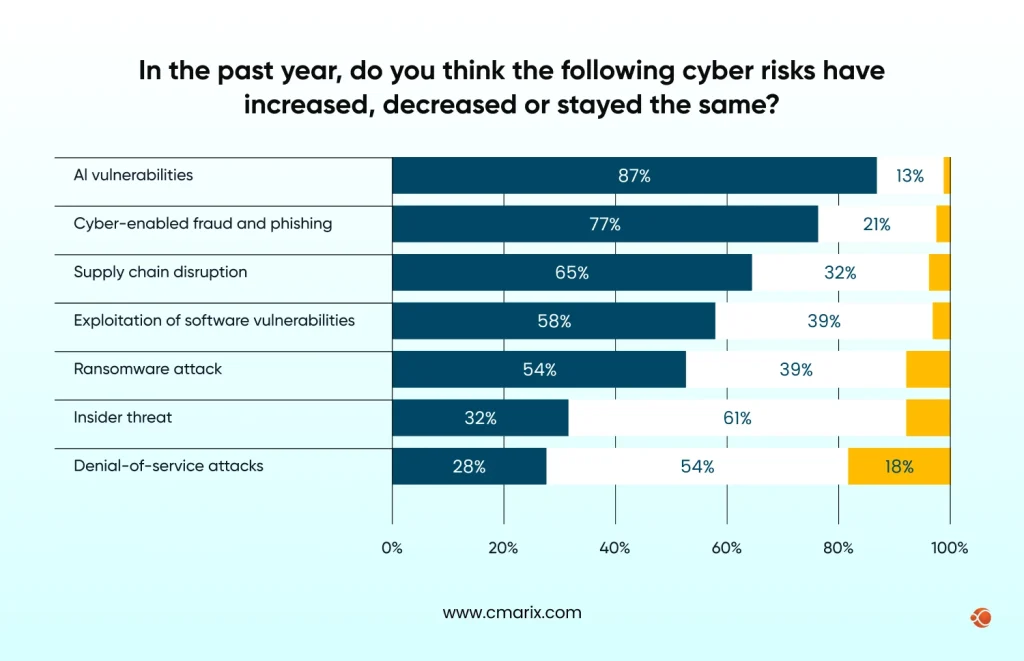

AI security risks go beyond standard software vulnerabilities. According to the WEF Global Cybersecurity Outlook 2026, 87% of business and security leaders now view AI-related vulnerabilities as their fastest-growing risk. AI infrastructure spans data pipelines, compute environments, and APIs, each of which is a different attack surface.

Why it’s a risk:

- Security testing for AI systems requires different approaches than traditional software testing, and most organizations haven’t adapted.

- AI APIs expose model capabilities externally, making them targets for abuse, probing, and exploitation.

- Misconfigured cloud infrastructure around AI workloads is a common source of unauthorized access.

Data Poisoning Attacks

Adversarial machine learning includes attacks where bad actors corrupt training data to manipulate how a model behaves after deployment. By the time the effect surfaces, the model is already in production.

Why it’s a risk:

- A poisoned model can behave normally in almost all conditions and fail only on the few inputs the attacker targeted.

- Retraining from clean data is costly and time-consuming.

- Detecting poisoning requires audit trails for all training data, which many models lack.
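An audit trail for training data can start with something as simple as a fingerprint per record. The sketch below is one possible approach, assuming each record is a JSON-serializable dict with a unique `id` field; the helper names are hypothetical. It detects changes made after a snapshot was taken and does nothing about poison that was already present at collection time.

```python
import hashlib
import json

def fingerprint(record):
    """SHA-256 over a canonical JSON encoding of one record."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()

def build_manifest(dataset):
    """Map each record id to its fingerprint."""
    return {rec["id"]: fingerprint(rec) for rec in dataset}

def changed_records(manifest, dataset):
    """Compare a dataset against an earlier manifest."""
    current = build_manifest(dataset)
    return {
        "modified": sorted(k for k in current if k in manifest and current[k] != manifest[k]),
        "added": sorted(k for k in current if k not in manifest),
        "removed": sorted(k for k in manifest if k not in current),
    }

# Hypothetical toy dataset, snapshotted before training.
dataset = [
    {"id": "r1", "text": "refund approved", "label": "legit"},
    {"id": "r2", "text": "wire funds now", "label": "fraud"},
]
manifest = build_manifest(dataset)

# Simulated tampering: one label flipped and one record injected.
tampered = [
    {"id": "r1", "text": "refund approved", "label": "legit"},
    {"id": "r2", "text": "wire funds now", "label": "legit"},
    {"id": "r3", "text": "urgent transfer", "label": "legit"},
]
report = changed_records(manifest, tampered)
print(report)
```

Storing the manifest separately from the data, with its own access controls, is what makes the comparison meaningful.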

Prompt Injection Attacks

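Prompt injection uses malicious prompts to manipulate an AI system into performing unintended actions. As agentic AI expands into systems that take real-world actions, the impact of AI agents on cybersecurity grows with it, both as a threat vector and as a defense tool.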

Why it’s a risk:

- Agents taking real-world actions (sending emails, querying databases, executing code) amplify the damage of a successful injection.

- Standard input validation doesn’t catch prompt injection because the malicious content is semantically valid text.

- Defense is still an open research problem with no fully reliable solution available today.
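Because filtering the text itself is unreliable, one commonly discussed mitigation is to limit what an agent can do regardless of what it was told. The sketch below is a minimal illustration of that idea, not a complete defense: the tool names, the two policy sets, and the `gate` function are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical policy: which tools run freely and which need a human.
READ_ONLY = {"search_docs", "get_order_status"}
NEEDS_APPROVAL = {"send_email", "run_sql", "execute_code"}

@dataclass
class ToolCall:
    name: str
    args: dict = field(default_factory=dict)

def gate(call, approved_by=None):
    """Decide whether an agent's requested tool call may proceed."""
    if call.name in READ_ONLY:
        return "allow"
    if call.name in NEEDS_APPROVAL:
        # Side-effecting actions wait until a named reviewer signs off.
        return "allow" if approved_by else "hold_for_review"
    # Anything not explicitly listed is denied by default.
    return "deny"

print(gate(ToolCall("search_docs", {"query": "refund policy"})))
print(gate(ToolCall("send_email", {"to": "cfo@example.com"})))
print(gate(ToolCall("send_email", {"to": "cfo@example.com"}), approved_by="analyst-1"))
print(gate(ToolCall("delete_all_records")))
```

The design choice here is deny-by-default: an injected instruction can still ask for a dangerous action, but the request stalls at the gate instead of executing.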

Model Theft or Extraction

By querying a model systematically, an attacker can reconstruct its behavior or weights. For organizations with proprietary models trained on sensitive data, this is both an IP and a competitive risk.

Why it’s a risk:

- Extracted models can be used to find adversarial inputs that fool the original system.

- Proprietary training data embedded in model weights can be partially recovered through extraction.

- Rate limiting and query monitoring alone are insufficient to prevent determined extraction attempts.
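For reference, this is roughly what a basic query monitor looks like. It is a sketch under simple assumptions (a per-client sliding window, timestamps in seconds, a hypothetical `QueryMonitor` class) and, as noted above, it is one layer rather than a complete defense: an attacker who spreads queries across many accounts stays under the threshold.

```python
from collections import defaultdict, deque

class QueryMonitor:
    """Flag clients that exceed max_queries within a sliding time window."""

    def __init__(self, max_queries, window_seconds):
        self.max_queries = max_queries
        self.window = window_seconds
        self.history = defaultdict(deque)

    def record(self, client_id, timestamp):
        """Log one query and return True if the client is over the limit."""
        queue = self.history[client_id]
        queue.append(timestamp)
        # Drop timestamps that have aged out of the window.
        while queue and queue[0] <= timestamp - self.window:
            queue.popleft()
        return len(queue) > self.max_queries

monitor = QueryMonitor(max_queries=3, window_seconds=60)
flags = [monitor.record("client-1", t) for t in (0, 10, 20, 30)]
later = monitor.record("client-1", 100)
print(flags, later)
```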

Data Theft and Unauthorized Access

AI systems are trained on and given access to sensitive data, which creates concentrated risk. A single breach can expose large amounts of proprietary or personal information, often before anyone detects it.

Why it’s a risk:

- AI systems are granted broad data access to function effectively, which creates a large blast radius if compromised.

- Logs and audit trails for AI data access are often less mature than those for traditional systems.

- Regulatory penalties for AI-related data breaches are increasing as governments close gaps in existing frameworks.

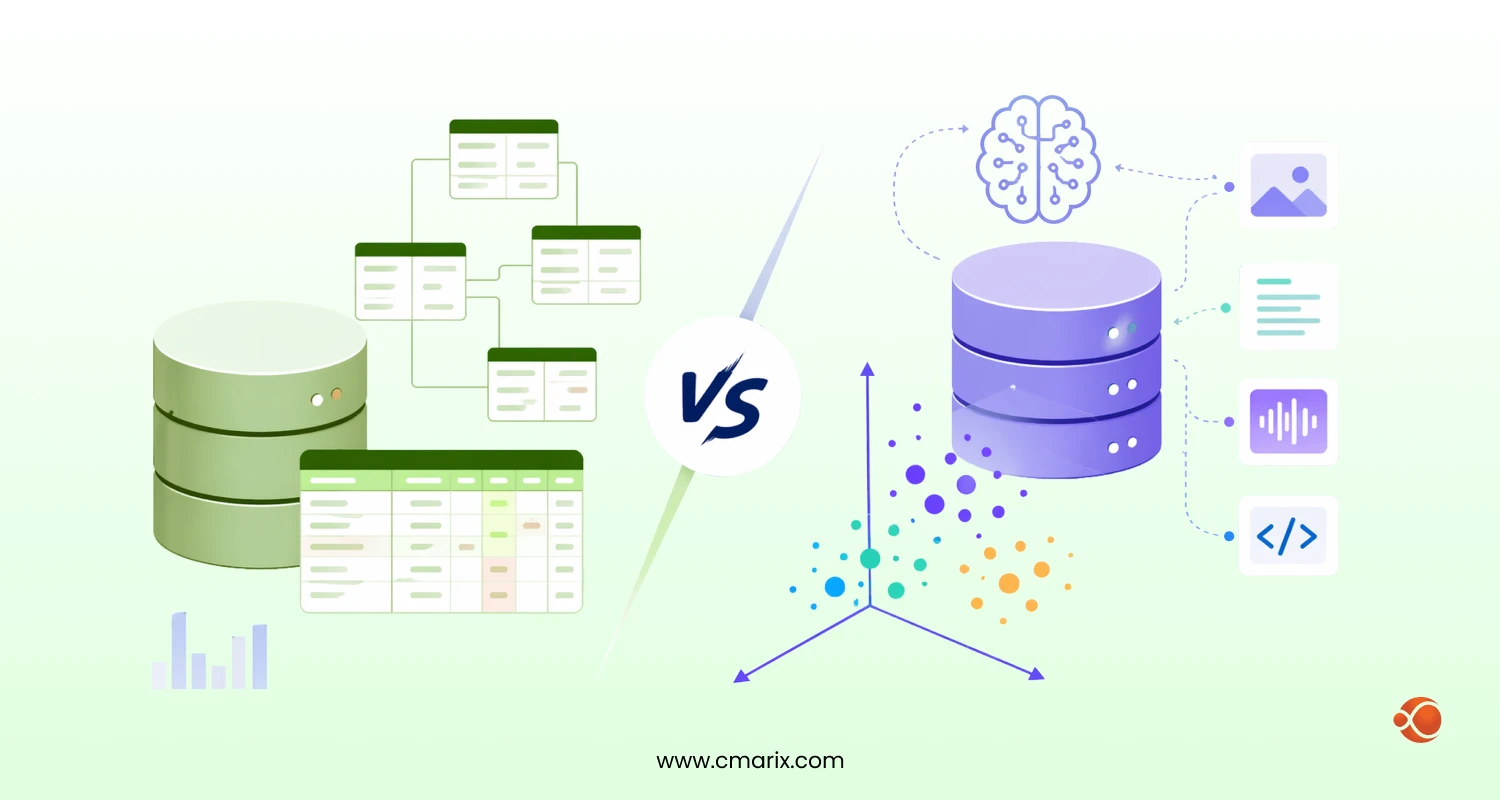

Operational and Systemic Risks

Model Collapse

As AI-generated content saturates the internet, models retrained on that content start to degrade. The feedback loop produces outputs that are less accurate, less diverse, and less reliable over time.

Why it’s a risk:

- Organizations that depend on web-scraped training data will increasingly ingest AI-generated content without knowing it.

- Model collapse is slow and hard to detect until output quality has already deteriorated significantly.

- There are no industry-wide standards yet for flagging or filtering synthetic content from training pipelines.

Emergent Behavior in Advanced AI Systems

Sometimes larger models develop capabilities their creators didn’t anticipate or test for. These behaviors are hard to predict before they appear and hard to contain once they do.

Why it’s a risk:

- Emergent behaviors can include unexpected generalization or deceptive outputs that undermine safety assumptions.

- Standard pre-deployment testing doesn’t cover capabilities that don’t exist at smaller model scales.

- Once a model is deployed at scale, rolling back to address emergent issues is operationally disruptive and costly.

Human Dependency Risk

As AI gets more accurate, human reviewers tend to stop paying close attention. Human-in-the-loop (HITL) governance becomes a checkbox instead of genuine control, and the oversight meant to catch errors quietly disappears.

Why it’s a risk:

- Automation bias causes humans to defer to AI outputs even when something looks wrong.

- As teams shrink review capacity, assuming AI handles it, the organization loses the skills to catch AI errors independently.

- Compliance frameworks that require human review often don’t specify what meaningful review actually looks like.

AI Supply Chain Vulnerabilities

Most AI systems depend on third-party models, datasets, libraries, and APIs. A vulnerability anywhere in that chain propagates to every product built on top of it, often without the deploying organization knowing. This is especially true for cloud-dependent stacks, where secure enterprise Azure AI integration becomes a direct line of defense against third-party risk at the infrastructure level.

Why it’s a risk:

- Organizations have limited visibility into the security practices of their AI component vendors.

- A compromised open-source model or dataset can affect thousands of downstream deployments simultaneously.

- Standard software supply chain tools and processes don’t translate directly to AI model provenance and integrity checks.
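A basic integrity check is still worth having even if it does not cover full provenance. The sketch below pins a SHA-256 digest for a model file and refuses anything that does not match. The file name, the placeholder bytes, and the `verify_artifact` helper are assumptions for illustration; a real pinned digest would come from a trusted release record, not be computed from the file being checked.

```python
import hashlib
import hmac
import os
import tempfile

def sha256_of_file(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large artifacts fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_artifact(path, pinned_sha256):
    """Return True only if the file matches the pinned digest."""
    return hmac.compare_digest(sha256_of_file(path), pinned_sha256.lower())

# Demo with a throwaway file standing in for downloaded model weights.
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "model.bin")
    with open(path, "wb") as fh:
        fh.write(b"placeholder weights")
    pinned = hashlib.sha256(b"placeholder weights").hexdigest()
    ok = verify_artifact(path, pinned)
    mismatch = verify_artifact(path, "0" * 64)

print(ok, mismatch)
```

A matching digest only proves the file is the one that was pinned. It says nothing about whether that original was trustworthy, which is the harder provenance question.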

Infrastructure and Environmental Risks

Energy Consumption and Carbon Footprint

Training large foundation models can consume as much energy as several transatlantic flights. The Stanford HAI 2025 AI Index documents a sharp rise in reported AI incidents alongside growing environmental costs, making energy accounting as important as compute budgeting.

Why it’s a risk:

- Energy-intensive AI workloads are straining power grids in regions with high data center density.

- Carbon disclosure requirements are beginning to include AI compute, creating reporting obligations.

- Organizations with sustainability commitments face growing tension between AI ambitions and emissions targets.
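Energy accounting can start as back-of-the-envelope arithmetic. The sketch below multiplies hardware power by runtime, scales by a data center overhead factor (PUE), and converts to emissions with a grid carbon intensity. Every input value is a hypothetical placeholder, not a measurement of any real training run.

```python
def training_footprint(num_gpus, avg_kw_per_gpu, hours, pue, grid_kg_co2_per_kwh):
    """Estimate facility energy (kWh) and emissions (kg CO2) for one run."""
    it_energy_kwh = num_gpus * avg_kw_per_gpu * hours
    # PUE scales IT energy up to include cooling and other facility overhead.
    facility_energy_kwh = it_energy_kwh * pue
    emissions_kg = facility_energy_kwh * grid_kg_co2_per_kwh
    return facility_energy_kwh, emissions_kg

# Hypothetical inputs: 64 GPUs at 0.4 kW each for 100 hours,
# a PUE of 1.2, and a grid intensity of 0.4 kg CO2 per kWh.
energy_kwh, co2_kg = training_footprint(64, 0.4, 100, 1.2, 0.4)
print(f"{energy_kwh:.0f} kWh, {co2_kg:.0f} kg CO2")
```

With these placeholder inputs the estimate comes to about 3,072 kWh and roughly 1,229 kg of CO2. Real figures depend on measured power draw and the local grid mix.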

Water Usage in AI Data Centers

Cooling AI data centers requires substantial water consumption. In water-stressed regions, this is increasingly a regulatory and community relations issue, not just an operational cost.

Why it’s a risk:

- Water usage disclosures are becoming a regulatory requirement in several US states and EU jurisdictions.

- Some large data centers can consume millions of gallons of water per day, competing with local municipal and agricultural needs.

- Water shortages can directly threaten data center operations in drought-prone regions, putting business continuity at risk.

The Gap Between Green AI Goals and Reality

Major AI companies have made public sustainability commitments. Most are struggling to meet them as demand for computing continues to grow faster than the shift to renewable energy sources.

Why it’s a risk:

- Clean energy supply can’t be built fast enough to match the pace of AI infrastructure expansion.

- Greenwashing in AI energy claims is drawing scrutiny from regulators and ESG-focused investors.

- Carbon offset strategies mask rather than reduce the actual emissions from AI workloads.

How Organizations and Governments Can Mitigate AI Risks in 2026

Implementing Responsible AI Governance

Governance starts with accountability structures: who owns AI risk, how decisions get escalated, and what happens when something goes wrong. Evaluating AI use-case suitability before deployment is one of the most efficient ways to catch risk early. Strong enterprise data privacy services should be part of that foundation, not a separate workstream. AI risk assessment consultants help organizations map their AI exposure before it becomes a regulatory or reputational problem.

Strengthening Data Quality and Security Controls

Well-governed data is the foundation of reliable AI. That means access controls, regular audits, and provenance tracking. Understanding the full AI system cost breakdown, including data infrastructure, helps businesses focus on where controls matter the most. Those building for regulated industries should look at dedicated, secure AI application development practices from the ground up.

Improving Transparency and Explainability

Where model decisions carry real consequences, explainability is not a nice-to-have. It’s how you audit for bias, comply with regulations, and maintain user trust. Development teams should invest in interpretability tooling and documentation alongside model development. That is exactly why secure AI development for privacy-first solutions treats transparency as an architectural requirement rather than an afterthought.

Continuous Monitoring and AI Auditing

Models drift and data distributions shift. What performed well in testing may behave differently in production six months later. Teams that hire Python developers for secure ML pipelines build monitoring in from the start rather than adding it after incidents occur. Dedicated QA testers for AI models and continuous monitoring pipelines are the operational answer to a problem that doesn’t go away after launch.
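One widely used way to put a number on distribution shift is the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it looks in production. The sketch below is a minimal standard-library version, assuming equal-width bins taken from the baseline sample. The toy data and the 0.25 alert level are illustrative conventions, not values from this article.

```python
import math

def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a new sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical feature values: a uniform baseline and a shifted sample.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

stable_score = psi(baseline, baseline)
drift_score = psi(baseline, shifted)
print(f"stable: {stable_score:.3f}, shifted: {drift_score:.3f}")
```

A common rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating, though the right threshold depends on the feature and the cost of a missed alert.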

Promoting Human Oversight in AI Decision-Making

Meaningful human oversight means humans who have the context, authority, and time to intervene, not a checkbox. Organizations that hire expert AI developers for secure model deployment design override workflows into the architecture from day one, not as an afterthought.
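In practice, an override workflow often begins as a routing rule that decides which outputs a person must see before anything happens. The sketch below shows one simple form of that rule. The `route` function, the 0.9 threshold, and the status strings are hypothetical choices for illustration.

```python
def route(prediction, confidence, high_stakes, threshold=0.9):
    """Send high-stakes or low-confidence outputs to a human reviewer."""
    if high_stakes or confidence < threshold:
        # The model's answer is kept as a suggestion, not a decision.
        return {"status": "needs_human_review", "decision": None, "suggested": prediction}
    return {"status": "auto_approved", "decision": prediction, "suggested": prediction}

routine = route("approve", confidence=0.97, high_stakes=False)
uncertain = route("approve", confidence=0.62, high_stakes=False)
critical = route("deny_claim", confidence=0.99, high_stakes=True)
print(routine["status"], uncertain["status"], critical["status"])
```

Note that the high-stakes case goes to review even at 0.99 confidence. Confidence scores alone are a weak trigger, because a model can be confident and wrong.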

The Future of AI Risk Management

AI risk isn’t a problem you solve once. The patterns are shifting, and so is what good risk management needs to look like. Here’s a side-by-side view of where things are heading and what organizations should be doing about it.

| Category | Explanation |
| --- | --- |
| Agentic AI Risks | AI agents that take actions (not just predictions) can create failures that are harder to stop or reverse. |
| Human Control | Every agentic workflow should include a clear human override mechanism in the system architecture. |
| Expanding Threat Surface | Risks such as prompt injection, data poisoning, and model extraction remain unresolved as AI integrates into critical systems. |
| Security Discipline | Organizations should treat AI security as its own discipline with dedicated red-teaming and adversarial testing pipelines. |
| Regulatory Landscape | Laws such as the EU AI Act signal the start of global regulatory frameworks with different timelines and penalties. |
| Compliance Strategy | Regulatory requirements should be mapped during model design rather than added later, which increases cost and complexity. |
| Model Behavior Risks | Emergent capabilities, synthetic data collapse, and model drift can cause behavior changes over time. |
| Post-Deployment Monitoring | AI systems should be monitored after deployment to detect drift, anomalous outputs, and data distribution shifts. |
| Environmental Impact | Energy and water consumption from large AI systems are attracting regulatory and investor scrutiny. |
| Compute Planning | Organizations should account for energy and water usage and align model size and inference strategies with actual demand. |

Final Words

Artificial intelligence is transformative. That’s not hype; it’s showing up in real revenue numbers and real operational changes in almost every industry. But the responsibility grows in proportion to the transformation.

The businesses taking AI security risks seriously now, by developing governance structures, investing in transparent and auditable models, and deploying securely, are not just managing downside. They’re building the foundation that lets them move faster and with more confidence as the technology develops. If you’re ready to move in that direction, exploring generative AI security and risk mitigation services is a strong place to start.

FAQs on Emerging AI Risk and Challenges

What are the biggest AI security threats predicted for 2026–2030?

Agentic AI attacks, quantum-enabled decryption, and AI-generated deepfake fraud top the threat list. Alongside those, regulatory non-compliance and shadow AI deployments are becoming serious exposure points for enterprises of every size.

How will “Harvest Now, Decrypt Later” impact data privacy by 2030?

Adversaries are already collecting encrypted data today with the intent to decrypt it once quantum computing matures. Any sensitive data transmitted before post-quantum encryption standards are in place is potentially at risk by 2030.

What is the role of the EU AI Act in managing risks through 2030?

The EU AI Act sets binding requirements for risk classification, transparency, and human oversight across AI systems. Through 2030, it is likely to serve as a baseline that other jurisdictions benchmark their own AI regulations against.

Can AI-generated deepfakes disrupt financial markets in the next five years?

Yes, and it’s already starting. Fabricated executive announcements and fake earnings calls can move stock prices before platforms detect the fraud. As deepfake quality improves, synthetic media forensics and real-time verification will become standard practice in financial communications.

What is “Shadow AI” and why is it a growing corporate risk?

Shadow AI refers to AI tools that employees use without IT or legal approval, often feeding sensitive company data into third-party models. It creates data leakage, liability, and compliance exposure that most organizations have no visibility into until something goes wrong.

Why is “Human-in-the-Loop” (HITL) essential for AI safety?

AI models can be confident and wrong. HITL keeps a qualified person in the decision chain for high-stakes outputs, providing the override capability that catches errors before they cause real harm in healthcare, legal, or financial contexts.