Quick Overview: AI investment is accelerating, yet measurable ROI often lags due to weak governance and cost modeling. Backed by industry research from Stanford HAI and MIT, this CFO-focused framework outlines structured evaluation gates to ensure AI delivers accountable, risk-adjusted financial returns.

Artificial intelligence has emerged as a board-level priority, transforming the investment, operational, and competitive landscape for enterprises.

Global spend on AI systems is predicted to exceed $2 trillion by 2026, yet many AI systems struggle to deliver tangible ROI. By 2025, 85% of AI projects will be biased, owing to bias in the data, algorithms, and/or the people implementing them.

For a CFO with a budget line item totaling millions of dollars in AI investment, this gap is not a misunderstanding; it is a governance crisis waiting to happen. The technology is moving rapidly, but bridging the gap requires an enterprise-wide guide that treats AI as a financial asset, not simply a set of code.

The challenge is no longer whether to invest in AI, but how to evaluate, structure, and measure that investment with the same rigor applied to any capital allocation decision.

This framework is built for exactly that challenge. Drawing on patterns observed across enterprise AI deployments and the strategic expertise CMARIX brings to AI consulting and development, this guide provides CFOs and finance leaders with the tools to ask the right questions before, during, and after AI investments.

In This Blog, You’ll Get Answers To:

- Why do enterprise AI projects not produce an ROI despite having functioning technology?

- What is the Total Cost of Owning AI, and what is included in that number?

- How will CFOs review and approve AI investments by 2026?

- What metrics should you use to evaluate before implementing new AI technologies?

- What are the differences in calculating an ROI for Generative AI, Chatbots, and Agent-based AI?

- Is it more cost-effective to outsource the development of new AI technologies rather than to develop them internally?

- What are common errors made by CFOs with respect to managing the ROI of AI investments?

- What methods does CMARIX use to structure its AI investment opportunities in order to achieve measurable returns?

💡 Not sure where your AI program stands financially?

CMARIX’s AI consulting team offers a no-obligation business analysis to identify gaps in your current AI investment structure, before they become budget overruns.

Why Does Enterprise AI Spending Underperform Despite Record Investment?

Despite the high level of capital inflow into the sector, the ROI of AI is difficult for most organizations to measure.

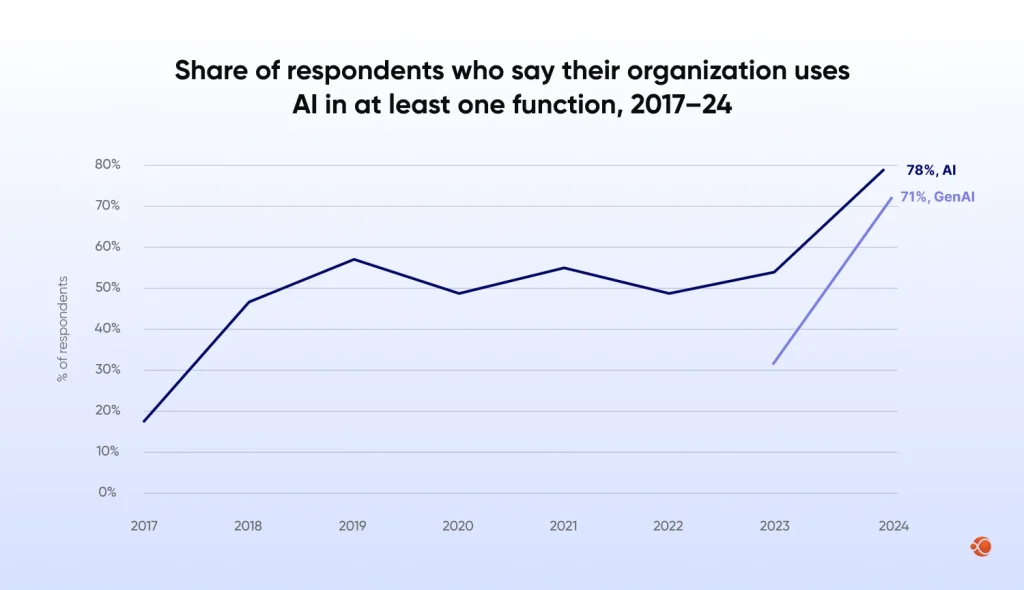

The 2025 report by the Stanford Institute for Human-Centered Artificial Intelligence found that 78% of organizations reported using AI in 2024, up from 55% the previous year. However, the report found that the overall ROI of AI use is low. The issue is not the technology but the framework.

According to recent findings from the MIT 2025 AI Report, a staggering 95% of generative AI pilots fail to deliver tangible P&L results. This ‘GenAI Divide’ separates these experiments from the 5% of leaders who treat AI as an integrated workflow rather than a static project.

The Five Root Causes of AI ROI Failure

To establish a strong basis for a new AI ROI evaluation framework, it is important to first understand the reasons why investments in artificial intelligence fail to deliver results. Five root causes of ROI failure in enterprise artificial intelligence programs are:

- Strategy-Technology Mismatch: AI is used as a technology proof of concept rather than as a solution to a defined business challenge. The end result is a stunning tech demo with no operational value.

- Lack of Baseline Metrics: Without a defined performance metric prior to deployment, it becomes impossible to calculate ROI. It is impossible to measure progress from nowhere.

- Infrastructure Suboptimality: AI models running on outdated infrastructure simply do not scale. The expense of upgrading architecture mid-program often outweighs any potential ROI.

- Change Management Ignored: Even well-built models will not deliver ROI without user acceptance and utilization. User acceptance of a model is an economic consideration, not just a human resources one.

- Scope Creep Without Value Gates: Without value gates that define when to stop investing, AI projects keep growing, and the sunk cost fallacy keeps money flowing after it should have stopped.

CMARIX developers follow a systematic process, designed to close all five gaps before a single line of code is written.

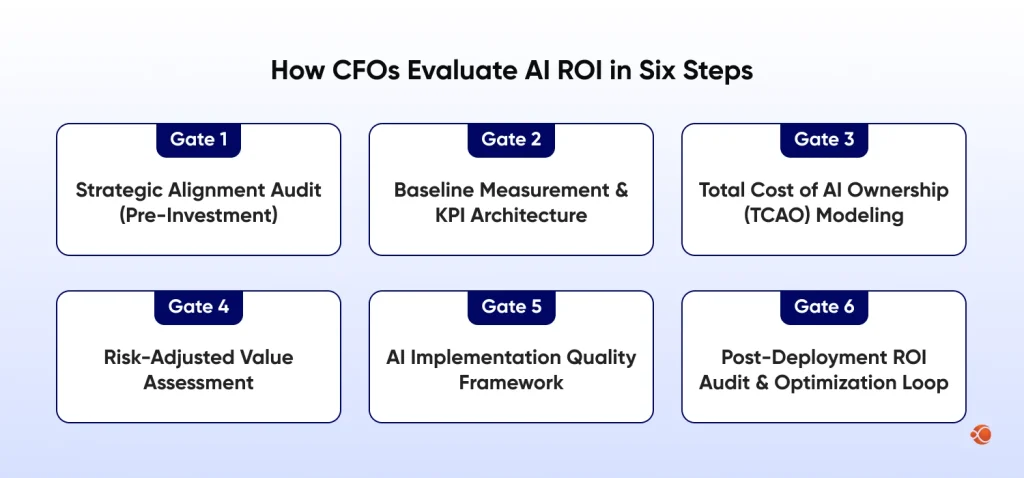

The CFO’s AI ROI Evaluation Framework: A Six-Gate Model

Evaluating the ROI of enterprise AI investment requires a systematic, multi-step process. The six-gate model below gives the CFO a decision point at every step of the AI investment process, from pre-approval to post-implementation optimization.

Gate 1: Strategic Alignment Audit (Pre-Investment)

The first question, before any money is invested, is: “Does this AI project have a direct correlation to a listed item on our corporate strategic plan?” A strategic alignment audit would seek to answer the following questions:

- Does this AI initiative address a named item on the corporate strategic plan?

- Can we articulate the specific business process, cost center, or revenue stream it will affect?

- Has the sponsoring executive committed to owning the business outcome, not just the technology delivery?

- What happens if we do nothing? What is the competitive cost of inaction?

Strategic alignment is not a soft criterion. Misaligned AI projects consume 30-40% more budget than aligned ones, according to analysis from Boston Consulting Group, because they require constant re-scoping as business stakeholders reject deliverables that do not connect to their priorities.

For enterprises exploring where to begin, CMARIX’s AI consulting services include a structured workshop to build a strategic roadmap and competitive alignment, and to map AI capabilities to business value before any development commitment is made.

Gate 2: Baseline Measurement & KPI Architecture

The single most common reason CFOs cannot evaluate AI ROI after the fact is that no one measured the baseline before deployment. AI ROI evaluation requires a clearly defined “Before State” across every metric the initiative is expected to move.

A robust KPI architecture for AI investment should include three tiers:

| KPI Category | Example Metrics |
| --- | --- |
| Operational KPIs | Process cycle time, error rate, throughput, headcount productivity |
| Financial KPIs | Cost per transaction, revenue per customer segment, margin per unit, support cost per ticket |
| Strategic KPIs | Customer satisfaction scores, time to market, competitive win rate, talent retention in AI-impacted roles |

All metrics need to be measured using a standardized methodology agreed upon by all stakeholders before launch. Adding measurement frameworks after a successful program launch can create attribution challenges.

Gate 3: Total Cost of AI Ownership (TCAO) Modeling

Evaluating AI investment ROI in enterprises requires complete cost visibility. Most AI business cases underestimate total cost by 40-60% because they exclude categories that only become visible post-deployment.

A comprehensive TCAO model for 2026 must include:

- Development and Integration Expenses: Developing the model, integrating it with essential APIs and infrastructure, building reliable data pipelines, and establishing the security and compliance standards the project needs to function consistently.

- Infrastructure Costs: AI doesn’t run on thin air. It requires cloud computing power, GPU resources for training and inference, secure storage, monitoring systems, and often the overlooked expense of preparing, labeling, and annotating data.

- Operational Costs: Once deployed, the work doesn’t stop. Your AI models need heavy maintenance, retraining with fresher data to maintain relevance, drift monitoring to maintain accuracy, and, most of all, proper human oversight to meet regulatory expectations and ensure ethical AI responses.

- Organizational Costs: AI adoption impacts people and processes. Teams need to be trained, many workflows need to be optimized for AI and redesigned, and there’s usually a temporary dip in productivity as the organization adjusts to new systems.

- Governance & Compliance Costs: As regulations become more stringent, organizations must spend on tools to support audit trails, explainability, and risk mitigation.
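The five cost categories above can be rolled up into a single TCAO figure. The following is a minimal sketch, not a CMARIX tool: the category keys and every dollar amount are hypothetical placeholders, and the 25% contingency reserve is taken from the pre-approval checklist later in this guide.

```python
# The five TCAO categories named in the list above (key names are illustrative).
TCAO_CATEGORIES = (
    "development_integration",
    "infrastructure",
    "operations",
    "organizational",
    "governance_compliance",
)

CONTINGENCY_RATE = 0.25  # minimum reserve against base TCAO, per the checklist


def total_tcao(costs: dict) -> float:
    """Sum all five categories, refusing to proceed if any is missing."""
    missing = [c for c in TCAO_CATEGORIES if c not in costs]
    if missing:
        raise ValueError(f"TCAO model is incomplete, missing: {missing}")
    return sum(costs[c] for c in TCAO_CATEGORIES)


# Hypothetical figures in USD, for illustration only.
example_costs = {
    "development_integration": 400_000,
    "infrastructure": 150_000,
    "operations": 120_000,
    "organizational": 80_000,
    "governance_compliance": 50_000,
}

base_tcao = total_tcao(example_costs)
contingency = CONTINGENCY_RATE * base_tcao
print(f"Base TCAO: ${base_tcao:,.0f}")
print(f"Contingency reserve: ${contingency:,.0f}")
print(f"Budget to approve: ${base_tcao + contingency:,.0f}")
```

Raising an error on a missing category is a deliberate choice here: the point of the model is that no cost category gets left out of the business case by omission.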

For organizations facing build-versus-buy or build-versus-partner decisions, effective budgeting for AI platform development is a core factor for making such strategic C-level decisions. CMARIX provides clear cost estimates for all TCAO categories prior to contract execution, preventing unexpected costs from arising mid-program.


Gate 4: Risk-Adjusted Value Assessment

AI ROI framework analysis must account for risk, not just expected-case returns. A risk-adjusted value assessment applies probability weights to different outcome scenarios:

| Scenario | Probability | Financial Outcome | Probability-Weighted Value |
| --- | --- | --- | --- |
| Full Deployment Success | 25% | +$4.2M | +$1.05M |
| Partial Deployment (≈60% impact) | 40% | +$1.8M | +$0.72M |
| Limited Adoption (≈30% impact) | 25% | –$0.2M | –$0.05M |
| Program Failure | 10% | –$1.4M | –$0.14M |
| Risk-Adjusted Expected Value | n/a | n/a | +$1.58M |

Note: Illustrative model: actual values vary by initiative scope, sector, and organizational readiness.

The risk-adjusted model also requires an honest discussion of the probability of adoption, which is often treated as an assumption rather than an estimate in most AI business cases. If the risk-adjusted estimate is negative or low, the project must be reworked before approval.
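The arithmetic behind the illustrative table can be reproduced in a few lines. This is a sketch under the table's own assumptions: the scenario names, probabilities, and outcomes are copied from the table, while the `Scenario` class and function name are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    probability: float   # share of outcomes, between 0 and 1
    outcome_musd: float  # financial outcome in millions of USD


# Values taken from the illustrative table above.
SCENARIOS = [
    Scenario("Full Deployment Success", 0.25, 4.2),
    Scenario("Partial Deployment", 0.40, 1.8),
    Scenario("Limited Adoption", 0.25, -0.2),
    Scenario("Program Failure", 0.10, -1.4),
]


def risk_adjusted_value(scenarios) -> float:
    """Probability-weighted sum of outcomes; probabilities must total 100%."""
    total_probability = sum(s.probability for s in scenarios)
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError(f"Probabilities sum to {total_probability}, not 1.0")
    return sum(s.probability * s.outcome_musd for s in scenarios)


expected_value = risk_adjusted_value(SCENARIOS)
print(f"Risk-adjusted expected value: ${expected_value:.2f}M")
```

Changing the adoption-related probabilities in `SCENARIOS` is a quick way to see how sensitive the expected value is to the adoption estimate.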

For a risk-adjusted ROI model tailored to the initiative, sector, and readiness level, CMARIX’s AI consulting team can develop it during an initial engagement.

Gate 5: AI Implementation Quality Framework

The ROI of AI is directly related to the quality of its implementation. This is where the science of designing an AI system for enterprise outcomes, not just technical success, differentiates high ROI initiatives from failed experiments.

An implementation quality framework evaluates five dimensions during and after build:

- Model Performance vs. Business Performance: A 98% accurate model that answers the wrong question will provide 0% business ROI. All technical metrics must correlate to a business outcome metric.

- Integration Depth: The value of AI multiplies when it is deeply embedded in existing processes. Shallow integration approaches (“AI as a dashboard”) will always perform worse than deep integration approaches (“AI as a decision engine within the process”).

- Data Quality Architecture: Garbage in, Garbage out. If your models are built on low-quality, editorialized, or old data, they will fail. A good data governance architecture is just as important to success as the model.

- Explainability & Trust: In regulated industries, AI outputs that cannot be explained to auditors, regulators, or end users cannot be deployed at scale. Explainability is a financial requirement, not a technical nicety. For instance, in highly regulated sectors, a successful healthcare AI deployment depends entirely on the model’s ability to provide explainable audit trails for clinical safety.

- Scalability Architecture: An AI system that works for 1,000 transactions but breaks at 1,000,000 has negative ROI at scale. Scalability must be designed in from the start.

Gate 6: Post-Deployment ROI Audit & Optimization Loop

Calculating AI ROI is a continual exercise that extends well past deployment. A post-deployment audit validates whether the business case was achieved and provides data for ongoing optimization.

A structured post-deployment ROI audit should evaluate the following metrics at 30-, 90-, and 180-day intervals.

| Metric | Ideal Outcome (Green Signal) | Red Flag (Needs Attention) |
| --- | --- | --- |
| Adoption Rate vs. Target | ≥60% adoption achieved within first 90 days, indicating strong user buy-in | <60% adoption by Day 90, signaling resistance, poor UX, or weak change management |
| KPI Movement vs. Baseline | Core financial and operational KPIs show measurable positive movement from baseline | KPIs stagnate or decline, suggesting limited real impact or misaligned implementation |
| Unplanned Cost Discovery (TCAO) | No new Total Cost of AI Ownership categories uncovered; ROI projections remain stable | New cost layers surface (infrastructure, retraining, compliance), forcing downward ROI revision |
| Model Drift Assessment | Model accuracy remains consistent or improves with defined retraining cadence | Performance drops as production data shifts; no structured retraining or monitoring framework |
| Optimization Opportunity Identification | Additional high-value use cases identified, expanding ROI beyond original scope | No adjacent opportunities discovered; value realization capped at initial deployment scope |
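The first two rows of the audit table lend themselves to a simple automated check. The sketch below is an assumption-laden illustration: the 60% threshold comes from the table, but the function names, KPI names, and sample readings are hypothetical.

```python
ADOPTION_TARGET = 0.60  # threshold from the audit table: >=60% adoption by Day 90


def adoption_signal(adoption_rate: float) -> str:
    """Return 'green' when Day-90 adoption meets the target, else 'red'."""
    return "green" if adoption_rate >= ADOPTION_TARGET else "red"


def kpi_signal(baseline: float, current: float, higher_is_better: bool) -> str:
    """Return 'green' only when the KPI moved in the desired direction.

    A KPI that merely matches its baseline counts as stagnation, i.e. 'red'.
    """
    improved = current > baseline if higher_is_better else current < baseline
    return "green" if improved else "red"


# Hypothetical Day-90 readings for one initiative.
day90 = {
    "adoption": adoption_signal(0.52),
    "support_cost_per_ticket": kpi_signal(12.0, 9.5, higher_is_better=False),
    "throughput": kpi_signal(400.0, 400.0, higher_is_better=True),
}
print(day90)
```

The `kpi_signal` check only works if the Gate 2 baseline was recorded before launch, which is the practical reason the baseline step cannot be skipped.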

How Do You Calculate AI ROI by Initiative Type?

Different types of AI initiatives have fundamentally different ROI curves, risk profiles, and measurement approaches. The CFO AI ROI evaluation framework should be tailored to the specific initiative type.

Generative AI ROI

The ROI of generative AI is often overestimated in the short term and underestimated in the long term. The most common generative AI use cases (content generation, code assistance, document processing, and knowledge management) have clear productivity ROI profiles when measured correctly.

The key measurement discipline for generative AI is to distinguish between “time saved” and “value created.” Time saved can only be converted into financial ROI if it leads to a reduction in headcount, shifts the workforce to more valuable tasks, or speeds up time-to-market in a way that drives measurable revenue growth.

Generative AI ROI Formula

- GenAI Net Value = (Hours Saved × Loaded Labor Rate + Revenue Impact of Redeployed Capacity + Error Reduction Savings) − TCAO, and GenAI ROI (%) = GenAI Net Value ÷ TCAO × 100

- Example: A Fortune 500 legal department deploying contract review AI saved 4,200 attorney hours annually at a $350/hour loaded rate, for $1.47M in gross savings. Against a $380K TCAO, that is $1.09M in net value, or 287% ROI in Year 1.
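The legal department example can be checked with a short calculation. This is a minimal sketch: the function and parameter names are hypothetical, and the example sets the redeployed-capacity and error-reduction terms to zero because the example above only quantifies hours saved.

```python
def genai_net_value(
    hours_saved: float,
    loaded_rate: float,
    redeployed_revenue: float = 0.0,
    error_savings: float = 0.0,
    tcao: float = 0.0,
) -> float:
    """Gross benefit (labor + redeployed capacity + error reduction) minus TCAO."""
    gross = hours_saved * loaded_rate + redeployed_revenue + error_savings
    return gross - tcao


def roi_percent(net_value: float, tcao: float) -> float:
    """Express net value as a percentage of total cost of ownership."""
    if tcao <= 0:
        raise ValueError("TCAO must be positive to compute ROI")
    return 100.0 * net_value / tcao


# Figures from the contract review example above.
tcao = 380_000
net = genai_net_value(hours_saved=4_200, loaded_rate=350, tcao=tcao)
roi = roi_percent(net, tcao)
print(f"Net value: ${net:,.0f}")
print(f"Year 1 ROI: {roi:.0f}%")
```

Note that the hours-saved term only counts as financial ROI if the saved time is actually converted into headcount savings, redeployment, or faster time-to-market, as described above.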

AI Chatbot ROI & Conversational AI ROI

A correctly implemented custom intelligent chatbot solution can deliver a strong return on investment for a mid-sized company. Payback typically occurs within 8-14 months, and ROI in Years 2-3 can exceed 300% as the model learns from historical data and deflection rates rise.

The ROI model for conversational AI deployments should include:

- Contact Deflection Value: (Number of deflected contacts × Average cost per human contact) = Gross Savings

- Resolution Quality Impact: First Contact Resolution improvement × Customer Lifetime Value impact

- 24/7 Availability Premium: Revenue captured from interactions outside business hours (particularly significant for e-commerce and financial services)

- Agent Augmentation Value: Even non-deflected contacts handled with the aid of AI have shown 20-35% lower handle times; this partial ROI is often not factored into the business case
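The contact deflection component is the easiest of these to quantify. The sketch below models only that component and a simple payback period; every input is a hypothetical placeholder, and the other three components (resolution quality, availability premium, agent augmentation) are deliberately left out, so it understates the full business case.

```python
def monthly_deflection_savings(deflected_contacts: int, cost_per_human_contact: float) -> float:
    """Contact Deflection Value: deflected contacts x average cost per human contact."""
    return deflected_contacts * cost_per_human_contact


def payback_months(upfront_cost: float, monthly_net_savings: float) -> float:
    """Months needed for cumulative net savings to cover the upfront cost."""
    if monthly_net_savings <= 0:
        raise ValueError("No payback: monthly net savings must be positive")
    return upfront_cost / monthly_net_savings


# Hypothetical inputs for a mid-sized support operation.
gross_savings = monthly_deflection_savings(deflected_contacts=6_000, cost_per_human_contact=5.0)
monthly_run_cost = 8_000    # hosting, monitoring, retraining (assumed)
upfront_cost = 220_000      # build and integration (assumed)

net_savings = gross_savings - monthly_run_cost
months = payback_months(upfront_cost, net_savings)
print(f"Monthly net savings: ${net_savings:,.0f}")
print(f"Payback period: {months:.1f} months")
```

Because the inputs are invented, the resulting payback figure illustrates the method only; it is not evidence for the payback range quoted above.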


Agentic AI ROI

Agentic AI refers to autonomous AI systems that carry out multi-step tasks over extended periods before a human needs to provide input. This guide expects agentic AI to offer the highest ROI potential of the initiative types discussed here in 2026.

Traditional point-solution metrics cannot capture the ROI of agentic AI, because its value comes from eliminating a process rather than improving one. Models that only measure labor replaced also fail to identify:

- Process Velocity Gains: Agentic systems run 24/7, so cycle times are considerably shorter than in any human-staffed workflow. Especially in FinTech application development, where supply chain finance, automated reconciliation, and clinical reviews are involved, the speed of decision-making is the primary driver of ROI.

- Error Cascade Prevention: In complex multi-step processes, agentic AI eliminates the downstream cost amplification of human errors, a value that is invisible in simple cost-per-task models.

- Scalability Without Linear Headcount Growth: The marginal cost of an additional agentic AI transaction approaches zero. For high-volume operations, this creates a fundamentally different cost curve than human-staffed processes.

AI/ML Outsourcing ROI

The ROI of AI/ML outsourcing differs from in-house ROI, and CFOs frequently miscalculate it by leaving the true cost of acquiring and retaining internal AI talent out of the comparison model.

A thorough make-versus-buy analysis for AI in 2026 must account for the full cost of in-house development: a fully loaded senior ML engineer ($280K to $420K per year in competitive markets), data scientists, MLOps infrastructure, recruiting costs for AI staff (typically 30-45% of first-year salary), and 6-18 months of ramp-up time.

When you factor in the true internal cost of building and retaining an AI team, the outsourcing ROI becomes hard to ignore. This is especially true for AI-powered MVP initiatives. Instead of investing 12 to 18 months assembling talent, aligning architecture, and stabilizing delivery, CMARIX helps organizations move from prototype to shipping a full-scale AI system in just 3 to 6 months.

AI Software Development ROI Analysis

The ROI of AI software development differs significantly from that of traditional software development, which focuses on feature development and delivery. CFOs who apply traditional cost-reduction models to an AI project will therefore misjudge its returns.

A realistic ROI evaluation for AI software development in 2026 has to account for the full lifecycle investment, not just the build phase.

That includes:

- Scalable AI architectures that enable future scalability for sustainable growth.

- Reliable data pipelines that enable the development of quality data.

- Model training, validation, and fine-tuning for strong performance.

- MLOps infrastructure that enables deployment and monitoring.

- Security, compliance, and governance controls that stand up to audit.

- Ongoing retraining and optimization to avoid model performance degradation.

Here’s the thing most teams miss: AI isn’t a one-time release. It’s a living system.

Traditional software degrades slowly, but AI models need far more frequent iteration as data and conditions change. Reaching a target accuracy does not immediately translate into business value. ROI comes from redesigning workflows around the intelligence layer and getting people to adopt it.

When organizations underestimate this compounding investment curve, ROI timelines extend beyond projections.

6 CFO Mistakes That Destroy Chances of Optimal AI ROI

Beyond the framework gaps, specific CFO behavior patterns consistently undermine AI ROI. Recognizing these patterns is as important as implementing the framework.

Mistake 1: Approving AI Without Funding Change Management

AI projects most often fail because people do not use the systems properly, not because the systems are poor. When companies cut training and change-program budgets, adoption falls, and ROI falls with it. Technology can enhance systems, but financial success also requires behavior change.

Mistake 2: Measuring Activity Instead of Impact

Measuring the number of tasks processed indicates activity. It doesn’t indicate value. Actual ROI is generated by increased revenue, decreased expenses, mitigated risk, or accelerated processes. If budget analysis is solely based on usage metrics, the actual business impact may not be captured.

Mistake 3: Treating ROI as a One-Time Calculation

AI results change over time. Data shifts. Markets change. Performance can improve or decline. When ROI is calculated only once at the beginning, future performance issues may go unnoticed. Regular financial reviews are needed to protect long-term value.

Mistake 4: Ignoring High Enterprise Failure Rates

Business cases often assume success, yet the MIT finding cited earlier is that 95% of generative AI pilots fail to deliver tangible P&L results. One recurring reason is that the AI model itself is rarely among the larger costs: most of the spend goes to data preparation, infrastructure, integration, and long-term governance. Avoiding overruns requires dedicated AI expertise that understands this cost profile. When these costs are not properly estimated, budgets are exceeded and ROI suffers.

Mistake 5: Letting Technology Drive the Investment Thesis

When AI investments are driven by technical capability rather than business outcomes, ROI becomes unclear. Model performance alone does not guarantee financial or operational impact. Every AI initiative should start with defined business metrics, not with the choice of technology.

Mistake 6: Underestimating the True Cost Structure

The AI model itself is a small expense. Data infrastructure, integration, monitoring, retraining, and governance are the major investments. Underestimating these will lead to cost overruns and reduced ROI.

The CMARIX Approach: Structuring AI Investment for Measurable Returns

CMARIX has designed its AI development and consulting approach specifically with the CFO AI ROI assessment framework outlined above in mind. Each project starts with a Business Value Architecture workshop to establish the financial foundation, KPI framework, and TCAO model before development.

This reflects the belief that the gap between AI capability and AI ROI is almost always a measurement and governance issue, not a technology issue. Even the best AI model in the world cannot demonstrate its value without a financial framework for measuring it.

Three principles define the CMARIX AI ROI methodology:

- Value Before Velocity: The speed of deployment is irrelevant if it does not deliver value. At CMARIX, we intentionally slow down the “pre-build” phase to ensure the measurement architecture is in place before the build phase begins.

- Transparent Cost Architecture: Every engagement includes a complete TCAO model that covers all future operational, retraining, and governance costs, preventing surprises for CFOs when budgeting after deployment.

- Outcome Accountability: CMARIX builds joint post-deployment ROI review points into its engagements, so both parties share responsibility for achieving the desired business results, not just the technical ones.

CFO Guide to AI Investment Evaluation: 2026 Edition

Use this AI ROI evaluation checklist as a pre-approval governance tool for any AI investment above your organization’s materiality threshold.

| Phase | Control Area | Required Action / Validation |
| --- | --- | --- |
| Strategic Alignment | Strategy Mapping | AI initiative maps to a named corporate strategic priority |
| | Executive Ownership | Business sponsor identified and accountable for outcome |
| | Cost of Inaction | Competitive cost of inaction formally documented |
| | Alternatives Review | Non-AI solutions evaluated and rejected with rationale |
| | Compliance Clearance | Legal, regulatory, and compliance review completed |
| Financial Architecture | Baseline Metrics | Pre-deployment baseline metrics documented with measurement sources |
| | TCAO Model | Full Total Cost of AI Ownership model completed (development, infrastructure, operations, governance) |
| | Risk-Adjusted Value | Probability-weighted value scenarios completed |
| | Payback Analysis | Payback period calculated (conservative, base, optimistic cases) |
| | Contingency Reserve | Minimum 25% budget reserve established against base TCAO |
| Implementation Governance | Quality Framework | Technical and business performance criteria defined |
| | Data Readiness | Data quality assessment completed with remediation plan |
| | Change Management | Budget and execution plan approved |
| | KPI Infrastructure | Post-deployment KPI tracking system operational |
| | ROI Audit Plan | 30/90/180-day ROI audit schedule confirmed |
| Post-Deployment Controls | Adoption Monitoring | Adoption rate dashboard active |
| | Model Monitoring | Automated drift detection with alert thresholds |
| | First ROI Review | Day 30 ROI review scheduled |
| | Optimization Process | Continuous improvement backlog established |
| | Annual Audit | Annual AI ROI audit added to governance calendar |

Conclusion: The CFO as AI ROI Architect

2026 will see a divide between companies that treat AI as an investment and those that merely follow the trend with no ROI to show for it. The CFO’s job is not to stop AI investment but to ensure the organization has the proper tools to hold it accountable and achieve a return.

The failure of AI ROI is rarely a failure of technology; it is a failure of governance and ROI measurement. Organizations fail not because models are ineffective but because success parameters, adoption, and financial measurement were never defined.

The solution isn’t to cut investment in AI, but to ensure stronger control, better analysis, and scenario-based ROI modeling. If your board is asking how to start an AI project with measurable returns, CMARIX works in collaboration with CFOs and CTOs to develop programs designed for profitability from day one.

AI ROI Abbreviations Used in This Guide

| Abbreviation | Full Form |
| --- | --- |
| Gen AI | Generative AI |
| TCAO | Total Cost of AI Ownership |
| MLOps | Machine Learning Operations |
| GPU | Graphics Processing Unit |
| API | Application Programming Interface |
| FCR | First Contact Resolution |
| CLV | Customer Lifetime Value |
| PoC | Proof of Concept |
| NLP | Natural Language Processing |
| LLM | Large Language Model |
| RPA | Robotic Process Automation |
| SaaS | Software as a Service |

FAQs on AI ROI Framework

Can custom AI software improve business ROI?

Yes. Custom AI software can address specific operational bottlenecks, remove inefficiencies, improve forecasting, and automate high-cost workflows. The result is higher productivity, better decision-making, and stronger long-term financial returns aligned with business strategy.

Why do most AI investments in finance fail to show clear ROI?

Most artificial intelligence initiatives in the finance industry fail due to unclear objectives, poor data management, a lack of stakeholder alignment, an underestimation of the complexity of integrating the technology, and the absence of key performance indicators.

Is AI software scalable for enterprise use?

Yes. Enterprise-class AI systems are built with modular architectures, cloud-native infrastructure, and robust data pipelines, which allows companies to scale models across departments while maintaining performance and controls and integrating with existing enterprise systems.

How does data quality and availability impact the accuracy of AI ROI measurements?

The ROI of AI depends on the quality of the data, which must be clean, structured, and accessible. Poor data quality will skew the AI model’s output, distorting its performance metrics and making it difficult to measure the AI ROI or justify the investment in the AI model.

Why hire a professional AI software development company?

A professional AI development company helps ensure strategic alignment, sound architecture, model validation, compliance, and scalability, which reduces implementation risk, accelerates time-to-value, and supports measurable results.